You have a building management system in place, the dashboards are live, alarms are flowing, and the vendor has already done the handover. Then the real problem appears. Operators use only a fraction of the interface. Technicians can handle routine issues but stall on diagnostics. Supervisors want better performance, but nobody has translated system capability into repeatable staff capability.

That gap is where most BMS investments either mature or flatten out.

Building management system training works when it is designed as an operating model, not as a one-time orientation. The strongest programmes tie training to daily decisions, fault response, scheduling discipline, and system optimisation. They also recognise a simple truth. Operators, technicians, and managers do not need the same depth of knowledge, and forcing them through the same content wastes time and weakens adoption.

Laying the Foundation for Effective BMS Training

At 6:15 a.m., the first shift logs in, alarms are active, and the building is already telling you where the training gaps are. One operator clears alarms without checking root cause. A technician knows the plant is not behaving normally but cannot trace whether the issue sits in the sequence, the sensor, or the controller. The system is working. The team is not yet working at the same level.

That is the starting point for building management system training. A useful programme begins with the decisions people must make on shift, under time pressure, with live building conditions in front of them. Training should support system return on investment by turning platform capability into repeatable operator, technician, and supervisor performance.

Start with roles, not features

Training plans fail when they mirror the software menu instead of the job.

Role-based design fixes that. Each audience needs a different level of access, judgement, and risk tolerance. If everyone gets the same training, operators get buried in detail they will never use, and technicians still do not get enough practice on fault isolation.

Split your audience by operational responsibility:

Operators: Daily monitoring, schedule changes, setpoint review, alarm acknowledgement, and standard response actions.

Technicians: Sensor checks, sequence validation, controller communications, diagnostics, commissioning support, and corrective changes.

Supervisors or facility leaders: Exception reporting, trend review, work prioritisation, and governance.

I build each role's training around task exposure and consequence: how often people perform a task, and what happens when they get it wrong. If an operator makes a scheduling mistake, comfort and runtime suffer. If a technician misreads sequence logic, the team can waste hours chasing the wrong fault. If a manager cannot read trend exceptions, performance problems stay invisible for weeks.

Run a practical training needs analysis

Before writing a curriculum, map the work. A short front-end analysis saves far more time than it costs because it prevents generic training, duplicate modules, and avoidable retraining later.

Focus on four areas first:

Critical tasks by role: Define what each role must perform alone, what requires approval, and what should stay restricted to senior staff or vendors.

Known failure points: Review alarm history, overrides left in place, scheduling conflicts, missed handoffs, repeat callouts, and trouble tickets that point to user error rather than equipment failure.

Interface friction: Screen layout, naming quality, alarm clarity, and mobile access directly affect training time. Teams learn faster when graphics, point names, and workflows reflect how the building is operated, as noted by J2 Innovations in its BMS guide.

Facility priorities: Match the programme to the site. A hospital team needs stronger coverage on pressurisation, air quality, and response discipline. A commercial office team usually needs more practice with scheduling, energy exceptions, and tenant-driven changes.

For teams that need a structured way to capture this, a training needs analysis template is a useful starting point.
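The "overrides left in place" check from the failure-point review is one of the easiest to automate once you can export the override log. The sketch below assumes a hypothetical export with a point name, a start time, and a release time per override (real BMS exports vary by vendor), and lists overrides still active past an illustrative 24-hour limit.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class OverrideRecord:
    """One row of a hypothetical override-log export; field names are assumptions."""
    point: str
    started: datetime
    released: Optional[datetime]  # None means the override is still in place


def overrides_left_in_place(
    records: List[OverrideRecord],
    now: datetime,
    max_age: timedelta = timedelta(hours=24),  # illustrative limit, not a standard
) -> List[str]:
    """Return the points whose override is still active and older than max_age."""
    return sorted(
        rec.point
        for rec in records
        if rec.released is None and now - rec.started > max_age
    )


if __name__ == "__main__":
    now = datetime(2024, 5, 6, 6, 15)
    log = [
        OverrideRecord("AHU1.SupplyFan.Cmd", datetime(2024, 5, 1, 9, 0), None),
        OverrideRecord("VAV3-12.ZoneTemp.Sp", datetime(2024, 5, 6, 5, 30), None),
        OverrideRecord("CH1.Enable", datetime(2024, 5, 2, 14, 0), datetime(2024, 5, 2, 16, 0)),
    ]
    print(overrides_left_in_place(log, now))
```

Points that keep reappearing on this list are strong candidates for the overrides content in the operator curriculum.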


Tip: If staff cannot describe the first 15 minutes of their shift in clear task language, the training plan is organised around software screens instead of operational work.

Define success in operational terms

“Improve BMS knowledge” is not a training target. It is a placeholder.

Use success measures that a supervisor can observe on shift and a manager can defend in a budget review:

| Training target | What competence looks like |
|---|---|
| Alarm response | Operators classify alarms correctly and follow the right escalation path |
| Scheduling discipline | Staff update schedules without creating conflicts or unnecessary overrides |
| Basic optimisation | Teams can review trends and identify obvious control issues |
| Fault handling | Technicians isolate whether a problem is sensor, sequence, communication, or equipment related |

In this way, training becomes a deployment plan rather than a classroom event. Engineering gets fewer repeat callouts. Operations gets cleaner daily control. Leadership gets better use of the system they already paid for.

Set the foundation properly, and every later decision gets easier: what to teach first, what to assess, what to standardise across sites, and where to spend coaching time for the highest operational return.

Designing Your Core BMS Training Curriculum

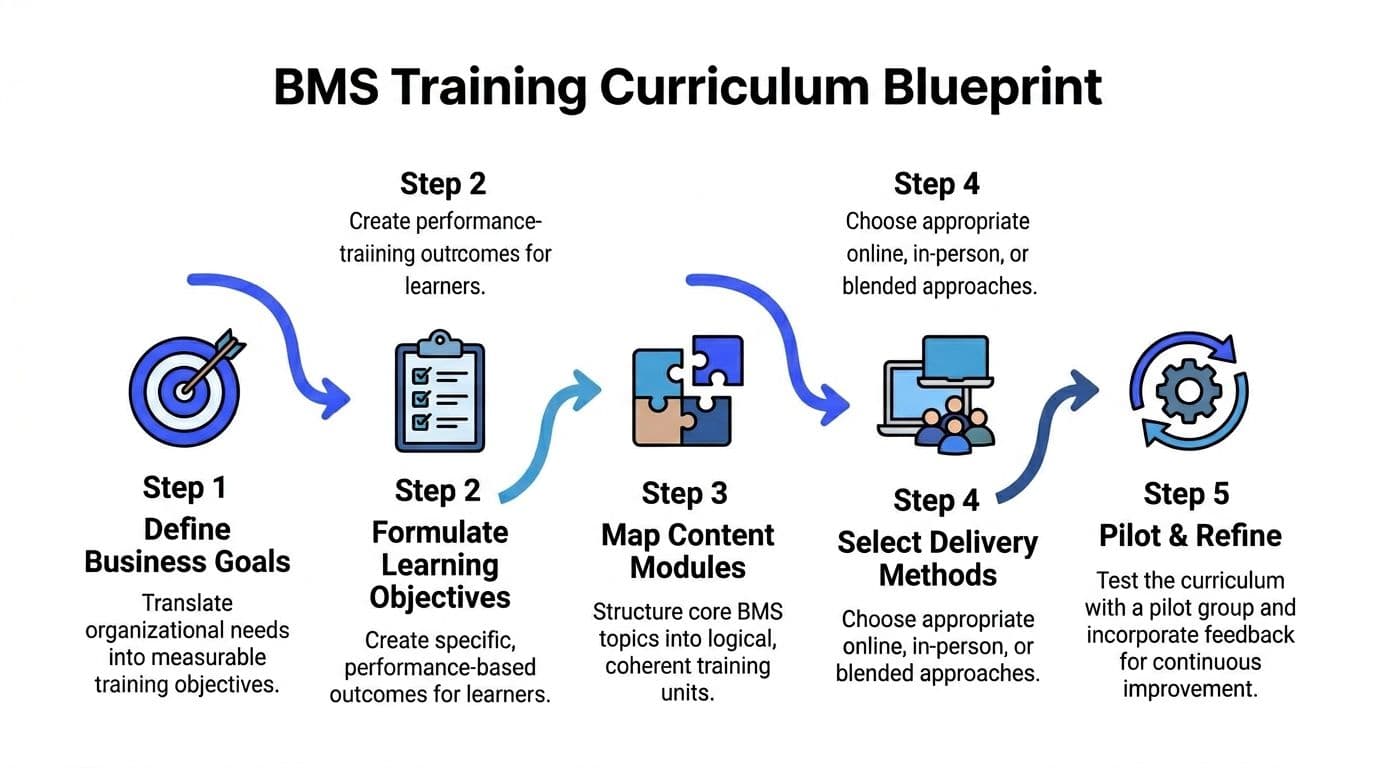

A good curriculum does not try to teach everything at once. It builds competence in layers.

Start by translating operational needs into performance-based learning objectives. If an operator needs to manage schedules, the objective is not “understand scheduling”. It is “adjust a time-of-day schedule without affecting unrelated zones and confirm the change in the interface”. That level of specificity changes how you design the content.

Build the sequence from fundamentals to judgement

The most reliable curriculum shape is progressive:

Foundations first: Interface navigation, naming conventions, point types, alarms, trends, schedules, and control loop basics.

Applied system logic next: How sequences behave in occupied and unoccupied modes, what normal looks like, and how overrides affect downstream behaviour.

Advanced practice last: Diagnostics, optimisation, network awareness, reporting, and troubleshooting across systems.

That progression mirrors how people learn on site. They need orientation before interpretation, and interpretation before diagnosis.

A useful comparison comes from IT training. Network fundamentals often become more practical when learners study them through structured progression and repeated scenarios, which is why resources such as the CompTIA Network+ Study Guide remain useful models for technical curriculum design even outside pure networking.

Choose vendor-neutral or manufacturer-specific on purpose

This is the decision many teams avoid until budget season, and that creates mismatched training.

There is a significant gap in market guidance on the trade-off between vendor-neutral credentials and manufacturer-specific certifications. The UK BMS training market is split between vendor-neutral pathways such as BCIA courses and manufacturer-specific options, with some manufacturer training costing upwards of £1,200 per level, as described in this BMS training pathways article.

The practical choice depends on workforce reality.

When vendor-neutral training works best

Vendor-neutral training is stronger when your team needs transferable thinking.

Use it when:

You manage mixed estates with more than one controls platform.

You hire for long-term capability, not only support for the current install base.

Your technicians need stronger fundamentals in HVAC control logic, sequence reading, and system architecture.

The limitation is speed to platform-specific productivity. Learners may understand the theory but still struggle inside your exact interface.

When manufacturer-specific training works best

Manufacturer-specific training is stronger when the installed system drives the business case.

Use it when:

One platform dominates your estate.

You need staff to become productive in your live environment quickly.

Your service partner expects internal staff to handle first-line changes or diagnostics.

The limitation is portability. Staff may become highly competent on one platform but weaker when systems, employers, or estates change.

Key takeaway: If your problem is immediate operational reliability, start manufacturer-specific. If your problem is long-term bench strength, add vendor-neutral theory underneath it.

A practical curriculum map

I usually structure the curriculum into separate lanes:

| Learner group | Core modules |
|---|---|
| Operators | Interface use, alarms, schedules, trends, standard response procedures |
| Technicians | Sensors, sequences, controllers, communications, graphics, diagnostics |
| Supervisors | Reporting, performance review, exception management, governance |

That prevents two common failures. First, overloading operators with engineering detail they will never use. Second, underpreparing technicians by treating them like power users instead of diagnosticians.

Developing Engaging Hands-On Training Content

The fastest way to lose a BMS learner is to hand them a manual and call it training.

People become competent by doing the work in a controlled setting, then repeating it until their responses become reliable. In technician training, the most practical model combines 4-5 hours of daily field work with 2-3 hours of computer work, covering tasks such as sensor calibration, commissioning, programming, trend analysis, and report generation, according to the HVAC Know It All guide to BMS technician development.

Use task clusters, not isolated lessons

Hands-on content works best when it reflects a real shift pattern.

A technician might start the morning by checking a complaint about space temperature, move into sensor verification, review controller communications, and then return to the workstation to inspect trends and revise seasonal programming logic. Training should mirror that flow.

I prefer to bundle content into task clusters such as:

Sensor and field validation: Calibrate temperature, humidity, CO2, or pressure devices. Confirm readings against expected conditions.

Sequence and control testing: Walk through occupied mode, setback, alarm states, and fail conditions.

Communications troubleshooting: Identify what changes when a point is stale, missing, mislabelled, or mapped incorrectly.

Operator handoff: Explain what changed, what should be monitored, and what not to override.

That last point is often ignored. A technician who cannot explain a change to an operator creates the next service call.
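For the communications troubleshooting cluster, it helps to give trainees a concrete way to separate stale, missing, and mislabelled points. The sketch below assumes two hypothetical inputs: the expected points list for a site and a map of each point's last update time pulled from the BMS. The 15-minute staleness threshold and the point names are illustrative only.

```python
from datetime import datetime, timedelta
from typing import Dict, List, Set


def classify_points(
    expected: Set[str],
    last_seen: Dict[str, datetime],
    now: datetime,
    stale_after: timedelta = timedelta(minutes=15),  # illustrative threshold
) -> Dict[str, List[str]]:
    """Sort points into the buckets a trainee should learn to tell apart.

    expected:  point names from the site's points list (hypothetical input)
    last_seen: point name -> timestamp of its most recent value (hypothetical input)
    """
    seen = set(last_seen)
    return {
        # On the points list but never reported: missing or mapped incorrectly
        "missing": sorted(expected - seen),
        # Reported, but the value has not updated recently: possible comms fault
        "stale": sorted(p for p in expected & seen if now - last_seen[p] > stale_after),
        # Reported but not on the points list: often a mislabelled point
        "unexpected": sorted(seen - expected),
    }
```

With an expected point `AHU1.MAT` that never reports and an unlisted `AHU1.MAT_Temp` that does, the result shows one missing and one unexpected point: the pattern a trainee should recognise as a likely naming or mapping error rather than a failed sensor.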

Make the software side feel real

Computer-based practice should not be a slideshow. It should require decisions.

Good exercises ask learners to:

modify a seasonal control programme

create or interpret graphics

inspect trend data for poor scheduling or unstable control

generate a report a manager could use

Short simulation clips are helpful here. Teams building their own media often benefit from examples of modern training video software because screen-based technical instruction is much more effective when walkthroughs are segmented, annotated, and easy to update.

A useful pattern is to pair field evidence with workstation evidence. If the discharge air reading is suspect in the plant room, the learner should also inspect what that bad value does inside the graphics, alarms, and trend views.
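Trend exercises on unstable control can start from a simple heuristic that learners can also check by eye: a hunting valve reverses direction far more often than a stable one. The sketch below counts direction reversals in a hypothetical valve-position trend (percent open), ignoring moves smaller than a deadband. The 2-point deadband and the limit of 6 reversals per hour are illustrative assumptions, not published thresholds.

```python
from typing import Sequence

# Default thresholds below are illustrative assumptions, not published values.


def count_reversals(samples: Sequence[float], deadband: float = 2.0) -> int:
    """Count direction changes in a trend, ignoring moves smaller than deadband."""
    if not samples:
        return 0
    reversals = 0
    last_direction = 0   # +1 opening, -1 closing, 0 not yet known
    anchor = samples[0]  # last value that counted as a real move
    for value in samples[1:]:
        delta = value - anchor
        if abs(delta) < deadband:
            continue  # treat small wobbles as noise
        direction = 1 if delta > 0 else -1
        if last_direction and direction != last_direction:
            reversals += 1
        last_direction = direction
        anchor = value
    return reversals


def looks_like_hunting(
    samples: Sequence[float],
    interval_minutes: float,
    max_reversals_per_hour: float = 6.0,
    deadband: float = 2.0,
) -> bool:
    """Flag a trend whose reversal rate exceeds the chosen limit."""
    hours = (len(samples) - 1) * interval_minutes / 60
    if hours <= 0:
        return False
    return count_reversals(samples, deadband) / hours > max_reversals_per_hour
```

A flagged trend is a starting point for discussion, not a diagnosis: the learner still has to explain whether loop tuning, a noisy sensor, or competing sequences are behind it.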

Turn documentation into scenarios

Most BMS documentation is extensive and unreadable under pressure.

Do not ask staff to memorise manuals. Convert manuals, SOPs, sequence descriptions, and change-control notes into micro-lessons, guided exercises, and fault scenarios. The content should answer a practical question: “What do I do when this happens?”

For supervisors designing structured practice around live work, this guide to on-the-job training is a helpful reference.

Video is especially useful when you need to demonstrate sequence behaviour or common troubleshooting flows. Used properly, it reinforces classroom and field practice instead of replacing them.

Tip: If a learner can pass your quiz but cannot explain why a valve is hunting or why a zone is in override, the content is too passive.

Assessing Learner Competency and Program Impact

Completion is not competence.

A learner can finish every module and still freeze when a live alarm appears, a trend graph looks wrong, or a schedule change affects the wrong area. Assessment in building management system training has to measure performance in context.

Test the work, not just the memory

The best assessments ask learners to perform realistic tasks under defined conditions.

Examples include:

diagnosing a simulated fault and stating the likely cause

adjusting a schedule to match occupancy without affecting unrelated spaces

reviewing a trend and identifying whether the issue is sequence, sensor, or operator action

responding to an alarm using the correct escalation path

These tasks reveal judgement, not just recall.
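The schedule task in that list lends itself to an objective check. As a minimal sketch, assume the assessor can export the schedule before and after the exercise as a simple zone-to-periods mapping; the `Schedule` shape and zone names are hypothetical, not a vendor format. The comparison reports target zones the learner missed and unrelated zones they changed.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical day schedule: zone name -> list of (start_hour, end_hour) occupied periods.
Schedule = Dict[str, List[Tuple[int, int]]]


def changed_zones(before: Schedule, after: Schedule) -> Set[str]:
    """Zones whose occupied periods differ between the two snapshots."""
    return {z for z in set(before) | set(after) if before.get(z) != after.get(z)}


def check_schedule_task(
    before: Schedule, after: Schedule, target_zones: Set[str]
) -> Dict[str, Set[str]]:
    """Compare the learner's result with the assigned task.

    not_done:   target zones the learner left unchanged
    collateral: zones the learner changed that were not part of the task
    """
    changed = changed_zones(before, after)
    return {
        "not_done": target_zones - changed,
        "collateral": changed - target_zones,
    }


if __name__ == "__main__":
    before = {"L2-East": [(7, 18)], "L2-West": [(7, 18)], "Lobby": [(6, 20)]}
    after = {"L2-East": [(7, 20)], "L2-West": [(7, 18)], "Lobby": [(6, 22)]}
    print(check_schedule_task(before, after, {"L2-East"}))
```

An empty `collateral` set is the evidence for "without affecting unrelated spaces"; the assessor still confirms that the learner verified the change in the interface.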

A written quiz still has a place. It is useful for terminology, navigation, and policy. It should not be your main proof of readiness.

Score against observable standards

Assessment becomes more credible when supervisors use clear criteria. I recommend a simple rubric:

| Competency area | What to observe |
|---|---|
| Accuracy | Did the learner identify the correct issue or action? |
| Process | Did they follow the expected workflow? |
| System awareness | Did they consider upstream or downstream effects? |
| Communication | Could they explain the action to another team member? |

This gives you evidence you can defend. It also makes retraining more precise.
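To keep rubric records consistent across supervisors, even a small structured record helps. The sketch below is one hypothetical way to store a skill check against the four competency areas, with a pass/fail judgement per area and optional notes; the class and field names are illustrative, not part of any particular learning platform.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# The four rubric areas; a simple pass/fail per area is an assumption of this sketch.
RUBRIC_AREAS = ("Accuracy", "Process", "System awareness", "Communication")


@dataclass
class SkillCheck:
    """Hypothetical record of one observed skill check."""
    learner: str
    task: str
    results: Dict[str, bool] = field(default_factory=dict)
    notes: Dict[str, str] = field(default_factory=dict)

    def record(self, area: str, met: bool, note: str = "") -> None:
        """Store the supervisor's observation for one rubric area."""
        if area not in RUBRIC_AREAS:
            raise ValueError(f"Unknown rubric area: {area}")
        self.results[area] = met
        if note:
            self.notes[area] = note

    def retrain_areas(self) -> List[str]:
        """Areas not yet observed meeting the standard (unscored counts as not met)."""
        return [a for a in RUBRIC_AREAS if not self.results.get(a, False)]

    def passed(self) -> bool:
        return not self.retrain_areas()
```

Treating an unscored area as not met is deliberate: a sign-off should not pass because a supervisor skipped a line. The `retrain_areas` list is what turns the rubric into targeted retraining.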

For teams formalising this process, a practical framework for assessment of competency can help standardise how skill checks are built and recorded.

Look for operational signals after training

Programme impact shows up in work patterns before it shows up in presentation decks.

Useful indicators include:

fewer avoidable mistakes in schedules or overrides

cleaner alarm handling

better technician notes

faster movement from issue detection to root-cause investigation

less dependence on a small number of “go-to” experts

Key takeaway: The real test comes during the next abnormal event. If learners cannot apply what they learned in that moment, the programme still has design work to do.

Deploying and Scaling Your BMS Training Program

A rollout looks fine on paper until week three. The night shift missed the live session, a contractor needs limited system access, one site is still using old alarm categories, and a new hire starts after the vendor has already left. That is the point where a training plan either becomes an operating system for the estate or turns into a stack of files nobody trusts.

Scaling BMS training is not mainly a content problem. It is a deployment design problem. The programme has to fit shift patterns, site variation, vendor dependencies, audit requirements, and the fact that staff join at different stages. If those conditions are ignored, even good material produces weak adoption.

Pick the delivery model that matches the estate

A single delivery method rarely holds up across a mixed estate.

On-site instruction still earns its place for commissioning, field validation, and supervised work on live sequences. It is also expensive to repeat and difficult to schedule at scale. Fully online delivery solves the scheduling problem, but it falls short when learners need to interpret plant behaviour, trace cause and effect, or make safe changes under pressure.

A blended model works better because each format does a different job:

Online for foundations: Interface basics, terminology, alarm categories, reporting logic, and local policy.

Instructor-led for applied judgement: Sequence reviews, fault scenarios, and team workflows that need discussion.

Field coaching for sign-off: Sensor checks, point verification, fault isolation, and supervised changes.

That mix is how training programmes scale without losing operational relevance.

Build deployment into routine operations

Training should start automatically from role, not from someone remembering to send an email.

Set up learning paths by job function and access level. Operators need a shorter path into daily use, alarm review, schedules, and overrides. Technicians need deeper work on sequences, trend interpretation, field verification, and controlled changes. Supervisors need reporting, exception review, governance, and audit evidence. Contractors often need a narrower version with time-limited access and clear boundaries.

This structure solves a common scaling problem. One site should not be learning from current modules while another site relies on copied slides and outdated PDFs. Version control, enrolment rules, and completion records need to be part of the programme design from the start.
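One way to make enrolment start from role, and to keep every site on current material, is to hold learning paths and module versions as data rather than in someone's inbox. The sketch below is a minimal, hypothetical model: the module names, version numbers, and 30-day contractor window are illustrative assumptions, and a real learning platform would keep this in its own configuration.

```python
from datetime import date, timedelta
from typing import Dict, List, Optional

# Hypothetical catalogue owned by the central team: module -> current version number.
CURRENT_VERSIONS: Dict[str, int] = {
    "interface-basics": 3,
    "alarms": 4,
    "schedules-and-overrides": 2,
    "sequences": 5,
    "trend-review": 2,
    "reporting": 1,
    "contractor-boundaries": 1,
}

# Role -> ordered learning path (module names are illustrative).
LEARNING_PATHS: Dict[str, List[str]] = {
    "operator": ["interface-basics", "alarms", "schedules-and-overrides"],
    "technician": ["interface-basics", "alarms", "sequences", "trend-review"],
    "supervisor": ["interface-basics", "trend-review", "reporting"],
    "contractor": ["interface-basics", "contractor-boundaries"],
}

CONTRACTOR_ACCESS_DAYS = 30  # assumed time limit for contractor access


def enrol(role: str, start: date) -> Dict[str, object]:
    """Assign the current version of every module on the role's path."""
    modules = {m: CURRENT_VERSIONS[m] for m in LEARNING_PATHS[role]}
    expires: Optional[date] = (
        start + timedelta(days=CONTRACTOR_ACCESS_DAYS) if role == "contractor" else None
    )
    return {"role": role, "modules": modules, "access_expires": expires}


def outdated_modules(site_versions: Dict[str, int]) -> List[str]:
    """Catalogue modules a site is still delivering at an older version."""
    return sorted(
        m for m, v in site_versions.items()
        if m in CURRENT_VERSIONS and v < CURRENT_VERSIONS[m]
    )
```

Running `outdated_modules` against each site's delivered versions gives the central team a simple drift report instead of discovering copied slides during an audit.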

Design for compliance and handover at the same time

Compliance work tends to expose weak deployment decisions.

For example, California Title 24 training expectations include offsite operating instruction for at least one building operator and on-site technician training sized to the project, as outlined in the institutional BMS training requirements document. In practice, that means a scalable programme needs more than lesson content. It needs scheduled delivery, attendance records, role-based completion paths, and a clear handover standard that survives staff turnover.

Manual administration breaks down fast here. If the team is pulling sign-off sheets from binders, vendor manuals, and email chains, the result is delay, missing records, and uneven site readiness.

Standardise the core, localise the application

The strongest training academies do both.

Keep the estate-wide core consistent:

naming conventions

alarm philosophy

scheduling rules

override limits

change-control expectations

escalation standards

Then add site modules for equipment differences, local plant arrangements, critical spaces, and known failure points. I have seen central teams over-standardise and produce tidy training that nobody can use on the plant they run. I have also seen every site build its own materials and lose any chance of consistency. The workable middle ground is a fixed core with controlled local adaptation.
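The "fixed core with controlled local adaptation" rule can be written down as a simple merge policy. As a hypothetical sketch, assume the estate-wide core is a small set of keys, most of them locked and one limit that sites may tighten but never relax, with site content added on top. The keys and values are placeholders drawn from the core list, not recommended settings.

```python
from typing import Any, Dict

# Illustrative estate-wide core; values are placeholders, not recommendations.
CORE_STANDARD: Dict[str, Any] = {
    "naming_convention": "Site-Building-System-Point",
    "alarm_priorities": ("critical", "urgent", "routine"),
    "max_override_hours": 24,
    "change_control": "log every setpoint or sequence change with reason and owner",
}

# Keys no site may change locally (hypothetical policy).
LOCKED_KEYS = {"naming_convention", "alarm_priorities", "change_control"}


def build_site_standard(site_additions: Dict[str, Any]) -> Dict[str, Any]:
    """Merge a site's local content onto the fixed core.

    Locked keys cannot be changed, the override limit may only be tightened,
    and anything else (equipment modules, critical spaces, known faults) is added.
    """
    standard = dict(CORE_STANDARD)  # copy so the core itself is never modified
    for key, value in site_additions.items():
        if key in LOCKED_KEYS:
            raise ValueError(f"'{key}' is part of the estate-wide core")
        if key == "max_override_hours" and value > CORE_STANDARD[key]:
            raise ValueError("sites may tighten the override limit, not relax it")
        standard[key] = value
    return standard
```

Rejecting a conflicting change, rather than silently ignoring it, is the design choice that matters: drift becomes a visible conversation with the central team instead of an unnoticed local edit.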

Scale with a hub-and-spoke model

Centralise what should stay stable. Distribute what depends on the site.

A small central team should own curriculum standards, platform administration, version control, and baseline assessments. Site leads or regional champions should handle local walkthroughs, coaching, and feedback on where users still hesitate. That model is easier to maintain than flying one trainer everywhere, and it avoids the opposite mistake of leaving every site to improvise.

This is the trade-off to manage. Full central control gives consistency but slows response to local changes. Full local control increases speed but creates drift. A hub-and-spoke structure keeps the programme aligned while letting sites train against the equipment and risks they face.

Sustaining Excellence with a Continuous Improvement Loop

BMS training decays faster than most managers expect.

Interfaces change. Sequences get revised. Equipment gets replaced. A strong technician leaves. A site starts using remote access differently. Six months later, the original course still exists, but the work has moved on.

Create a live feedback channel from the field

The best updates come from recurring operational friction, not from annual reviews.

Ask operators where they hesitate. Ask technicians which faults junior staff misread. Ask supervisors which reports nobody trusts. Those answers tell you what to refresh.

Use a lightweight review rhythm:

collect field questions continuously

review incident patterns and recurring confusion

update the smallest useful piece of content

redeploy it quickly

Short refreshers outperform large rewrites because they reach people before habits harden.

Build refresher content around changes and errors

Do not wait for a full curriculum overhaul.

When a new sequence is introduced, create a micro-lesson on that sequence. When staff keep mishandling overrides, publish a short refresher with examples of acceptable and unacceptable use. When remote troubleshooting creates confusion, update the handoff steps.

This keeps the programme relevant without exhausting your team.

Protect institutional knowledge before it walks out

Many estates run on a handful of experienced people who know every naming inconsistency, weak point, and workaround. That knowledge is valuable and fragile.

Capture it in usable forms:

annotated troubleshooting guides

short screen recordings

operator decision trees

common fault libraries

supervisor review checklists

That material turns one person’s hard-won experience into organisational capability.

Tip: Every repeated question is a training asset waiting to be built.

Use a simple improvement loop

A durable loop looks like this:

| Stage | Practical action |
|---|---|
| Measure | Review assessments, field errors, alarm handling, and user questions |
| Refine | Adjust content, sequencing, or role pathways |
| Redeploy | Push updated microlearning, practice tasks, or coaching guides |
| Verify | Observe whether behaviour changed in live operations |

The goal is not perfect content. It is a programme that stays aligned with the system people use.

Strong building management system training does more than support adoption. It creates a facility team that can operate with confidence, troubleshoot with discipline, and improve performance over time.

If you want to turn manuals, SOPs, and technical PDFs into interactive BMS training without building every lesson by hand, Learniverse gives training teams a faster way to create, deliver, and improve role-based learning at scale. It is especially useful when you need consistent onboarding, compliance-ready course delivery, and a cleaner way to keep training current as your systems evolve.