A lot of teams reach the same breaking point in the same way. Training starts in PowerPoint, policy updates live in shared drives, assessments sit in separate forms, and every urgent change turns into a hunt for the latest version. One manager edits the slide deck, another updates the PDF, someone else uploads the wrong file to the LMS, and learners end up taking three different versions of the same course.

That chaos feels manageable when you have a handful of modules. It becomes expensive when you're supporting onboarding, compliance, product training, franchise operations, or role-based enablement across multiple locations. At that point, the primary problem isn't course design. It's production discipline.

An elearning authoring system solves that problem by giving your team a structured way to build, update, standardise, and publish training content. It turns scattered assets into managed learning products. For leadership teams making their first serious investment here, that's the shift to understand: you're not buying a design tool. You're buying a content operations layer for training.

The Growing Challenge of Scalable Corporate Training

The pattern is familiar. A training lead gets asked to update onboarding for a new process. Sales enablement needs revised product messaging. HR needs a policy module refreshed before the next compliance cycle. Operations wants the same content adapted for regional teams. None of those requests is unusual. The problem is that teams are often still using tools that weren't built for controlled learning production.

PowerPoint is fine for presenting. PDFs are fine for reference. Neither is fine for managing an evolving training catalogue with approvals, learner tracking, accessibility checks, review cycles, and LMS deployment. Once content starts changing frequently, manual workflows stop being annoying and start becoming a business risk.

Where the bottleneck usually appears

The first bottleneck is content assembly. Subject matter experts hand over documents, videos, screenshots, and comments in different formats. The second is review. Feedback arrives in email threads, comments, meeting notes, and chat messages. The third is version control. Nobody is fully sure which file is current, which one was approved, or what was published.

That creates three predictable outcomes:

Slow updates: critical training changes wait in queues because every revision is manual

Inconsistent learner experience: one team gets polished interactive learning, another gets a static deck

Weak governance: branding, compliance language, and assessment logic drift across departments

When training teams say they need a faster tool, they usually mean they need fewer handoffs, clearer control, and less rework.

The urgency is real because online learning is no longer a side channel. In 1995, only 4% of organisations used online learning systems. By 2026, that figure had risen to 99%, and 89% of organisations now use an LMS as their core learning technology, according to Growth Engineering's elearning statistics roundup.

Why leadership teams should care

An unmanaged training workflow doesn't just waste L&D time. It slows employee readiness, increases the chance of outdated instruction reaching learners, and makes scale harder than it should be. If you're opening locations, hiring quickly, rolling out new systems, or operating in a regulated environment, poor authoring workflow shows up as operational drag.

An elearning authoring system is the point where training stops being a collection of files and starts becoming a repeatable production process. That matters because scalable training depends less on heroic effort and more on having the right system underneath the work.

Defining the Modern eLearning Authoring System

If an LMS is the library, an elearning authoring system is the printing press. The LMS stores, assigns, and reports on learning. The authoring system creates the learning asset itself.

That distinction clears up a lot of buying mistakes. Teams often expect the LMS to handle course creation well, or they buy an authoring tool without thinking through publishing and tracking. Those tools work together, but they do different jobs.

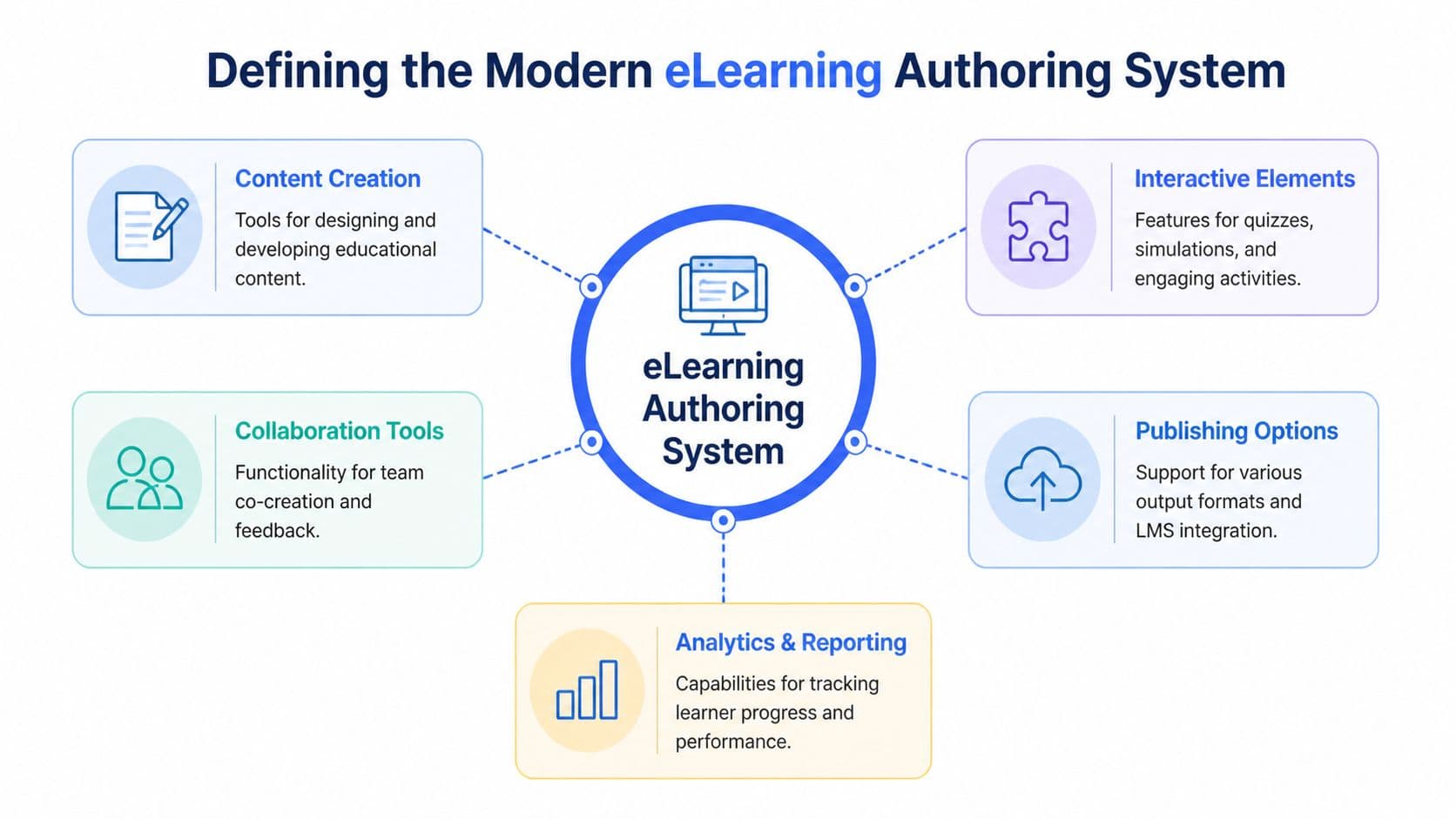

A modern elearning authoring system is the software layer that turns raw knowledge into structured, interactive, trackable training content that can be published and delivered through other learning platforms.

What it actually does

At a practical level, the system takes source material and transforms it into something learners can complete and managers can monitor. The source material might be a slide deck, policy document, product manual, video transcript, SME interview, or website content. The output is a course, lesson, simulation, quiz, or assessment package ready for distribution.

A capable authoring system usually handles work across these layers:

Content structuring: organising information into lessons, topics, and logical learning paths

Experience design: adding interactions, media, knowledge checks, and scenarios

Team workflow: supporting reviews, edits, approvals, and controlled publishing

Output and compatibility: exporting content in formats that work inside your delivery environment

Measurement: capturing completion, scores, progress, and learner behaviour through connected systems

What makes it modern

Older authoring tools were often built like desktop publishing software. They gave skilled designers deep control, but they also depended on specialist users and clunky review cycles. Modern platforms move toward cloud collaboration, reusable templates, and easier publishing across environments.

The difference is similar to the shift from desktop file sharing to modern document collaboration. You're no longer passing files around and hoping someone merges comments correctly. You're building from a common system of record.

A modern elearning authoring system should help your team answer simple but critical questions:

| Question | Why it matters |
| --- | --- |
| Which version is current? | Prevents outdated training from going live |
| Who approved this content? | Supports governance and auditability |
| Can we reuse this module elsewhere? | Reduces duplicated effort |
| Will it work in our LMS? | Avoids deployment friction |
| Can we update once and scale everywhere? | Improves long-term efficiency |

The business view

Leadership teams shouldn't evaluate an authoring system as a creative toy. It sits closer to production infrastructure. It determines how quickly your organisation can convert internal knowledge into consistent training. It also determines how painful updates become six months after launch, which is when many tool decisions start to look either smart or shortsighted.

Core Features That Drive Engagement and Efficiency

A feature sheet rarely tells you how a tool will perform once the work starts piling up.

The test is operational. Can your team build faster without lowering quality? Can subject matter experts update content without breaking design? Can compliance sign off quickly and still get an audit trail? Those are the questions that separate a useful elearning authoring system from software that looks polished in a demo and slows everyone down after purchase.

Content import and rapid course assembly

Few organisations start with a blank page. They start with slide decks, SOPs, policy documents, recorded webinars, and product notes scattered across teams.

That makes import quality a business issue, not a convenience feature. If the tool can pull in source material cleanly, authors spend their time structuring decisions, adding practice, and tightening messages. If import is poor, the team burns hours rebuilding content by hand, and errors slip in during reformatting.

This becomes even more important at scale. Enterprise teams, franchise networks, and regulated businesses often need to turn existing documentation into training quickly, then update it again when policy or product details change. In that context, strong import and rapid assembly are directly tied to production cost, speed to launch, and how many courses a small team can realistically maintain.

Templates and brand control

Templates set the rules for repeatable production. They help a distributed team create courses that feel consistent, meet brand standards, and follow the same learning logic.

Useful template systems usually include:

Locked layouts for recurring use cases: onboarding, compliance, product updates, SOP refreshers

Shared component libraries: disclaimers, intros, assessment blocks, branded callouts

Editable content zones: SMEs can change text and examples without damaging structure

Role-based permissions: reviewers, authors, and admins each have clear limits

There is a trade-off here. Tight templates improve speed and governance, but they can flatten the learning experience if every course follows the same pattern. Loose templates give experienced designers more freedom, but they also create catalogue sprawl. Leadership teams should decide where standardisation matters most and where variation improves learning.
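To make the permission limits concrete, here is a minimal sketch of a role-to-action map. The role names, the actions, and the grants are illustrative assumptions, not any vendor's actual model. The point is only that each role's limits can be written down and checked rather than left to habit.

```python
from enum import Enum, auto


class Action(Enum):
    EDIT_CONTENT = auto()   # change text and examples inside editable zones
    EDIT_LAYOUT = auto()    # change the template structure itself
    COMMENT = auto()
    APPROVE = auto()
    PUBLISH = auto()


# Hypothetical roles and grants; real platforms define their own.
ROLE_PERMISSIONS = {
    "sme": {Action.EDIT_CONTENT, Action.COMMENT},
    "author": {Action.EDIT_CONTENT, Action.EDIT_LAYOUT, Action.COMMENT},
    "reviewer": {Action.COMMENT, Action.APPROVE},
    "admin": set(Action),
}


def can(role: str, action: Action) -> bool:
    """Return True if the role is granted the action; unknown roles get nothing."""
    return action in ROLE_PERMISSIONS.get(role, set())
```

In this sketch an SME can change wording but not layout, which is the locked-template idea expressed as data. Tightening or loosening the map is where the standardisation decision actually gets made.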

Interactivity that supports the job

Interactivity should earn its place. A clickable layer that looks modern but does not improve recall or decision-making adds development time without adding business value.

The strongest interactions are usually simple:

Scenario branches for customer conversations, manager judgement, or compliance choices

Drag-and-drop exercises for process order, categorisation, and workflow checks

Clickable walkthroughs for product knowledge or system steps

Short reflection prompts that make learners pause and apply a rule to their own work

Research reviews from the U.S. Department of Education found that, on average, students in online conditions performed modestly better than those receiving face-to-face instruction alone. The lesson for buyers is straightforward. Digital delivery can work well, but only if the course design asks learners to think, choose, and practise instead of clicking Next through static screens.

A practical rule helps here. If an interaction does not help the learner make a decision, apply a rule, or rehearse a task, cut it.

Assessments and knowledge checks

Assessments shape behaviour. Employees pay attention to what gets tested, and managers use results to judge whether training is doing its job.

A good authoring system makes it easy to add low-friction checks throughout the course rather than saving everything for a final quiz. That matters in long compliance modules, product training for sales teams, and franchise environments where consistency matters across locations.

Look for:

Question types that fit the content: recall, application, sequencing, judgement

Answer feedback that explains the reasoning: not just right or wrong

Logic for retries, branching, and pass thresholds: useful for regulated or role-specific training

Export compatibility with your reporting setup: score and completion data need to pass cleanly into the LMS
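The retry and pass-threshold logic in that list is simple enough to sketch. The 80% threshold, the three-attempt limit, and the outcome labels below are assumptions chosen for illustration. A real authoring system would expose its own settings for these.

```python
from dataclasses import dataclass


@dataclass
class AssessmentPolicy:
    pass_threshold: float = 0.8  # assumed: fraction of questions that must be correct
    max_attempts: int = 3        # assumed retry limit


def next_step(policy: AssessmentPolicy, correct: int, total: int, attempt: int) -> str:
    """Decide what happens after an attempt: 'pass', 'retry', or 'remediate'."""
    if total <= 0:
        raise ValueError("total must be positive")
    score = correct / total
    if score >= policy.pass_threshold:
        return "pass"
    if attempt < policy.max_attempts:
        return "retry"
    # Attempts exhausted: branch to refresher content instead of more retries.
    return "remediate"
```

When you evaluate a tool, it is worth checking whether rules like these can be set per course or per role, since regulated training often needs stricter values than general onboarding.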

If your team is still separating course creation needs from delivery and reporting needs, this breakdown of learning management system features for administrators and training teams helps clarify where the authoring tool should stop and the LMS should take over.

Standards and LMS compatibility

Compatibility problems usually show up late, after money has been spent and launch dates are already set.

A course can look excellent in the authoring environment and still fail in deployment because completion data does not pass correctly, the LMS handles packages inconsistently, or a client requires a standard your platform cannot publish. That risk is highest in enterprise procurement, customer education, and any business serving multiple business units or external partners.

Modern systems should support the standards your organisation uses now and the ones you may need later. SCORM still matters for many LMS environments. xAPI and cmi5 matter when teams want richer tracking, more flexible data capture, or learning that happens beyond a standard browser session, as explained in Teachfloor's guide to elearning authoring tools.
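For readers who have not seen what "richer tracking" looks like, the sketch below builds a single xAPI-style completion statement as a plain Python dictionary. The actor, verb, object, and result structure follows the xAPI statement format, and the verb ID is the widely used ADL "completed" verb. The function name and the example learner and course values are hypothetical.

```python
import json


def completion_statement(email: str, name: str, course_id: str, course_title: str,
                         scaled_score: float, passed: bool) -> dict:
    """Build an xAPI-style statement recording that a learner completed a course."""
    if not -1.0 <= scaled_score <= 1.0:
        raise ValueError("xAPI scaled scores must be between -1 and 1")
    return {
        "actor": {"objectType": "Agent", "name": name, "mbox": f"mailto:{email}"},
        "verb": {
            "id": "http://adlnet.gov/expapi/verbs/completed",
            "display": {"en-US": "completed"},
        },
        "object": {
            "objectType": "Activity",
            "id": course_id,  # an IRI that identifies the course
            "definition": {"name": {"en-US": course_title}},
        },
        "result": {
            "score": {"scaled": scaled_score},
            "success": passed,
            "completion": True,
        },
    }


if __name__ == "__main__":
    # Hypothetical learner and course, for illustration only.
    statement = completion_statement(
        "sam@example.com", "Sam Example",
        "https://example.com/courses/food-safety-101", "Food Safety 101",
        0.9, True,
    )
    print(json.dumps(statement, indent=2))
```

This only shows the shape of the data. Sending statements to a learning record store, and deciding which events are worth recording, depends on your platform.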

Ask direct questions during selection:

Which standards do we need today?

Which standards do clients, partners, or regulated teams require?

How difficult is cross-LMS testing?

What learner and assessment data passes through?

Collaboration, analytics, and accessibility

These features are often evaluated separately. In practice, they affect the same workflow.

Collaboration controls review speed. Analytics determine whether the course can be improved after launch. Accessibility decides whether the training works across your full workforce, including employees using assistive technologies or mobile devices in the field.

If any one of those pieces is weak, production slows down. Email-based reviews create version confusion. Poor analytics leave teams guessing why learners drop off or fail assessments. Late accessibility fixes create expensive rework and launch delays.

For leadership teams, the broader point is simple. The right elearning authoring system is not just a content builder. It is production infrastructure for repeatable, measurable training. The strongest platforms reduce the cost of updates, support scale across business units or franchise locations, and create a foundation for AI-assisted course creation, where speed only helps if the underlying workflow is already disciplined.

Authoring System vs LMS vs Microlearning Platform

Leaders often bundle these tools together because they all sit somewhere in the learning stack. That causes poor purchases. Each platform has a different job to do.

An elearning authoring system creates learning content. An LMS assigns, delivers, and tracks it. A microlearning platform focuses on short-form reinforcement, quick refreshers, and often mobile-friendly nudges. Some products blur categories, but the core roles still matter.

The shortest useful distinction

Authoring system: build the course

LMS: manage the learner and distribute the course

Microlearning platform: reinforce knowledge in small bursts

If you're still mapping your broader training stack, this guide to learning management system features is useful for separating delivery requirements from content creation requirements.

eLearning Tool Comparison: Authoring vs LMS vs Microlearning

| Criterion | eLearning Authoring System | Learning Management System (LMS) | Microlearning Platform |
| --- | --- | --- | --- |
| Primary function | Create and package training content | Assign, deliver, track, and manage learning | Deliver short, focused learning moments |
| Typical content format | Courses, modules, quizzes, simulations, assessments | Programmes, learning paths, hosted courses, reports | Short lessons, refreshers, prompts, reinforcement activities |
| Main users | Instructional designers, SMEs, L&D teams, agencies | L&D admins, managers, HR, operations leaders | Learners, managers, enablement teams |
| Key metrics | Content readiness, assessment performance, interaction data | Completion, compliance, enrolment, progress, assignment status | Engagement, repeat use, reinforcement uptake |
| Best use case | Building custom content at scale | Running training operations | Supporting recall and behaviour reinforcement |
| Strength | Content production and control | Administration and governance of learners | Speed and ease of consumption |
| Limitation | Doesn't replace learner management | Usually weaker for deep content creation | Not ideal for full-length structured courses |
| How it integrates | Exports or publishes into LMS or related systems | Hosts or launches authored content | Complements formal training and follow-up learning |

Where teams get confused

The most common confusion happens when a company buys an LMS and expects native authoring to be enough for all use cases. It usually isn't. Built-in course builders inside LMS platforms can be fine for simple pages, checklists, and lightweight quizzes. They often struggle when you need stronger visual consistency, scenario-based learning, reusable templates, or a repeatable content workflow across multiple authors.

The second confusion is treating microlearning as a replacement for structured training. It isn't. Microlearning works well for reinforcement, reminders, and role-specific refreshers. It doesn't replace formal onboarding, certification, or compliance instruction that requires sequencing, records, and rigorous assessments.

Use the authoring system for production, the LMS for administration, and the microlearning platform for reinforcement. The stack works better when each tool stays in its lane.

The practical buying rule

If your biggest pain is creating and updating content, start with authoring capability. If your biggest pain is assignment, reporting, and learner administration, the LMS decision comes first. If your formal training already exists but behaviour fades after launch, add microlearning as a reinforcement layer.

How to Select the Right Authoring System for Your Business

The right elearning authoring system depends less on industry labels and more on operating conditions. A franchise network doesn't need the same controls as a consultancy. A healthcare provider doesn't face the same content risk as a retail brand. The selection process gets better when you stop asking, "Which tool has the most features?" and start asking, "Which failure modes can we not afford?"

Start with governance, not interface polish

A slick editor can hide operational weakness. That's why selection should begin with control questions:

Who is allowed to create, edit, approve, and publish content?

How do updates flow across many courses or locations?

How is brand and compliance language protected?

What happens when multiple teams build at once?

Governance tends to break before design does. As noted in Gomo Learning's discussion of authoring tool myths, the operational burden of content governance and version control at scale is often overlooked, even though preventing fragmentation and enforcing brand and compliance consistency across large course libraries is a primary driver of ROI for franchises and large enterprises.

A broader content-governance lens also helps clarify where an authoring platform ends and where an LCMS may become relevant. This overview of learning content management systems is useful if your team is managing a growing shared catalogue rather than isolated courses.

What different business models should prioritise

Enterprise teams

Enterprise buyers should focus on scale discipline. That includes permissions, review workflow, single sign-on alignment, reusable assets, and support for multiple deployment environments. Enterprise teams often have decentralised experts and central governance, which means the tool has to support both distributed contribution and controlled publishing.

The wrong fit is usually a tool that feels quick in a pilot but falls apart when several departments start producing content simultaneously.

Franchise and multi-location operations

Franchise organisations need tight brand control with enough flexibility for local relevance. Look for template locking, central asset libraries, easy localisation, and a clear approval path for regional adaptations. Without those controls, every location starts producing its own version of training, and consistency collapses.

For this group, the authoring system isn't just a course builder. It's a way to stop operational drift.

Regulated industries

Healthcare, finance, manufacturing, and similar sectors should put compliance workflow near the top of the list. Audit trails, approval records, controlled updates, assessment evidence, and accessibility processes matter more than flashy interactions.

One of the biggest blind spots in the market is accessibility operations. Many tools present accessibility as a checklist feature, but the fundamental challenge is the ongoing workload required to maintain standards across a growing library. If your team works in a regulated environment, ask how the platform supports remediation, review, and repeatable compliance practice over time.

A regulated organisation shouldn't choose an authoring system based on how quickly it can publish a course. It should choose based on how reliably it can defend that course later.

Agencies and consultants

Agencies need flexibility across clients. That usually means multi-brand production, straightforward collaboration with external reviewers, reusable frameworks, and export options that fit different client ecosystems. White-labelling and tenant separation can matter as much as design capability.

The poor fit here is a tool that works beautifully for one in-house academy but becomes cumbersome when handling multiple client brands and approval chains.

A simple evaluation scorecard

Use a short scorecard in vendor review meetings. Keep it practical.

| Evaluation area | What to ask |
| --- | --- |
| Governance | Can we control roles, approvals, and publishing rights? |
| Reuse | Can shared elements be updated without rebuilding multiple courses? |
| Compatibility | Will this work cleanly with our current delivery environment? |
| Collaboration | Can SMEs and reviewers participate without creating bottlenecks? |
| Accessibility workflow | How is compliance maintained over time, not just at launch? |
| Scalability | Will this still work when our catalogue and contributor pool grow? |
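If you want the scorecard to produce a comparable number per vendor, a weighted average is one simple option. The six areas below come from the scorecard. The weights and the 1 to 5 rating scale are assumptions you would replace with your own priorities.

```python
# Evaluation areas come from the scorecard; the weights are illustrative assumptions.
WEIGHTS = {
    "governance": 0.25,
    "reuse": 0.15,
    "compatibility": 0.20,
    "collaboration": 0.15,
    "accessibility_workflow": 0.15,
    "scalability": 0.10,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings into a single weighted score on the same 1-5 scale."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for area in WEIGHTS:
        if not 1 <= ratings[area] <= 5:
            raise ValueError(f"{area} rating must be between 1 and 5")
    total_weight = sum(WEIGHTS.values())
    # Dividing by the total keeps the result on the 1-5 scale even if weights change.
    return sum(WEIGHTS[area] * ratings[area] for area in WEIGHTS) / total_weight
```

A regulated business might shift weight toward governance and accessibility workflow, while an agency might weight compatibility more heavily. The number is a conversation aid, not a substitute for the pilot.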

What usually works and what usually doesn't

What tends to work:

Pilot with a real course, not a polished vendor demo

Test with your actual reviewers, including compliance or legal if relevant

Use one high-change training use case to expose update workflow quality

Check export and reporting early, before procurement momentum makes reversal difficult

What tends to fail:

Choosing on ease of first build alone

Ignoring governance until the catalogue is already sprawling

Letting one power user's preference determine a system-wide decision

Assuming accessibility and review processes will somehow sort themselves out later

A good elearning authoring system should make your team more organised as volume increases. If the platform only works when a small number of skilled people carefully manage everything by hand, it won't hold up under business growth.

Implementation Workflow and The Rise of AI Automation

Most training teams still build courses through a familiar sequence. Gather source material. Draft a storyboard. Build screens. Add interactions. Route the course for review. Consolidate feedback. Revise. Export. Upload. Test. Fix something that broke in the LMS. Repeat.

That process isn't wrong. It's just labour-heavy.

The traditional workflow and where it drags

The manual path has hidden cost in every stage:

Content gathering often means chasing SMEs for files, notes, screenshots, and clarifications.

Storyboarding helps quality, but it also creates another handoff layer if the build happens elsewhere.

Production slows down when interactions, assessments, and formatting are built screen by screen.

Review becomes messy when comments are fragmented across channels.

Publishing and maintenance create recurring work every time source material changes.

None of that is surprising. The issue is scale. Once the catalogue grows, every update inherits the same manual burden.

Where AI changes the economics

AI automation changes the workflow by collapsing several early-stage tasks into one connected process. Instead of treating source documents as references that humans must manually convert into courses, newer systems can ingest those materials and generate a first structured draft with lessons, quizzes, and sequencing already in place.

That doesn't remove the need for human judgement. It changes where human effort goes. Less time goes into formatting and first-pass assembly. More time goes into accuracy, relevance, context, and quality control.

For example, a platform such as Learniverse can turn uploaded documents or web content into structured training assets, which is a different operating model from traditional authoring. If you're evaluating this category, this overview of auto course creation software is a useful reference point for what automated workflow now looks like in practice.

What to automate first

Not every part of course creation should be automated equally. Start with the repetitive layers:

Source ingestion: convert manuals, PDFs, and policy documents into draft lesson structures

Assessment generation: create first-pass knowledge checks tied to the source content

Content updates: refresh training when source documentation changes

Accessibility review support: surface issues earlier in production

Translation preparation: structure content so localised variants are easier to manage

That last point matters more than many teams realise. If your organisation supports multiple languages, translation workflow becomes part of authoring workflow. For teams dealing with localisation files and AI-assisted language handling, TranslateBot's view on AI for .po files is a useful parallel because it shows how automation can help with structured language assets while still requiring review discipline.
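To show what "draft lesson structures" means at the simplest level, the sketch below splits a source document into lessons wherever it finds a heading. This is plain rule-based splitting, not AI generation, and it assumes the source has already been converted to text with `#`-style headings. The function name and output fields are hypothetical.

```python
import re


def draft_outline(source_text: str) -> list[dict]:
    """Split text on '#'-style headings into draft lessons with a rough word count."""
    lessons = []
    current = None
    for line in source_text.splitlines():
        match = re.match(r"^#{1,6}\s+(.*\S)\s*$", line)
        if match:
            # Each heading starts a new draft lesson.
            current = {"title": match.group(1), "body": []}
            lessons.append(current)
        elif current is not None and line.strip():
            # Text before the first heading is ignored in this sketch.
            current["body"].append(line.strip())
    return [
        {"title": lesson["title"],
         "word_count": sum(len(paragraph.split()) for paragraph in lesson["body"])}
        for lesson in lessons
    ]
```

AI-assisted platforms go well beyond this, but the output has the same basic shape: a list of titled units that a human then reviews for accuracy and relevance.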

Accessibility is becoming an automation test

A lot of platforms say they support accessibility. Fewer help teams manage the operational burden of keeping a large course library compliant over time. That's where AI has practical value. As discussed in eLearning Industry's article on overlooked authoring tool features, a key advantage for regulated sectors lies in platforms that automate accessibility auditing and reduce the specialised effort needed to maintain WCAG 2.1 AA or AAA standards across many courses.

That changes implementation priorities. Accessibility should no longer sit at the end as a final review step. Teams should look for workflow support that flags issues during build and update cycles.
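As a small illustration of flagging issues during build, the sketch below scans course HTML for images with no `alt` attribute, using only Python's standard library. It covers one narrow check related to WCAG text alternatives and is nowhere near a full audit. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect <img> tags that have no alt attribute at all."""

    def __init__(self) -> None:
        super().__init__()
        self.missing: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attr_map = dict(attrs)
            # An empty alt="" is allowed for decorative images, so only a
            # completely absent attribute is flagged here.
            if "alt" not in attr_map:
                self.missing.append(attr_map.get("src") or "<unknown source>")


def images_missing_alt(html: str) -> list[str]:
    """Return the src of every image in the HTML that lacks an alt attribute."""
    finder = MissingAltFinder()
    finder.feed(html)
    finder.close()
    return finder.missing
```

Running a check like this on every save or publish is the workflow shift the section describes: the issue surfaces while the author is still in the course, not weeks later in a final review.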

The most useful AI in learning design doesn't replace judgement. It removes repetitive production work so judgement can be applied where it matters.

A better implementation rhythm

Whether you're using a traditional authoring suite or a newer AI-assisted platform, the strongest implementation rhythm looks like this:

Begin with a governed template set so every course doesn't start from zero

Use real source material rather than rewriting content prematurely

Assign one accountable owner per course even in collaborative builds

Review for decision quality, not just wording

Treat publishing as the start of optimisation, not the end of production


The broader shift is straightforward. Traditional authoring systems improved digital course production. AI-assisted authoring is changing the cost structure of that production. For teams under pressure to launch more training without proportionally increasing headcount, that's not a marginal improvement. It's a different planning model.

Your Next Step in eLearning Authoring

A leadership team usually feels the limits of its current approach at the same point. Product training is late, compliance updates are stuck in review, franchise locations are teaching different versions of the same process, and every urgent change depends on one or two people who know how the old tools work.

That is the point where an elearning authoring system stops being a design purchase and becomes an operating decision. The platform you choose shapes how quickly training moves from source material to live course, how consistently standards are applied, and how expensive each revision becomes over time.

For enterprise teams, that affects scale. For franchises, it affects brand and process consistency across locations. For regulated businesses, it affects version control, review traceability, and the risk that outdated guidance stays live longer than it should. The wrong system creates hidden costs long after procurement is complete. The right one gives the team a repeatable production model.

That is also why feature checklists only get you part of the way. A tool can look strong in a demo and still fail in practice if it does not match your review workflow, your subject matter expert capacity, or the pace of content change in the business. The better question is simple. Will this system reduce production effort while improving control as our catalogue grows?

AI now matters in that answer. Earlier authoring tools improved how teams built digital learning. AI-assisted authoring changes how much manual work the team has to carry, especially when courses start from documents, policies, manuals, and existing web content. That shift has direct ROI implications because it changes production capacity without requiring the same increase in headcount.

Start with an audit of how training is built today. Look at where work stalls, who creates bottlenecks, how often content is updated, and which audiences create the most operational risk if training slips. Then assess authoring systems against those constraints, not polished demo scenarios. That is how teams choose a platform that still fits six months after rollout, when reviewer volume rises, content libraries expand, and the business asks for faster turnaround again.

If you're exploring a more automated approach, Learniverse is worth a look. It helps teams turn existing documents, manuals, and web content into interactive courses, quizzes, and branded training experiences with less manual setup, which can be especially useful for organisations trying to scale training without scaling admin work at the same pace.