You've probably been handed a familiar brief. The team needs onboarding videos. Compliance wants proof people completed training. Operations wants fewer repeat mistakes. Leadership wants it done quickly and cheaply. Someone says, “Let's just record a few videos.”

That's where many training projects go sideways.

If you want to learn how to create online training videos that help the business, don't start with cameras, editing software, or animated intros. Start with the operational problem. Then build a video system that people will finish, managers can track, and your team can update without rebuilding everything every quarter.

Most guides stop at production mechanics. That's useful, but incomplete. A training video isn't valuable because it exists. It's valuable when it changes behaviour, shortens ramp time, improves consistency, or reduces risk. That standard should shape every decision you make, from scripting to hosting.

Aligning Training Videos with Business Goals

The biggest mistake new training managers make is treating the video as the project.

It isn't. The project is the business outcome. The video is one delivery method.

Existing guidance on training video creation tends to focus on scripting, visuals, and tools, while leaving a major gap around business measurement and ROI. That gap matters because teams need a way to connect training to operational outcomes, not just completion data, as noted in this analysis of interactive training video creation gaps.

Start with the failure you need to reduce

Before you write a script, ask four blunt questions:

What's going wrong today?

Who is affected?

What behaviour needs to change?

How will we know the change happened?

That shifts the conversation from “we need a training video” to “we need fewer customer handoff errors” or “new hires are taking too long to handle standard requests without help.”

That distinction changes everything. If the issue is inconsistent execution across locations, your video needs standardised demonstrations and job-specific examples. If the issue is compliance drift, your video needs clear decision rules, checks for understanding, and auditable completion records.

Build a KPI map before production

You don't need a complicated dashboard on day one. You do need a simple map that links training activity to business evidence.

A practical version looks like this:

| Business problem | Learner behaviour to change | Training evidence | Business evidence |
| --- | --- | --- | --- |
| Slow onboarding | New hires complete core tasks correctly | video completion, quiz results, manager sign-off | shorter time to independent work |
| Process inconsistency | Staff follow the same sequence every time | scenario responses, replay hotspots, assessment results | fewer escalations or rework cases |
| Compliance risk | Staff recognise and respond to high-risk situations properly | required completions, pass marks, policy acknowledgement | stronger audit readiness |

Don't promise dramatic savings before the first video goes live. That's how training loses credibility. Instead, agree on what evidence leadership will accept. If operations leaders care about fewer process deviations, measure that. If HR cares about onboarding speed, track that. If compliance cares about records, prove records.
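If your team keeps the KPI map in a script or spreadsheet export rather than a slide, a minimal sketch like the one below can flag rows that are not yet linked to evidence. The class name `KpiMapRow`, the `gaps` method, and the `ready_to_record` helper are illustrative assumptions, not part of any particular tool; the second row is left incomplete on purpose.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class KpiMapRow:
    """One row of the KPI map: a business problem linked to its evidence."""
    business_problem: str
    behaviour_to_change: str
    training_evidence: List[str] = field(default_factory=list)
    business_evidence: List[str] = field(default_factory=list)

    def gaps(self) -> List[str]:
        """Return the names of the columns that are still empty."""
        missing = []
        if not self.behaviour_to_change.strip():
            missing.append("behaviour_to_change")
        if not self.training_evidence:
            missing.append("training_evidence")
        if not self.business_evidence:
            missing.append("business_evidence")
        return missing


def ready_to_record(rows: List[KpiMapRow]) -> bool:
    """A map is ready only when every row has all of its columns filled in."""
    return all(not row.gaps() for row in rows)


kpi_map = [
    KpiMapRow(
        "Slow onboarding",
        "New hires complete core tasks correctly",
        ["video completion", "quiz results", "manager sign-off"],
        ["shorter time to independent work"],
    ),
    # Deliberately incomplete: no business evidence agreed with leadership yet.
    KpiMapRow(
        "Process inconsistency",
        "Staff follow the same sequence every time",
        ["scenario responses", "replay hotspots", "assessment results"],
        [],
    ),
]

for row in kpi_map:
    print(row.business_problem, "->", row.gaps() or "complete")
print("Ready to record:", ready_to_record(kpi_map))
```

The check is deliberately crude. It only confirms that each column has something in it, not that leadership has accepted the evidence, which still takes a conversation.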

Practical rule: If you can't state the operational problem in one sentence, you're not ready to record.

Get stakeholder buy-in with operational language

Training teams often present video projects in learning language only. Leaders outside L&D usually respond better to operational language.

Say this:

Consistency across sites: Every learner gets the same explanation and demonstration.

Faster updates: One approved source can be republished without pulling managers into repeated live sessions.

Better proof: Completion records, assessment results, and content version control are easier to review than verbal handoffs.

Don't say this:

Engaging multimedia learning experience

Creative knowledge transfer initiative

Transformational content ecosystem

That language sounds polished but rarely survives budget review.

Decide what not to film

Not every problem needs video. If employees just need a checklist, publish a checklist. If the process changes weekly, a static PDF or live huddle may be more practical until the workflow settles.

Use video when one of these is true:

Demonstration matters: People need to see a task done correctly.

Consistency matters: Different trainers are currently explaining the same thing in different ways.

Scale matters: You need one approved version across many people or locations.

Replay matters: Learners will need to revisit the content while working.

That's the first real test of how to create online training videos effectively. Don't ask, “Can we make a video?” Ask, “Will a video solve the problem better than the alternatives?”

Scripting and Storyboarding for Learner Retention

Weak scripts create expensive edits.

You can fix lighting in post. You can trim pauses. You can add captions. You can't easily rescue a video that rambles, buries the point, or forces the learner to guess what matters.

A reliable starting structure comes from a simple three-part formula: introduction, authentic demonstration, and recap with next steps, supported by guidance on SME interviews and prep workflow.

Use a three-part script that respects attention

Here's the structure that works in corporate training:

Part one: introduction. State who the training is for, what task or decision it covers, and why it matters. By doing so, you establish relevance and credibility.

Part two: demonstration. Show the exact process, judgement call, or interaction. Use a real workflow, not a vague summary. If it's software training, record the clicks. If it's a compliance issue, show the scenario and the right response.

Part three: recap and next step. Reinforce the key rule or sequence. Then tell the learner what to do next. That might be a short quiz, a follow-up module, or a manager-led practice task.

That structure keeps you out of the two worst script traps: overexplaining context and ending without action.

Interview SMEs like an editor, not a court reporter

New managers often ask SMEs to “talk through the topic.” That produces a long transcript filled with side roads, caveats, and insider language.

A better approach uses open questions:

What does a new employee usually get wrong first?

What signals tell you the situation is becoming risky?

What should the employee do before they escalate?

What language do you want them to use with a customer or patient?

Where does policy get misread in real life?

Open questions surface judgement, not just facts. That's what makes training useful.

Run a mock interview with a colleague first. You'll catch missing terms, awkward question order, and sections that need examples. That review saves time and reduces the odds of dragging your SME into another recording session.

If the SME gives you policy language and no real-world example, keep asking. Learners remember decisions in context, not abstract statements.

Turn the script into a storyboard

A storyboard doesn't need to look like a film production board. For most corporate teams, a clean table is enough.

| Scene | Visual | On-screen text | Narration | Purpose |
| --- | --- | --- | --- | --- |
| Opening | presenter or title slide | course title and role relevance | what this covers and why it matters | orient learner |
| Demo step | software screen or live task | step label | explain action and reason | teach process |
| Risk point | highlighted field or wrong example | common error | explain what to avoid | prevent mistakes |
| Close | summary slide | key takeaway | recap and direct next action | reinforce retention |

If you need examples of how to lay this out clearly, these storyboard examples for training content are a useful reference point.

Write for speech, not for reading

Scripts that read well on a page often sound stiff on camera.

Use shorter sentences. Cut stacked clauses. Replace policy wording with plain language where accuracy allows. Put terms people need to remember on screen instead of making the presenter repeat them three times.

A good script review asks:

Can a first-time employee follow this without translation from a manager?

Does every scene earn its place?

Are we teaching the task, the judgement, or both?

Can the presenter say this naturally?

If the answer to the last question is no, rewrite it. A natural delivery beats a “perfect” sentence every time.

Recording High-Quality Video and Audio on a Budget

Teams often overspend on the wrong things.

They worry about camera quality, branded backdrops, and cinematic edits. Meanwhile, the main problems are echo, bad framing, dim lighting, and screen recordings with tiny unreadable text. Learners will forgive a simple setup. They won't forgive audio they have to fight through.

Put your budget into clarity

If you can only improve three things, improve these:

Audio first: Use an external microphone instead of the laptop mic when possible. Even an inexpensive USB mic or wired lavalier usually improves intelligibility.

Lighting second: Face a window or use a simple light positioned in front of the speaker. Overhead office lighting alone often creates shadows and a tired look.

Framing third: Keep the camera at eye level and clean up the background. A neutral office wall beats a cluttered bookshelf full of distractions.

That's the production 80/20. Don't chase polish before you've fixed clarity.

A practical low-cost setup

You can record solid training videos with tools many teams already have.

| Need | Good budget option | What to watch for |
| --- | --- | --- |
| Camera | smartphone or built-in webcam | stabilise it, use landscape orientation |
| Microphone | USB mic or clip-on lav | test echo and plosives before recording |
| Lighting | window light or basic LED light | avoid strong backlighting |
| Screen capture | desktop recording software | zoom interface elements before recording |

If you're comparing software without wanting to spend much, this list of low-cost recording tools from Budget Loadout is a sensible place to start.

Record software tutorials differently from talking-head videos

A lot of first-time creators use the same production style for everything. That usually weakens both formats.

For software or process walkthroughs:

record at a resolution that keeps text readable

zoom browser windows or application panels before you begin

hide irrelevant notifications and tabs

move the cursor deliberately, not constantly

narrate the reason behind each action, not just the click path

For presenter-led videos:

use a slide deck or talking points beside the camera

keep the shot steady

ask the speaker to pause and restart a sentence if needed instead of pushing through mistakes

If learners need to revisit fine detail, pairing the final module with replay support can help. A feature set like audio and video replay in learning content is useful when the task requires repeated review.

A quick demonstration helps less experienced presenters see what “clear enough” looks like before they overcomplicate the setup.

Don't record until you run a technical rehearsal

A five-minute rehearsal catches most avoidable problems:

microphone not selected

screen text too small

glare on glasses

camera slightly below eye line

background noise from HVAC or office traffic

This is the part inexperienced teams skip because it feels minor. It isn't. One rehearsal can prevent a complete re-record.

Record a short sample, play it back with headphones, and check whether the learner can understand every word without strain.

That's a better quality standard than “looks professional.”

Editing for Engagement and Accessibility

Editing is where training videos become usable.

Raw footage often contains every bad habit that weakens learning: long openings, repeated phrases, unnecessary context, and examples that arrive too late. Good editing removes friction. Great editing also makes the content easier to understand, easier to revisit, and easier to complete on a busy workday.

Cut for microlearning, not for completeness

A lot of managers assume one topic equals one video. It usually shouldn't.

Microlearning videos in the 1 to 6 minute range generate 50% higher engagement rates, improve learning effectiveness by 17%, and are preferred by 94% of learners, according to these online learning statistics on microlearning. The same source also notes 6 to 10 minutes as a better range for foundational training.

That doesn't mean every video must be extremely short. It means each video should do one job well.

Edit into decision-sized chunks

A useful editing pass asks, “What single question does this segment answer?”

That creates tighter modules such as:

how to log the case correctly

when to escalate to a manager

what wording to use in a customer call

how to verify a required document

what to do if the system response looks wrong

Those are easier to assign, easier to update, and easier for learners to replay on demand than one long lesson called “Customer Service Onboarding”.

A simple segmentation model:

| Content type | Better edit choice |
| --- | --- |
| quick task | one short clip focused on one action |
| foundational concept | a slightly longer module with one example |
| complex workflow | several linked clips with a checkpoint between them |
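To apply the length guidance during an editing pass, a rough sketch like this can sort rough-cut clips by running time. The thresholds reuse the 1 to 6 minute and 6 to 10 minute ranges cited above. Treating them as hard cut-offs is a simplifying assumption, and the function name, labels, and clip titles are illustrative.

```python
def classify_length(duration_seconds: float) -> str:
    """Place a clip into one of the length bands discussed above."""
    minutes = duration_seconds / 60
    if minutes < 1:
        return "shorter than the cited range"
    if minutes <= 6:
        return "microlearning"
    if minutes <= 10:
        return "foundational"
    # Past ten minutes, the clip is probably doing more than one job.
    return "consider splitting"


# Hypothetical rough-cut durations, in seconds.
clips = {
    "How to log the case correctly": 150,
    "When to escalate to a manager": 480,
    "Customer Service Onboarding (full)": 1500,
}

for title, seconds in clips.items():
    print(f"{title}: {classify_length(seconds)}")
```

The label is a prompt for the editor, not a verdict. A clip flagged for splitting still needs the "what single question does this segment answer?" test to decide where the cuts go.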

Use visual reinforcement sparingly

On-screen text should support the narration, not duplicate every sentence.

Good additions include:

step names

policy terms learners must recognise

warning labels for common errors

callouts that point to the exact button, field, or form area

brief summaries at the end of a segment

Less helpful additions include stock footage that has nothing to do with the process, animated transitions on every cut, and paragraphs of text pasted onto slides.

The edit should reduce mental load, not increase it.

Accessibility is part of quality control

A training video without captions is harder to use in offices, shared spaces, mobile settings, and multilingual teams. It also creates unnecessary barriers for learners who rely on text support.

At a minimum:

Add accurate captions: Review auto-generated captions carefully. Industry terms, names, and acronyms are often wrong.

Provide a transcript: This helps learners skim, search, and review.

Check on-screen text size: If a learner can't read the label on a laptop, the edit failed.

Avoid colour-only cues: If red and green are your only signals, some learners will miss the distinction.

If your team needs a simple workflow, this guide on how to make content accessible for your audience is a practical reference.

Accessibility work often improves completion because it removes friction for everyone, not only for learners with formal accommodation needs.

That's the connection many teams miss. Engagement and accessibility aren't separate editing goals. They reinforce each other.

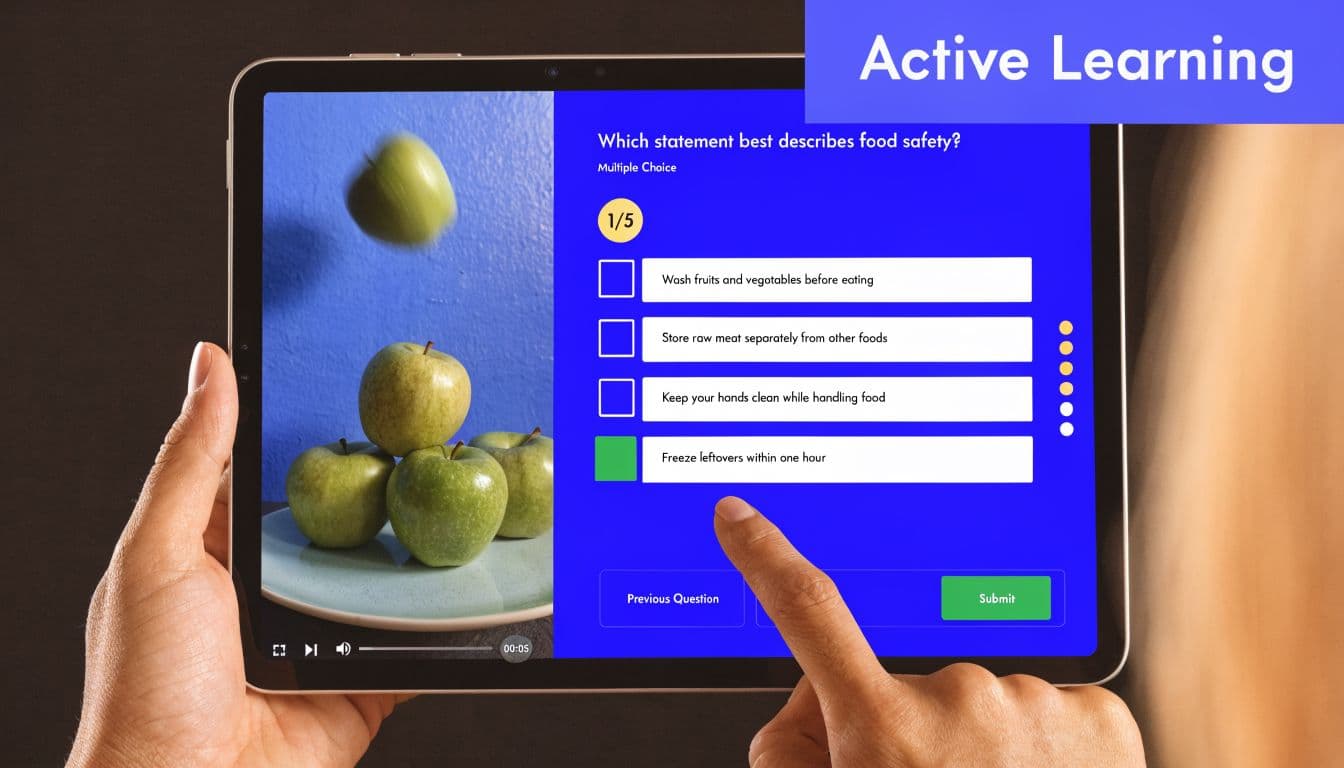

Adding Interactivity and Assessments

Passive video is better than no training. It's still a compromise.

If a learner can click play, drift mentally, and still get completion credit, you haven't built training. You've built a viewing event. That's a problem in onboarding, and it's a serious problem in compliance, safety, and decision-based roles.

Add checks where people usually guess

Interactivity works best when it appears at moments where employees commonly make errors. Don't interrupt every minute with a quiz. Interrupt when a wrong choice in real work would matter.

That can be as simple as:

a multiple-choice question after a policy scenario

a click-to-identify hotspot on the correct field in a form

a short poll that asks what the learner would do next

a branch that sends the learner to different follow-up content based on their answer

The point isn't novelty. The point is evidence. A good interaction shows whether the learner understood the rule, recognised the risk, or can apply the process.

Match the interaction to the skill

Different content needs different assessment methods.

| Training need | Better interaction |
| --- | --- |
| remembering a required step | quick knowledge check |
| spotting an error | hotspot or annotated choice |
| making a judgement call | branching scenario |
| testing confidence before supervisor review | recap quiz with feedback |

Branching deserves special attention. It's one of the few ways video-based training can approximate decision-making under pressure. For a new manager learning incident response, or a frontline worker handling a difficult customer, a branch can show consequences without exposing the business to real-world risk.

Keep feedback immediate and specific

A weak quiz only tells learners whether they were right or wrong. A useful quiz tells them why.

For example:

Wrong because the employee skipped a required verification step

Wrong because escalation should happen before the refund is promised

Correct, but only after documenting the exception in the approved system

That kind of feedback turns assessment into learning instead of grading.
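One way to make specific feedback hard to skip is to store the explanation next to every answer option, so a question cannot be authored without it. The sketch below assumes you draft questions in a script or export before loading them into a platform. The `Option` and `Question` classes, the refund scenario, and the wording of the feedback are hypothetical examples.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Option:
    text: str
    correct: bool
    feedback: str  # the "why", shown immediately after the learner answers


@dataclass
class Question:
    prompt: str
    options: List[Option]

    def respond(self, choice: int) -> str:
        """Return the verdict plus the reason for the chosen option."""
        option = self.options[choice]
        verdict = "Correct" if option.correct else "Not quite"
        return f"{verdict}: {option.feedback}"

    def missing_feedback(self) -> List[str]:
        """List options that would only tell the learner right or wrong."""
        return [o.text for o in self.options if not o.feedback.strip()]


refund_question = Question(
    prompt="A customer asks for a refund outside the standard window. "
           "What do you do first?",
    options=[
        Option("Promise the refund, then tell your manager", False,
               "escalation should happen before the refund is promised."),
        Option("Escalate to a manager before promising anything", True,
               "escalating first keeps the exception inside the approved process."),
        Option("Decline and close the case", False, ""),
    ],
)

print(refund_question.respond(0))
print("Options still missing feedback:", refund_question.missing_feedback())
```

Running `missing_feedback` across a whole question bank before publishing is a cheap way to catch quizzes that grade without teaching.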

If you need ideas for making learning content more participatory, Bulby's tips for interactive presentations offer several formats that transfer well into video-based training.

The best interaction is the one that catches a mistake before a customer, regulator, or manager does.

Don't confuse activity with understanding

Some teams add interactions because the platform makes them available. That often creates noise.

Avoid:

trivia that tests memory on details nobody uses

drag-and-drop tasks that look impressive but teach nothing

branching paths with no realistic consequences

quizzes placed at random rather than at risk points

Use interaction where it helps answer a hard question: Can this employee apply the training in context?

That's a much higher standard than “Did they watch the video?”

Choosing the Right Hosting and Delivery Platform

Where the video lives affects whether the training stays manageable.

This decision gets underestimated because it feels administrative. It isn't. Hosting determines update speed, reporting depth, access control, and whether you can serve different learner groups without duplicating your entire library.

Current guidance often misses the harder operational issue for distributed teams: personalisation, localisation, and efficient updates across roles and locations. That gap is outlined in this discussion of training video challenges for diverse workforces.

Compare the three main options honestly

Each platform type solves a different problem.

| Option | Works well for | Main limitation |
| --- | --- | --- |
| Self-hosting | simple public access, full control | more technical overhead, weaker training workflows |
| Third-party video platform | quick publishing, easy playback | limited learning paths and assessment depth |
| LMS or eLearning platform | tracked learning, role-based assignment, assessments | more setup and governance required |

Self-hosting suits public-facing education or internal libraries where tracking isn't critical. It's less attractive if you need robust completion records, role-specific assignments, or structured pathways.

Third-party video platforms are easy to deploy and familiar to users. They're often fine for communications, product explainers, or optional learning content. They're weaker when the training needs to prove competence, not just views.

LMS and eLearning platforms are stronger when accountability matters. They can assign content by role, track completion, attach quizzes, and support recurring programs. For teams comparing options, this overview of online course platform types and trade-offs is a helpful shortlist.

Choose based on update pressure and audience complexity

A central question is how often your content changes.

If policies, forms, or system screens change regularly, your platform should make updates simple. If you support multiple job roles or regions, your platform should let you vary assignments and pathways without making ten copies of the same course.

That's where many organisations outgrow a basic video host.

A practical selection checklist:

Audience control: Can you assign by role, team, or location?

Assessment support: Can you attach quizzes, acknowledgements, or branching?

Reporting: Can managers see more than view counts?

Update agility: Can you swap or revise content without rebuilding everything?

Localisation workflow: Can you maintain versions clearly when language or policy differs?

Don't let your library turn into a content graveyard

Many teams start with a folder of videos and end with a mess: duplicate titles, old policy versions, and no clear ownership.

Prevent that early:

name videos by task and audience

assign an owner for each module

set a review cadence

archive outdated versions visibly

keep source files organised, not scattered across laptops
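If your library index lives in a spreadsheet or export, the ownership and review-cadence rules above can be checked with a short script. This is a sketch under stated assumptions: the `Module` fields, the 180-day default cadence, and the sample titles are all hypothetical, and `today` is passed in explicitly so the check is repeatable.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Module:
    title: str                    # named by task and audience
    owner: Optional[str]          # None means nobody is accountable
    last_reviewed: date
    review_every_days: int = 180  # assumed cadence; set your own
    archived: bool = False


def needs_attention(modules: List[Module], today: date) -> List[str]:
    """Flag live modules that have no owner or are past their review date."""
    issues = []
    for module in modules:
        if module.archived:
            continue  # archived versions are kept visible but not reviewed
        if not module.owner:
            issues.append(f"{module.title}: no owner")
        overdue_after = timedelta(days=module.review_every_days)
        if today - module.last_reviewed > overdue_after:
            issues.append(f"{module.title}: review overdue")
    return issues


library = [
    Module("Log a case (service agents)", "Ops training lead", date(2025, 3, 1)),
    Module("Verify documents (branch staff)", None, date(2024, 6, 1)),
    Module("Old refund policy (all staff)", None, date(2023, 1, 1), archived=True),
]

for issue in needs_attention(library, today=date(2025, 6, 1)):
    print(issue)
```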

A platform won't solve poor governance by itself. But the wrong platform makes governance harder.

If your workforce is distributed, multilingual, or heavily regulated, don't settle for “where can we upload this?” Ask, “How will we maintain this library without losing control?”

Measuring Success and Scaling with AI

At this point, the project either earns credibility or becomes another content archive.

Once the videos are live, your job isn't to report views and move on. Your job is to show whether the training changed anything that leadership cares about. That requires a clean link between the business problem you defined earlier and the learner evidence you're collecting now.

Track learning signals that lead to operational proof

View count is weak evidence. Completion rate alone isn't much stronger.

Better signals include:

Completion by required audience: Who finished, who didn't, and where the gaps are

Assessment performance: Whether learners can answer or apply the material correctly

Interaction data: Which decision points cause confusion

Replay behaviour: Where people return because the task is difficult or unclear

Manager verification: Whether learners can perform the task correctly after training

Those signals become useful when you compare them against the operational metric attached to the project. For onboarding, that might be readiness for independent work. For compliance, it might be cleaner adherence to required process steps. For service roles, it might be fewer preventable escalations.
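The first signal, completion by required audience, is easy to compute from two exports: the list of people the module was assigned to and the list of people who finished it. The sketch below assumes those exports exist in some form. The function names, learner IDs, and site names are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Set


def completion_rate(required: Dict[str, str], completed: Set[str]) -> float:
    """Share of required learners who finished (0.0 when nobody is assigned)."""
    if not required:
        return 0.0
    done = sum(1 for learner in required if learner in completed)
    return done / len(required)


def completion_gaps(required: Dict[str, str], completed: Set[str]) -> Dict[str, List[str]]:
    """Group learners who have not finished by their team or location."""
    gaps = defaultdict(list)
    for learner, team in required.items():
        if learner not in completed:
            gaps[team].append(learner)
    return {team: sorted(names) for team, names in gaps.items()}


# Hypothetical exports: learner ID mapped to team, and the IDs that completed.
required = {"ana": "Site A", "ben": "Site A", "chi": "Site B", "dev": "Site B"}
completed = {"ana", "chi", "dev", "guest-viewer"}

print(f"Completion: {completion_rate(required, completed):.0%}")
print("Gaps by team:", completion_gaps(required, completed))
```

Measuring against the required audience, rather than everyone who pressed play, keeps optional or accidental viewers from inflating the figure.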

Use a simple evidence chain

A practical review model looks like this:

| Layer | Question |
| --- | --- |
| Training activity | Did the right people complete the right modules? |
| Learning evidence | Did they understand and apply the content in assessments? |
| Behaviour evidence | Are managers seeing the expected actions on the job? |
| Business evidence | Did the original operational problem improve? |

That sequence matters. If completion is high but behaviour doesn't change, the content may be too abstract. If learners fail the same assessment item repeatedly, the script or demonstration may be unclear. If behaviour improves but the business metric doesn't move, the original problem may involve process design, staffing, or tooling rather than training alone.
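The reasoning above can be written as a simple top-down check that stops at the first layer that fails. This is an illustrative sketch, not a measurement method: the four yes/no inputs are judgements your team makes from its own data, the function name is an assumption, and the first message (low completion) is an added assumption that the text above does not cover.

```python
def diagnose(completion_high: bool,
             assessments_passed: bool,
             behaviour_changed: bool,
             business_metric_improved: bool) -> str:
    """Walk the evidence chain in order and report the first broken link."""
    if not completion_high:
        # Assumption: this layer is not discussed in the article text.
        return "Fix assignment and follow-up before judging the content."
    if not assessments_passed:
        return "The script or demonstration may be unclear."
    if not behaviour_changed:
        return "The content may be too abstract."
    if not business_metric_improved:
        return ("The original problem may involve process design, "
                "staffing, or tooling rather than training alone.")
    return "The evidence chain holds from training activity to business result."


# Example: people finish and pass, but managers see no change on the job.
print(diagnose(True, True, False, False))
```

Checking the layers in order matters because a later layer tells you little if an earlier one has already failed.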

That last point is worth saying plainly. Training can solve a lot. It can't fix a broken workflow by itself.

Good reporting doesn't prove training always worked. It shows where training helped, where it didn't, and what needs to change next.

AI now has a practical role in scaling production

For lean training teams, production volume is often the bottleneck. You may have manuals, SOPs, slide decks, and policy documents, but not enough time to turn them into polished learning assets.

There's growing evidence that AI-assisted production belongs in the practical toolkit. A UCL study found that AI-generated training videos matched human-recorded videos in learning outcomes and engagement, and learners completed courses 20% faster with no decline in performance, as reported in this summary of the UCL synthetic video training findings.

That doesn't mean every video should become an avatar-led production. It does mean teams should stop assuming human-presented video is always superior.

Use AI where it reduces repetitive work:

turning source documents into first-draft scripts

generating narration for straightforward procedural modules

converting long training into shorter lessons

producing quiz drafts from existing content

updating standard modules when source material changes

One platform in this category is Learniverse, which turns PDFs, manuals, and web content into structured training modules with quizzes and tracking. For teams managing frequent updates or distributed delivery, that kind of workflow can reduce manual build time.

Scale with standards, not just speed

AI helps most when your content operation is already organised.

That means:

approved source documents

named content owners

standard templates for module structure

review rules for legal, HR, or compliance sign-off

a clear process for retiring outdated material

Without that discipline, AI will only help you produce inconsistencies faster.

The strongest training teams treat video as a managed system. They plan against business outcomes, script tightly, record clearly, edit for usability, add interaction where it matters, choose delivery platforms with operational logic, and review performance with honesty. That's how to create online training videos that get used, get trusted, and keep their value after launch.

If you're building training with a small team and need a faster way to turn existing PDFs, manuals, and web content into structured learning, Learniverse is worth a look. It helps training managers create interactive courses, quizzes, and microlearning lessons with built-in tracking, which is useful when you need to scale content without adding more admin.