Training teams have a cost problem, not just a content problem. In 2024, organizations reported an average of 13.7 formal learning hours used per employee, down from 17.4 in 2023, while the average cost per learning hour rose 34% to $165, according to the ATD 2025 State of the Industry Report.

That changes the conversation around learning in organizations.

The old model assumed you could keep adding courses, schedule more workshops, and rely on manual administration to hold the system together. That model is getting more expensive while producing less formal learning time. If you're running training across franchises, frontline teams, regulated environments, or growing SMBs, you can already feel the strain. Content ages fast. Managers want faster onboarding. Compliance updates keep coming. The team responsible for learning usually isn't getting more time to manage all of it.

The practical response isn't to chase more content. It's to build a learning ecosystem that uses formal training carefully, supports learning in daily work, and automates the repetitive parts of delivery and maintenance. That's where modern L&D is heading. Not because it's fashionable, but because the economics now demand it.

The Modern Challenge in Organizational Learning

Most organizations still treat learning as an event. They launch a course, assign it, track completion, and move on. That works for a narrow compliance requirement. It doesn't work for capability building at scale.

Why the old model breaks down

Formal training has always been the most visible part of L&D. It's easy to budget, easy to schedule, and easy to report. It's also the easiest part to overbuild.

When formal learning time drops while cost per hour rises, every low-value activity becomes more expensive. Long slide-based modules. Duplicate content across departments. Manual updates every time a process changes. Instructor-led sessions used for information that could have been delivered asynchronously.

Those aren't just annoyances. They're operating costs.

Practical rule: If a training asset takes longer to maintain than it takes learners to use, your system is likely overengineered.
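The rule above can be turned into a rough check. The sketch below is illustrative only: the `TrainingAsset` fields, the sample figures, and the choice to compare yearly maintenance hours against total yearly learner hours are all assumptions, not a method the rule itself prescribes.

```python
from dataclasses import dataclass


@dataclass
class TrainingAsset:
    # All fields are hypothetical inputs pulled from your own records.
    name: str
    maintenance_hours_per_year: float  # time spent keeping the asset current
    learner_minutes: float             # time one learner needs to complete it
    learners_per_year: int             # how many people use it in a year

    def learner_hours_per_year(self) -> float:
        # Total time all learners spend on the asset in a year.
        return self.learner_minutes * self.learners_per_year / 60

    def is_overengineered(self) -> bool:
        # Flag the asset when upkeep outweighs total learner usage.
        return self.maintenance_hours_per_year > self.learner_hours_per_year()


assets = [
    TrainingAsset("POS refund walkthrough", 6, 10, 120),
    TrainingAsset("Legacy slide module", 40, 45, 30),
]

flagged = [asset.name for asset in assets if asset.is_overengineered()]
print(flagged)
```

A flag here is a prompt to shorten, merge, or retire the asset, not an automatic verdict. Some low-traffic assets, such as those covering rare but high-risk tasks, may still justify their upkeep.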

Many teams misdiagnose the problem at this point. They think they need better content. Often they need better architecture.

What efficient learning systems do differently

High-functioning learning systems usually share a few traits:

They reserve formal learning for high-value moments. Onboarding milestones, risk-heavy tasks, management transitions, and major process shifts deserve structured learning.

They move reference material out of courses. Policies, manuals, and SOPs shouldn't all live inside long modules.

They reduce admin work. Course creation, updates, tagging, and path assignment shouldn't consume the bulk of L&D capacity.

They connect training to work. The closer the learning sits to actual tasks, the more likely people are to use it.

For franchise operators and smaller companies, this matters even more. You don't have the luxury of building every programme by hand. You need repeatability, speed, and enough flexibility to adapt by role or location.

A modern learning strategy starts by accepting one hard truth. Formal training alone can't carry organizational learning anymore. It has to be part of a broader system.

Understanding the DNA of a Learning Organization

A learning organization behaves less like a library and more like a living system. It senses changes, interprets what they mean, and adjusts how people work.

Think of learning as an organizational nervous system

Every business receives signals. A customer complaint. A process failure. A safety incident. A drop in conversion. A new policy requirement. A new product line.

Weak learning organizations react late. Information stays trapped inside teams. People patch the immediate issue and carry on.

Strong learning organizations do something else. They turn signals into action. They spot patterns, share lessons, redesign workflows, and make future performance more reliable.

This is the core meaning of learning in organizations. Not course volume. Not LMS activity. Behavioural adaptation.

This matters competitively. Organizations with strong learning cultures are 92% more likely to innovate, and AI usage in L&D rose to 25% in 2024, according to Docebo's review of learning and development trends. The practical read is simple. Teams that learn well also tend to adopt better tools faster.

Single-loop and double-loop learning

These two ideas sound academic until you put them into a daily business scenario.

Single-loop learning fixes the immediate error.

A store manager notices that staff are submitting inventory counts incorrectly. The response is to remind the team how to complete the form and require another submission.

Useful? Yes. Sufficient? Not always.

Double-loop learning questions the system that created the error.

The same manager asks different questions. Was the form confusing? Were instructions buried in a manual? Did the workflow force staff to guess? Was the training too generic for the store's actual process?

That second response changes the conditions, not just the outcome.

A mature learning culture doesn't just correct people. It redesigns the environment so the same mistake is less likely to happen again.

What a real learning culture looks like

A learning culture isn't free snacks and access to a course catalogue. It's visible in operating habits.

Look for signs like these:

Managers discuss skill gaps openly. They treat capability as an operational issue, not a personal weakness.

Teams capture workarounds. Informal knowledge doesn't stay trapped with one experienced employee.

Feedback changes systems. If learners keep getting stuck at the same point, the process or content gets revised.

Leaders protect time for practice and reflection. Not constantly, but deliberately.

What doesn't work is declaring that learning matters while rewarding only speed, output, and short-term firefighting. People learn what the organization values.

How to assess your own maturity

Ask four direct questions:

When something goes wrong, do you only retrain people, or do you also inspect the workflow?

Can frontline knowledge move upward fast enough to change policy or process?

Do managers know the critical skills for each role?

Can your learning system adapt without a complete rebuild every time the business changes?

If the answer is mostly no, the issue isn't motivation. It's design.

Key Models for Building a Learning Ecosystem

Teams typically do not need more theory. They need a small set of models that help them decide where learning should happen and how support should be structured.

The role of 70-20-10

The 70-20-10 model remains useful because it forces realism. It reminds leaders that most capability doesn't come from formal training alone. It develops through work, coaching, collaboration, and repetition.

If you want a deeper breakdown, this guide on the 70-20-10 model in learning and development is a practical reference.

The mistake is treating 70-20-10 as a rigid formula. It isn't. It's a planning lens.

In practice, the model pushes teams to ask better questions:

Are employees learning this by doing the work?

Do managers have the tools to coach it?

Does formal training only cover what needs structure?

Communities of practice

A community of practice is a structured way to let people in similar roles share methods, decisions, and lessons from the field.

This is especially useful in distributed environments. Franchise managers, regional sales leads, claims teams, clinical educators, and onboarding specialists often solve the same problems repeatedly without seeing each other's fixes.

A community of practice helps prevent that waste. But it needs curation. Without a clear purpose, it turns into another chat channel where nothing is retained.

Comparing key organizational learning models

| Model | Core Principle | Best For | Implementation Focus |
| --- | --- | --- | --- |
| 70-20-10 | Most learning happens through work, support, and limited formal instruction | Capability building tied to daily performance | Align formal training with job tasks, coaching, and practice |
| Communities of Practice | Peers improve performance by sharing expertise in role-based groups | Distributed teams, specialist roles, operational consistency | Define a role-based community, recurring discussions, and knowledge capture |
| Formal Programme Design | Structured instruction is reserved for critical knowledge and high-risk tasks | Compliance, onboarding, certification, process shifts | Build clear pathways, assessments, and reinforcement moments |
| Workflow Learning Support | People need access to guidance at the moment of need | Fast-moving operations, frontline execution, process adherence | Embed job aids, searchable resources, and short refreshers into work |

When each model works and when it doesn't

70-20-10 works when managers are willing to coach and when tasks can be learned through repetition. It struggles when management capability is weak or roles are highly regulated.

Communities of practice work when members face similar recurring issues and someone curates the exchange. They fail when participation is optional but unsupported.

Formal programmes work when the content must be standardized. They fail when teams use them as a storage bin for every piece of information.

Workflow learning support works when employees can access it quickly. It fails when the resource library is cluttered, outdated, or disconnected from real tasks.

The best learning ecosystems don't choose one model. They assign each model to the problem it solves best.

That is the discipline many organizations skip. They overinvest in whatever tool they already have, usually an LMS, and then expect it to solve coaching, knowledge sharing, onboarding, and performance support at the same time.

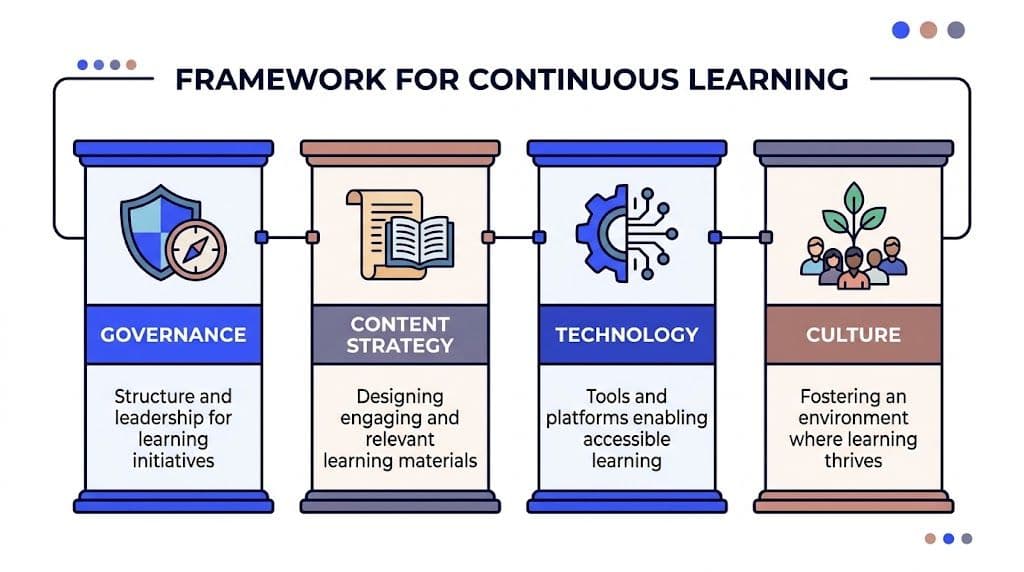

A Practical Framework for Continuous Learning

A scalable learning system needs structure. The simplest way to build it is around four pillars: governance, content strategy, technology, and culture.

Governance

If nobody owns decisions, learning becomes a side project.

Governance answers practical questions. Who approves critical content? Who decides which skills matter by role? Who can retire outdated material? Who owns onboarding versus compliance versus manager development?

In smaller organizations, governance can stay light. A training lead, an HR partner, and one operational sponsor may be enough. In larger or regulated environments, ownership needs to be explicit by domain.

What doesn't work is letting every department create training independently with no common standards. That produces duplicated modules, conflicting instructions, and a reporting mess.

Content strategy

Most organizations already have more source material than they realize. Manuals, SOPs, policy PDFs, process documents, call scripts, checklists, playbooks, internal FAQs, and recorded walkthroughs all contain usable learning content.

The issue is conversion.

A content strategy should define:

What becomes formal training

What stays as reference material

What gets turned into microlearning

What requires periodic review

What must be personalized by role, region, or risk level

AI changes the operating model here. Instead of rebuilding from scratch, teams can convert existing documents into structured learning assets and keep updates closer to the source material.

One practical example is Learniverse, which can turn PDFs, manuals, or web content into interactive courses, quizzes, and microlearning lessons. That matters when your team needs to publish quickly without hand-building every module.

Technology

Technology should reduce friction, not add another admin layer.

A useful stack usually does four things well:

Creates learning assets efficiently

Delivers them across roles and locations

Supports personalization

Produces usable analytics

The strongest platforms also support learning paths, not just standalone courses. That matters because capability rarely develops in one sitting. It develops across a sequence of exposure, practice, feedback, and reinforcement.

There is measurable upside here. Adaptive platforms that create personalized learning paths from performance data can increase the transfer of knowledge to on-the-job impact by 32%. For Canadian SMBs, AI-driven microlearning automation has cut onboarding time by 45%, from four weeks to 2.2 weeks, based on the analysis cited by eLearning Industry on learning metrics that matter.

Culture

Culture determines whether the system gets used.

You can have strong governance, clean content, and solid tooling, then still fail because managers treat learning as optional overhead. Employees read that signal immediately.

Build the culture through operating choices:

Managers discuss development in one-to-ones

Teams share local improvements

New knowledge is captured, not lost

Learning time is linked to real business priorities

A simple build sequence

Don't try to perfect all four pillars at once. Build in this order:

Start with role clarity. Define the critical skills and decisions for a small number of roles.

Then fix content flow. Identify which existing materials can become training, support, or reference.

Next, choose enabling technology. Prioritize speed of creation, update control, and analytics.

Finally reinforce with manager habits. The system sticks when leaders use it in everyday performance conversations.

That sequence keeps learning in organizations practical. It starts with work, not with software.

Activating Your Learning Strategy with Change Management

Good learning design fails all the time for one reason. The organization never changes its behaviour around it.

Leaders approve the platform. L&D launches new pathways. A pilot goes live. Then managers keep using old job aids, teams stay inside familiar shortcuts, and the new system becomes another layer of work.

That's why change management isn't separate from learning strategy. It is part of implementation.

Build the business case in operational terms

Executives rarely resist learning because they dislike development. They resist vague proposals.

Tie the strategy to problems they already care about:

Onboarding drag

Inconsistent execution across sites

Compliance exposure

Knowledge loss when experienced staff leave

Too much manual effort in training operations

The case gets stronger when you show trade-offs clearly. If content updates currently require manual rebuilding across multiple courses, say so. If managers spend time reteaching material that should already be standardized, say that too.

A useful companion resource is this overview of the change management process, especially if you're formalizing implementation across multiple teams.

Why employee buy-in usually stalls

Most resistance isn't ideological. It's practical.

Employees disengage when:

They can't see how training helps them do the job

The content feels generic

Managers don't reinforce it

The new process adds clicks without reducing confusion

People will adopt a new learning system when it saves time, removes uncertainty, or helps them perform better. If it does none of those things, comms won't fix the problem.

Field note: Adoption improves when the first learning experience solves an immediate issue the learner already cares about.

Start with a pilot that has visible pain

Don't begin with the broadest possible rollout. Start where the pain is obvious.

Strong pilot candidates usually include a messy onboarding flow, a frequently updated process, a high-risk compliance area, or a role with persistent performance variance across teams. Those use cases create natural urgency.

Once the pilot is live, collect practical feedback fast. Not abstract reactions. Ask what helped, what slowed people down, and what still required manager intervention.

Later in the rollout, a short visual can help leaders align on the shift from plan to practice.

Equip managers before you train employees

Many L&D rollouts train the workforce first and brief managers later. That sequence creates drag.

Managers need three things early:

What is changing

Why it matters operationally

What they are expected to do differently

Keep that manager toolkit simple. Talking points. Escalation path. Reinforcement checklist. A view of where to find role-based materials. Nothing bloated.

Make participation visible

Learning becomes real when leaders talk about it in ordinary rhythms of work. Team huddles. One-to-ones. Quarterly reviews. Site visits. Operational updates.

If the initiative only appears in launch emails, people will treat it like a campaign. If leaders use it in performance conversations, people will treat it like part of the job.

Measuring What Matters: KPIs and Learning Analytics

Most learning dashboards report activity. Executives want impact.

That gap is why many L&D teams struggle to defend budget. Completion rates and attendance numbers are useful, but they don't answer the core question. Did capability improve in a way the business can feel?

Separate leading and lagging indicators

A practical dashboard includes both.

Leading indicators show whether the learning process is functioning:

Engagement with assigned pathways

Assessment performance

Manager follow-through

Use of reinforcement materials

Progress by role or location

Lagging indicators show whether learning changed outcomes:

Time to productivity

Retention in key roles

Compliance performance

Operational consistency

Quality or customer-related performance trends

Don't overload the dashboard. Each audience needs a different view. Operations leaders need role readiness and execution risk. HR leaders need retention and ramp-up. Trainers need content performance and learner friction points.

Connect learning data to workforce data

A significant shift happens when learning analytics stop living alone.

Integrating learning analytics with HRIS data in Canadian firms has been linked to targeted skills gap closure that boosts employee retention by up to 25% within 12 months. Organizations using integrated data also achieve 18% faster time-to-productivity for new hires, with ROI often exceeding 3x the training cost, according to the source cited in McKinsey's discussion of steps to drive effective organizational learning.

That means you should stop asking only who completed the course. Ask better questions:

Which roles improved fastest after a pathway change?

Where are skill gaps persistent even after training?

Which manager populations are reinforcing learning well?

Which content assets correlate with stronger ramp-up?

A focused training analytics dashboard can help teams structure these views without defaulting to vanity metrics.

If you can't connect learning activity to role performance, retention, or readiness, the dashboard is reporting motion rather than value.
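A minimal sketch of that connection, assuming both systems can export records keyed by a shared employee ID. The field names (`employee_id`, `pathway`, `days_to_productive`) and the sample data are hypothetical, and a real HRIS or learning platform will have its own export format.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical learning-platform export: who completed which pathway.
learning_records = [
    {"employee_id": "E1", "pathway": "onboarding-v2", "completed": True},
    {"employee_id": "E2", "pathway": "onboarding-v2", "completed": True},
    {"employee_id": "E3", "pathway": "onboarding-v1", "completed": True},
    {"employee_id": "E4", "pathway": "onboarding-v1", "completed": False},
]

# Hypothetical HRIS export: days until each hire was signed off as productive.
hris_records = {
    "E1": {"role": "cashier", "days_to_productive": 18},
    "E2": {"role": "cashier", "days_to_productive": 22},
    "E3": {"role": "cashier", "days_to_productive": 30},
    "E4": {"role": "cashier", "days_to_productive": 41},
}


def ramp_by_pathway(learning, hris):
    """Average days-to-productive per pathway, counting completers only."""
    days = defaultdict(list)
    for record in learning:
        person = hris.get(record["employee_id"])
        if person is None or not record["completed"]:
            continue  # skip unmatched IDs and unfinished pathways
        days[record["pathway"]].append(person["days_to_productive"])
    return {pathway: mean(values) for pathway, values in days.items()}


summary = ramp_by_pathway(learning_records, hris_records)
print(summary)
```

A gap between pathways in a view like this is a reason to investigate, not proof that one pathway caused faster ramp-up. Role mix, location, and manager behaviour can all drive the same difference.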

Build the dashboard around decisions

The dashboard should support action, not just reporting.

A good review rhythm looks like this:

| Decision Area | Question to Review | Likely Action |
| --- | --- | --- |
| Content quality | Where are learners dropping off or failing to apply knowledge? | Rewrite, shorten, or restructure the asset |
| Role readiness | Which teams are slowest to ramp? | Add manager coaching or role-specific reinforcement |
| Compliance risk | Which locations show weak understanding on critical topics? | Trigger targeted refreshers and local follow-up |
| Programme ROI | Which pathways align with better workforce outcomes? | Expand investment in the strongest-performing pathways |

That turns learning in organizations into an operating system for capability, not just a catalogue of courses.
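The review rhythm in the table can be expressed as simple rules that map a metrics snapshot to suggested actions. This is a sketch under stated assumptions: the metric names and threshold values are hypothetical placeholders, and only three of the four decision areas are covered because programme ROI usually needs a comparison across pathways rather than a single threshold.

```python
# Hypothetical thresholds; agree on real values with each decision owner.
THRESHOLDS = {
    "drop_off_rate": 0.30,        # share of learners abandoning an asset
    "ramp_days": 30,              # days for a team to reach readiness
    "critical_quiz_score": 0.80,  # minimum score on critical compliance topics
}


def review_actions(metrics: dict) -> list[str]:
    """Return the table's suggested actions for one review snapshot."""
    actions = []
    if metrics["drop_off_rate"] > THRESHOLDS["drop_off_rate"]:
        actions.append("Rewrite, shorten, or restructure the asset")
    if metrics["ramp_days"] > THRESHOLDS["ramp_days"]:
        actions.append("Add manager coaching or role-specific reinforcement")
    if metrics["critical_quiz_score"] < THRESHOLDS["critical_quiz_score"]:
        actions.append("Trigger targeted refreshers and local follow-up")
    return actions


# Example snapshot for one location (made-up numbers).
snapshot = {"drop_off_rate": 0.42, "ramp_days": 26, "critical_quiz_score": 0.71}
actions = review_actions(snapshot)
print(actions)
```

Keeping the rules this explicit makes it easier to argue about the thresholds themselves, which is usually where the useful conversation is.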

The AI Advantage: How Automation Accelerates Learning

AI matters in L&D because it removes delay.

In many organizations, the hardest part of training isn't identifying what people need to learn. It's converting raw operational knowledge into something structured, current, and usable. A policy changes. A process is revised. A new location opens. A product update rolls out. Then somebody has to rebuild content, format assessments, assign learners, and track whether the material landed.

That is where automation changes the pace of execution.

A franchise onboarding scenario

A franchise group opens several new locations close together. Every site needs the same core onboarding. Brand standards, service flow, point-of-sale procedures, health and safety basics, escalation steps.

The old way is familiar. Someone assembles slide decks, copies old modules, edits PDFs, and sends managers a checklist. Each location improvises a little. The result is variation.

A more modern system starts with the source documents. Operations manuals, opening checklists, customer handling scripts, and role expectations are turned into modular learning assets. New hires receive role-based pathways instead of a generic stack of files. Managers see where people are ready and where they still need support.

That kind of speed matters in Canada, especially for smaller businesses. A 2025 Statistics Canada report shows 62% of Canadian SMBs struggle with training due to time constraints. The same cited analysis notes that AI automation can cut onboarding time by up to 80% by instantly creating courses from existing documents, as referenced in McKinsey's article on harnessing the power of learning.

A regulated industry scenario

Now take a regulated environment. A finance or healthcare team receives an updated policy document. The risk isn't just slow adoption. It's inconsistency.

Manual update cycles create exposure. One version gets revised. Another stays buried in an old module. Managers give slightly different interpretations. Employees complete training but still rely on outdated reference materials.

Automation helps by shortening the path from source update to learner-ready content. It also makes refreshers easier to push in smaller, more frequent bursts. That supports retention better than waiting for the next annual cycle.

What AI should handle and what humans should keep

AI is strong at transformation, structure, and scale. It can help convert documents into courses, generate quizzes, organize learning paths, and support faster updates.

Humans still need to own the parts that require judgement:

Setting capability priorities

Approving high-risk content

Interpreting analytics

Coaching learners

Deciding what good performance looks like

That division of labour is healthy. L&D teams shouldn't spend their best hours formatting the same kind of training over and over. They should be working on design choices, stakeholder alignment, and business impact.

What works in practice

Organizations usually get the most value from AI automation when they start with repetitive, document-heavy workflows.

Examples include:

Onboarding built from existing manuals

Compliance refreshers based on policy updates

Role-specific microlearning from SOPs

Distributed training across franchise or field teams

Knowledge base conversion into searchable learning assets

What doesn't work is layering AI onto a weak content strategy. If source materials are contradictory, obsolete, or ownerless, automation will only scale the confusion faster.

The real advantage isn't that AI creates more training. It's that it helps teams create the right training faster and keep it aligned with changing work.

That is why AI is no longer optional in serious discussions about learning in organizations. It isn't replacing strategy. It's making strategy executable.

If your team is trying to scale onboarding, compliance, or role-based learning without adding more manual admin, Learniverse is worth evaluating. It turns existing PDFs, manuals, and web content into interactive training, supports automated learning paths, and gives L&D teams a cleaner way to deliver and update training at operational speed.