You’ve spent weeks building a critical compliance training programme. The content is polished, the videos are engaging, and launch day is close. Then the weak point appears. The assessment still looks like a pile of rushed quiz questions with obvious distractors, vague wording, and too many “all of the above” shortcuts.

That’s a problem because a bad multiple-choice test doesn’t just weaken measurement. It teaches the wrong things. Learners start hunting for clues instead of applying judgement, and managers end up with completion data that says little about competence.

Strong multiple-choice item examples do something different. They force a learner to discriminate between plausible options, apply a rule in context, and show whether the training changed how they think. That’s where instructional design matters. Bloom’s Taxonomy helps you decide whether a question should test recall, application, analysis, or evaluation. Kirkpatrick’s model helps you decide whether the assessment should confirm understanding or support a broader performance conversation.

The practical side matters just as much as the theory. In California education systems, multiple-choice formats have long been used at scale because they support statistical analysis and item refinement. For example, statewide data from the California Standards Tests technical reporting showed that 68% of Math CST items fell within an item difficulty range of 0.40 to 0.70, a useful benchmark for balanced challenge in large-scale testing (Purdue chemistry education statistics reference). That principle carries over to workplace training. Good items sit in the zone where competent learners can succeed, but weak preparation still shows.
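To make that benchmark concrete, here is a minimal sketch of how a team might check its own items against the 0.40 to 0.70 band. It assumes item difficulty means the proportion of learners who answered the item correctly, which is the usual convention. The function names, the sample data, and the "too easy" and "too hard" labels are illustrative assumptions, not part of the CST reporting.

```python
from typing import Dict, List


def item_difficulty(responses: List[int]) -> float:
    """Proportion of learners who answered correctly (1 = correct, 0 = incorrect)."""
    if not responses:
        raise ValueError("need at least one response")
    return sum(responses) / len(responses)


def flag_items(results: Dict[str, List[int]],
               low: float = 0.40, high: float = 0.70) -> Dict[str, str]:
    """Label each item by where its difficulty falls relative to the band."""
    flags = {}
    for item_id, responses in results.items():
        p = item_difficulty(responses)
        if p < low:
            flags[item_id] = "too hard"
        elif p > high:
            flags[item_id] = "too easy"
        else:
            flags[item_id] = "in range"
    return flags


# Hypothetical data: ten learners per item.
sample = {
    "Q1": [1, 1, 1, 1, 1, 1, 1, 1, 1, 0],  # 9 of 10 correct
    "Q2": [1, 1, 1, 1, 1, 1, 0, 0, 0, 0],  # 6 of 10 correct
    "Q3": [1, 1, 0, 0, 0, 0, 0, 0, 0, 0],  # 2 of 10 correct
}
flags = flag_items(sample)
print(flags)
```

An item outside the band is not automatically a bad item. A must-know safety rule may be answered correctly by nearly everyone, and that can be exactly what you want.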

Below are eight practical multiple-choice item examples for modern training teams. Each one pairs a worked example with the design principle behind it, plus a template you can adapt in tools like Learniverse.

1. Best Practice for Knowledge Retention in Online Training

A familiar failure shows up a week after onboarding. Learners passed the quiz, signed off the module, and still cannot recall the incident-reporting steps when the task appears in real work. A retention-focused multiple choice item should test whether they can choose the practice pattern that supports recall over time.

Example item:

A training manager wants employees to retain incident-reporting steps beyond the first week of onboarding. Which approach is most likely to support long-term retention?

A. Deliver all training and testing in one extended session on day one

B. Spread short practice quizzes across later intervals and mix related topics

C. Remove quizzes to reduce friction and rely on a final sign-off

D. Repeat the same question format back-to-back in one sitting

Correct answer: B.

This item works because the learner must recognize a sound retention strategy in a realistic design decision. The question sits at the application level of Bloom’s Taxonomy and asks for judgment, not definition matching.

Why this item works

The distractors are believable because they reflect common design choices I still see in onboarding builds. Teams compress too much into day one, confuse repeated exposure with retrieval practice, or strip out checks because they want the course to feel faster. Good distractors should come from those real mistakes, because that is what separates a usable assessment item from a classroom trivia question.

The principle behind the item is straightforward. If the learning goal is retention, the item should ask learners to choose spacing, sequencing, or retrieval conditions that improve later recall. That creates a direct line between the question and the instructional design principle you want to measure.
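Spacing is easier to discuss with stakeholders when it is written down as dates. The sketch below turns a training day and a list of gaps into a quiz schedule. The function name and the gaps of 2, 7, and 21 days are placeholders chosen for illustration, not recommended intervals.

```python
from datetime import date, timedelta
from typing import List


def spaced_quiz_dates(training_day: date, gaps_in_days: List[int]) -> List[date]:
    """Return one quiz date per gap, each gap counted from the training day."""
    return [training_day + timedelta(days=gap) for gap in sorted(gaps_in_days)]


# Illustrative gaps only; choose intervals that fit your programme.
schedule = spaced_quiz_dates(date(2025, 3, 3), [2, 7, 21])
for quiz_date in schedule:
    print(quiz_date.isoformat())
```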

Kirkpatrick also helps here. This kind of question works best when the goal is to confirm learning, then support later transfer on the job. If the course only measures immediate completion, the team gets clean scores and weak evidence.

Practical rule: If a learner can answer right after training but misses a parallel version a week later, the item is measuring short-term recognition.

Template you can reuse

Use this structure for retention-focused multiple-choice items:

Start with a realistic performance problem: late recall, missed steps, or poor follow-through after onboarding

Ask for a design decision: timing, spacing, sequencing, or retrieval method

Write distractors from actual team habits: cram on day one, remove practice, repeat identical items in one sitting

Tie the correct answer to one principle: spaced retrieval, interleaving, or feedback timing

A simple prompt in Learniverse can speed up first drafts: generate four answer options for a retention question based on spaced retrieval, with one correct design choice and three plausible distractors based on common onboarding mistakes.

If you need a stronger starting point for the objective behind the item, use these examples of learning objectives. Clear objectives make retention questions easier to write and easier to defend during review.

If you’re mapping these questions into a broader learning strategy, Learniverse’s guide to assessment for learning is a useful framing reference. It helps you separate questions that reinforce learning from questions that certify completion.

2. Identifying Effective Learning Objectives in Course Design

Weak questions often come from weak objectives. If the objective says “understand the policy,” the item writer has no anchor. That’s when vague, trivia-style questions creep in.

Example item:

Which learning objective is best suited for a role-based onboarding assessment?

A. Understand the POS system

B. Learn how customer payments work

C. Complete standard POS transactions accurately in live scenarios

D. Know the sales workflow

Correct answer: C.

This item works because the learner must identify the objective that is observable and testable. The principle is basic, but the impact is not. If the objective is unclear, the assessment will be unclear too.

The design principle behind it

Bloom’s Taxonomy helps because it pushes you toward an action. “Understand” sounds fine in a meeting. It fails when you need to write a question that proves performance. “Complete,” “identify,” “select,” “classify,” and “evaluate” give you something you can assess.

The best distractors in this kind of item are the ones teams commonly approve by accident. “Learn the process” and “understand the policy” look harmless until you try to score them. Then you realise they don’t point to a measurable behaviour.

Here’s a simple pattern that holds up well in course design:

Vague objective: hard to assess, easy to overestimate

Behaviour-based objective: easier to map to scenarios, evidence, and pass criteria

Role-specific objective: easier to defend to managers and auditors

A better writing template

Use this formula when drafting objectives before you create quiz items:

Learner + action verb + task or condition + expected standard

That structure makes AI-generated assessment drafting more useful because the source objective is clear. If you feed weak outcomes into an AI tool, you’ll usually get polished but shallow questions back.
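A quick pre-check can catch vague objectives before they reach an item writer or an AI tool. This is a rough sketch that only looks at the opening verb. The list of vague verbs is an assumption drawn from the examples in this section, and a real review still needs a person to confirm the task, condition, and standard.

```python
# Verbs treated as unassessable; an assumed list based on the examples above.
VAGUE_VERBS = {"understand", "know", "learn"}


def first_verb(objective: str) -> str:
    """Return the lower-cased first word, which is expected to be the action verb."""
    words = objective.strip().split()
    if not words:
        raise ValueError("objective is empty")
    return words[0].lower()


def is_assessable(objective: str) -> bool:
    """True when the objective does not open with a known vague verb."""
    return first_verb(objective) not in VAGUE_VERBS


objectives = [
    "Understand the POS system",
    "Learn how customer payments work",
    "Complete standard POS transactions accurately in live scenarios",
    "Know the sales workflow",
]
for text in objectives:
    status = "ok" if is_assessable(text) else "rewrite"
    print(f"{status:8} {text}")
```

The check is deliberately crude. An objective that starts with a strong verb can still lack a condition or a standard, so treat a pass as "worth reviewing", not "approved".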

A practical reference point is Learniverse’s collection of examples of learning objectives. It’s a good shortcut when you need to turn broad training goals into assessable statements before building your quiz bank.

The fastest way to improve your assessment isn’t rewriting distractors. It’s rewriting the objective the distractors came from.

3. Choosing Appropriate Assessment Methods for Compliance Training

Compliance teams often make one of two mistakes. They either treat every checkpoint like a final exam, or they make the final exam feel like a casual knowledge check. Both approaches create trouble.

Example item:

A healthcare employer needs evidence that staff can complete required training and also needs defensible documentation for certification review. Which assessment approach fits best?

A. One ungraded quiz at the end of the module

B. Discussion prompts only, with no scored assessment

C. Short formative checks during training plus a controlled final assessment

D. A learner self-attestation without item-level evidence

Correct answer: C.

This question works because it forces the learner to match assessment method to purpose. That’s classic instructional design. Formative checks help learning. Summative assessments support decisions, records, and audit readiness.

What the statistics tell you about defensibility

Assessment design matters even more when pass decisions carry weight. A review of MCQ scoring identified 21 distinct scoring methods across the literature, with per-item credit ranging from -3 to +1 points (PMC review of MCQ scoring methodologies). The practical implication is simple: scoring isn’t neutral. Both the number of options and the scoring logic change what a score earned by pure guessing looks like.

That matters in regulated environments because guessing can inflate scores if the format is too forgiving. A five-option item can be more defensible than a binary item when you need clearer separation between prepared and unprepared learners.
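The arithmetic behind that point is short enough to sketch. Assuming a learner guesses blindly and every option is equally likely, the expected score is the number of items divided by the number of options. The second function shows the classic correction-for-guessing rule (right answers minus wrong answers divided by options minus one). It is included as one familiar example of negative marking, not as the method any particular review recommends, and the function names are illustrative.

```python
def expected_chance_score(num_items: int, options_per_item: int) -> float:
    """Expected number correct from blind guessing with equally likely options."""
    if options_per_item < 2:
        raise ValueError("an item needs at least two options")
    return num_items / options_per_item


def corrected_score(right: int, wrong: int, options_per_item: int) -> float:
    """Correction for guessing: R - W / (k - 1). Omitted items are not penalised."""
    if options_per_item < 2:
        raise ValueError("an item needs at least two options")
    return right - wrong / (options_per_item - 1)


# A 20-item quiz under three formats.
for k in (2, 4, 5):
    print(f"{k} options: chance score = {expected_chance_score(20, k)}")

# 14 right and 6 wrong on four-option items.
print(corrected_score(14, 6, 4))
```

On a 20-item quiz, blind guessing is expected to yield 10 correct with true/false items but only 4 with five-option items, which is why the same pass mark means different things across formats.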

What works in practice

For most compliance programmes, use a layered structure instead of one high-stakes moment:

Formative checks: short, low-pressure questions after key concepts or scenarios

Summative evidence: a controlled final assessment tied to a policy, credential, or sign-off

Version control: alternate forms for retakes and periodic refreshers

A lot of teams also underestimate item format choice. True/false is fast, but it’s weak when nuance matters. Learniverse’s guide to true or false questions is worth reviewing if your current compliance library leans too heavily on binary items.

Good compliance assessments don’t just ask, “Did the learner finish?” They ask, “Would this record hold up if someone reviewed it later?”

4. Optimizing Mobile Learning for Field-Based Training Delivery

A field-based assessment has to survive real conditions. Noise, interruptions, weak signal, short attention windows, and one-handed use all change what counts as a good item.

Example item:

A franchise employee completes food safety training on a phone during short breaks and sometimes loses connection. Which assessment design is the strongest fit?

A. One long timed exam that requires constant internet access

B. Short quiz bursts with simple layouts and offline completion support

C. Dense text questions with multiple policy excerpts on screen

D. A desktop-only exam sent by email after the shift

Correct answer: B.

The principle here is context-fit. The item measures whether the learner can identify the delivery design that matches the environment. That sits at the application level and mirrors the decisions training teams make.

What mobile-first changes

Mobile assessments need cleaner stems, shorter option text, and tighter visual hierarchy. Long scenario questions aren’t always wrong, but they need trimming and careful chunking. If a learner has to scroll up and down to compare options, the item is doing extra cognitive work that has nothing to do with competence.

The same issue shows up in question formats. Matrix or grid structures can be efficient for desktop review, especially when teams want aggregated analytics across related topics. They’re useful because they create structured data that’s easier to analyse at scale and can help training managers assess multiple competency areas in a compact format (Checkbox overview of multiple-choice formats). On a small screen, though, they can become awkward fast unless the interface is designed carefully.

Mobile-friendly writing moves

Use these when building field-ready multiple-choice items:

Front-load the context: Put the scenario first so learners know what they’re solving.

Trim every option: If two options differ only in a long final clause, mobile users will miss it.

Design for interruption: Let learners resume without losing progress or context.

I also prefer writing the mobile version first when the workforce is largely distributed. If the question works on a phone, it usually works everywhere else. The reverse isn’t true.

5. Measuring Training Impact and ROI on Business Outcomes

A leadership team approves a training rollout, sees high completion rates, and asks six weeks later, “What changed in the business?” That is where weak assessment design gets exposed. If your multiple-choice items only check recall, they cannot help you defend training spend or improve the program.

Example item:

A training director wants to know whether a new onboarding programme is worth continuing. Which action best supports a credible ROI analysis?

A. Report completions only

B. Compare post-training results to a baseline and connect them to business measures

C. Ask managers whether they liked the training

D. Count how many modules were published this quarter

Correct answer: B.

This item works because it measures judgment, not terminology. Learners must separate activity metrics from outcome metrics and choose the evidence chain that would stand up in an operational review. That maps well to Kirkpatrick’s higher levels, where the question is not whether training happened, but whether performance changed and whether that change mattered to the business.

The design principle is straightforward. Good ROI questions ask learners to identify the metric that best matches the decision. That usually pushes the item above simple recall and into application or analysis on Bloom’s Taxonomy. In practice, these questions are harder to write because every distractor has to sound plausible to a stakeholder who is busy and metric-driven.

What useful impact measurement looks like

Use a baseline, a defined review period, and one business measure the audience already trusts. For onboarding, that might be time to proficiency. For service teams, it could be escalation rate, first-contact resolution, or rework. For compliance training, I usually look for indicators tied to operational risk, such as repeat errors, audit exceptions, or corrective actions.

A good item should reflect those trade-offs. Completion data is easy to collect, but it is a weak proxy for impact. Manager feedback adds context, but it is still perception. Business measures are slower to gather and messier to interpret, yet they are what leaders use when budgets get reviewed.
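The baseline comparison itself is simple arithmetic. Here is a minimal sketch using time to proficiency as the business measure. The figures are invented for illustration, and the function name is an assumption.

```python
def percent_change(baseline: float, post: float) -> float:
    """Percentage change from baseline to post-training value."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (post - baseline) * 100 / baseline


# Hypothetical figures: average days to proficiency for new hires.
baseline_days = 40.0   # cohort before the new onboarding programme
post_days = 32.0       # cohort after the new onboarding programme

change = percent_change(baseline_days, post_days)
print(f"Time to proficiency changed by {change:.1f}%")
```

A change against baseline shows that something moved. It does not show that training caused it, so pair the number with the review period and any other changes that happened in the same window.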

A reusable template for stronger ROI items

Use this structure:

Scenario: A team needs to decide whether a training program should continue, expand, or be revised.

Question: Which evidence would give the strongest basis for that decision?

Correct option: A comparison of pre- and post-training performance tied to a business result.

Distractors: Completion counts, satisfaction ratings, content volume, or other activity measures.

This is also a strong place to use AI carefully. In Learniverse, prompt for one business goal, one learner group, one valid outcome metric, and three distractors based on common reporting mistakes. Then review each option by hand. AI is useful for speed. It still needs an instructional designer to check whether the question measures real decision quality rather than surface familiarity with metrics.

For score review, simple descriptive statistics still help. If your team needs a quick refresher on distributions and score summaries, this explainer on mean, median, mode, and range is a useful companion.

Before you publish ROI-focused items, check three things:

Does the question ask for evidence of performance change, not training activity?

Is the correct answer tied to a business measure the organisation already uses?

Do the distractors reflect real reporting mistakes managers make?

That last check matters. A good multiple-choice item does more than test knowledge. It exposes whether the learner can spot weak evidence before it reaches an executive dashboard.

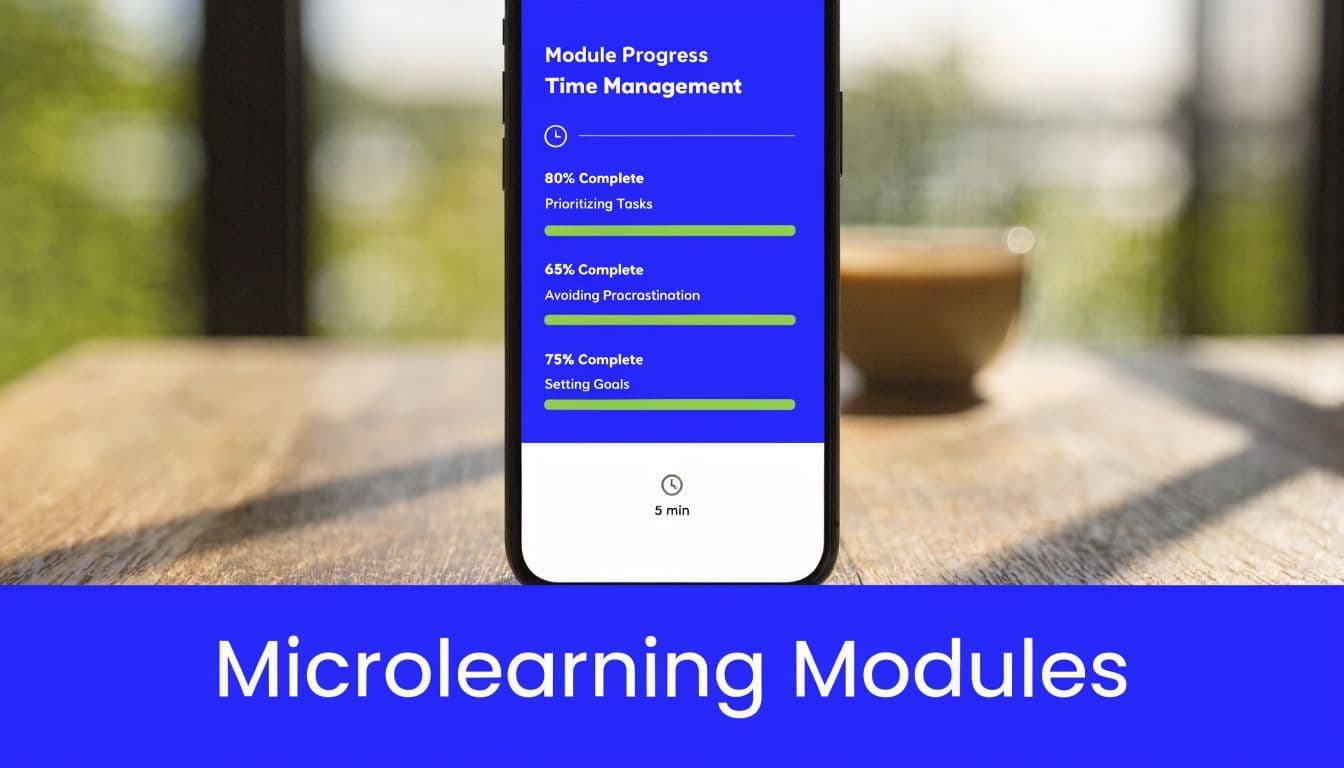

6. Designing Effective Microlearning for Busy Professionals

A sales manager has three minutes between calls and opens a training module on a phone. If the lesson asks for too much, the learner leaves with a vague impression instead of a usable decision rule. Good microlearning is narrow by design.

Example item:

Which design choice best fits a microlearning lesson for busy professionals?

A. Cover several unrelated objectives in one short module to save time

B. Focus one short module on one clear objective and one immediate job task

C. Remove practice questions to keep the module brief

D. Present all background theory before showing any scenario

Correct answer: B.

This item works because it tests scope control, not just preference. That maps to a core instructional design principle. If the learning objective sits at the application level of Bloom's Taxonomy, the module needs one action, one context, and one decision. Once designers squeeze in extra concepts, the assessment starts measuring memory load more than job readiness.

The trade-off is real. Stakeholders often want a five-minute module to cover policy, rationale, exceptions, and documentation steps at once. That request sounds efficient, but it usually weakens retention and lowers item quality. A better build gives each micro-module one job. Then the question can check whether the learner can act on that job in a realistic moment.

Why focused microlearning produces better multiple-choice items

Busy professionals need questions they can answer from practice, not from re-reading a condensed lecture. The strongest microlearning items usually include a short trigger, a job-relevant choice, and distractors based on common mistakes in the workflow.

That is also why deconstructing the item matters more than copying the format. The correct option works because it aligns objective, content, and task. The distractors work because they reflect familiar design errors. Too broad. No practice. Too much theory before action.

The lesson stays the same across different settings. Better assessment alignment produces better decisions.

A repeatable pattern for microlearning item design

Use this structure when building your own item bank:

Job moment: “A supervisor notices...”

Decision prompt: “What should they do next?”

Options: one best response, two common mistakes, one partially correct but incomplete choice

This pattern holds up on mobile because it is short without becoming superficial. It also gives you a clean template for AI-assisted drafting in Learniverse. Prompt for one learner role, one job task, one observable mistake, and one correct next step. Then review the output by hand to confirm that the item measures applied judgment rather than general familiarity.

Before publishing a microlearning question, check three things:

Does the item test one objective only?

Can the learner answer it from a realistic job context in under a minute?

Do the distractors reflect mistakes people make at work?

If those answers are yes, the module is more likely to do what microlearning is supposed to do. Support fast recall, fast decisions, and better performance on the job.

7. Ensuring Training Accessibility and Inclusive Design Compliance

Accessibility isn’t a post-production fix. If a multiple-choice item is hard to use, hard to read, or dependent on inaccessible media, the score no longer reflects knowledge alone.

Example item:

Which design choice most improves accessibility for a required online training assessment?

A. Put key information inside images without text alternatives

B. Rely on colour alone to distinguish answer states

C. Support keyboard navigation, readable contrast, and text alternatives

D. Use small text to keep more content on screen

Correct answer: C.

This item works because it asks the learner to select a design decision, not recite a standard. For most workplace settings, that’s the right level. You want practical compliance behaviour, not jargon recognition.

A lot of accessibility failures in quizzes come from ordinary production habits. Designers paste screenshots of policy charts instead of writing readable text. Video-based questions lack captions. Answer feedback appears only through colour changes. None of that looks dramatic in a build review, but it changes who can succeed.

Here’s a useful design reminder before publishing any quiz:

Accessibility work improves measurement quality because it removes barriers unrelated to competence.

To support teams that need a visual primer, this short accessibility video is a good refresher before final QA.

What to audit inside the item itself

Don’t stop at the course shell. Audit the question components:

Stem readability: Plain language, clear task, no unnecessary complexity

Option formatting: Consistent length and structure where possible

Interaction access: Full keyboard support and visible focus states

If a learner can’t perceive or operate the question cleanly, your result is compromised before scoring even starts.
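"Readable contrast" can be checked with a number instead of a judgement call. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio, and uses the 4.5:1 threshold that WCAG level AA sets for normal-size text. The function names and sample colours are illustrative, and this covers only one of the audit points above.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def _linear(channel: int) -> float:
    """Convert one 0-255 sRGB channel to its linear value (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(colour: RGB) -> float:
    r, g, b = (_linear(v) for v in colour)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """Ratio of the lighter to the darker luminance, each offset by 0.05."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: RGB, background: RGB) -> bool:
    """WCAG AA threshold for normal-size text is 4.5:1."""
    return contrast_ratio(foreground, background) >= 4.5


white = (255, 255, 255)
print(passes_aa((0, 0, 0), white))        # black option text on white
print(passes_aa((170, 170, 170), white))  # light grey option text on white
```

Light grey answer text on a white card is a common quiz styling choice, and it falls well short of the threshold.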

8. Leveraging AI and Automation for Rapid Course Development

A team uploads a policy pack into an AI course builder at 4 p.m. By 4:10, they have 30 quiz questions, and half of them look usable. By the next review cycle, the substantive work begins. Two answer options say nearly the same thing, one stem tests recall when the objective calls for application, and a distractor introduces language the policy never used.

That is the practical tension with AI in course development. It speeds up production, but it does not remove the need for instructional judgement.

Example item:

A training manager uploads policy documents into an AI course builder to produce quiz items. What is the best next step?

A. Publish the generated questions immediately because AI is faster than review

B. Ask subject matter experts to review objectives, scenarios, and distractors before launch

C. Keep every generated distractor, even if options overlap

D. Skip performance data review after launch because the initial draft is complete

Correct answer: B.

This item works because it measures decision-making inside a real workflow. It is not asking whether the learner likes AI. It checks whether the learner can apply a sound development process. That puts the question closer to Bloom's application level, which is usually the right target for course owners, training managers, and reviewers making production decisions under time pressure.

Why this item works

The stem gives a realistic trigger. The options reflect common production mistakes. Immediate publishing rewards speed over quality. Keeping weak distractors creates noisy measurement. Skipping post-launch review ignores item performance data.

The correct answer points to the actual control point in AI-assisted design. Human review protects alignment, accuracy, and validity. In practice, that is where I see teams recover the most value from AI. They save time on drafting, then spend expert time where it matters most.

Where AI helps, and where review still matters

AI is good at first drafts. It can turn source content into item stems, rewrite questions for different reading levels, generate parallel versions, and convert a single policy into role-specific scenarios.

It is less reliable at three things that directly affect assessment quality:

Objective alignment: A polished question can still measure the wrong cognitive skill.

Distractor quality: Weak options make the item easier without improving learning.

Policy nuance: AI often smooths over exceptions, edge cases, or conditional language that matters in regulated or high-risk contexts.

That trade-off matters in rapid course builds. Speed is useful only if the final item still measures the intended competency.

A practical workflow for AI-assisted quiz creation

Use AI to reduce drafting time, then apply review in a fixed sequence:

Start with a clear objective. State the behaviour the learner must demonstrate before generating any item.

Feed the tool clean source material. Remove duplicates, outdated policies, and conflicting references.

Generate multiple versions per objective. Variation gives reviewers better options than editing one weak draft.

Review for instructional quality. Check the stem, cognitive level, distractor plausibility, and terminology.

Validate with SMEs. Confirm that scenarios and options reflect actual policy and real job decisions.

Check performance after launch. Revise items with poor discrimination, unusual option patterns, or avoidable confusion.
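For the last step, one common way to quantify discrimination is the upper-lower index: the proportion of high scorers who got the item right minus the proportion of low scorers who did. The sketch below assumes you can export each learner's total score and whether they answered the item correctly. The 27% group size is a widely used convention, and the function name and data are illustrative.

```python
from typing import List, Tuple


def discrimination_index(records: List[Tuple[float, int]],
                         fraction: float = 0.27) -> float:
    """Upper-lower discrimination index for one item.

    records holds one (total_score, item_correct) pair per learner,
    where item_correct is 1 or 0.
    """
    if not records:
        raise ValueError("need at least one learner record")
    if not 0 < fraction <= 0.5:
        raise ValueError("fraction must be in (0, 0.5]")
    ranked = sorted(records, key=lambda r: r[0], reverse=True)
    group_size = max(1, round(len(ranked) * fraction))
    upper = ranked[:group_size]
    lower = ranked[-group_size:]
    p_upper = sum(correct for _, correct in upper) / group_size
    p_lower = sum(correct for _, correct in lower) / group_size
    return p_upper - p_lower


# Hypothetical export: ten learners, total quiz score and result on one item.
records = [(18, 1), (17, 1), (16, 1), (14, 1), (13, 0),
           (12, 1), (11, 0), (9, 0), (8, 1), (6, 0)]
d = discrimination_index(records)
print(round(d, 2))
```

A value near zero or below means strong and weak performers are answering the item about equally well, which is usually a sign the item needs review.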

Learniverse fits naturally into that workflow because it can convert source materials into course and quiz drafts quickly, giving teams a starting point for review instead of a blank page. Used this way, AI shortens development time while keeping instructional control with the people responsible for quality.

Multiple-Choice Item Examples: 8-Topic Comparison

| Item | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
| --- | --- | --- | --- | --- | --- |
| Best Practice for Knowledge Retention (Spaced Repetition) | Moderate–High: scheduling, sequencing, analytics required 🔄 | LMS with scheduling/analytics, AI for automation, sustained learner engagement ⚡ | Sustained long-term retention; higher pass and retention rates 📊 | Onboarding, compliance, multi-week software training 💡 | Improves retention and reduces remedial training; strong ROI ⭐ |
| Identifying Effective Learning Objectives (Bloom's Taxonomy) | Low–Moderate: upfront design time to craft measurable objectives 🔄 | SME time, instructional designers, minimal technical tools ⚡ | Clear assessment alignment and measurable competency gains 📊 | Course design, AI content generation, KPI-mapped programs 💡 | Enables precise alignment and better analytics; improves credibility ⭐ |
| Choosing Assessment Methods for Compliance Training | High: combines formative + summative + proctoring and audit trails 🔄 | Advanced LMS, proctoring, secure data storage, assessment dev resources ⚡ | Regulatory defensibility, documented competency, improved learning outcomes 📊 | Regulated industries (pharma, finance, healthcare), certification programs 💡 | Ensures compliance and reduces organizational risk; audit-ready evidence ⭐ |
| Optimizing Mobile Learning for Field-Based Delivery | Moderate: offline sync, microcontent, UX for small screens 🔄 | Mobile development, testing in low-bandwidth environments, content chunking ⚡ | Higher accessibility and completion rates; just‑in‑time learning 📊 | Field workers, franchises, retail, logistics, on-shift training 💡 | Increases accessibility and engagement for distributed workforces ⭐ |
| Measuring Training Impact & ROI on Business Outcomes | High: data collection, attribution, cross-system analytics 🔄 | Data infrastructure, analytics expertise, baseline and KPI tracking ⚡ | Behavior change and business metric improvements (revenue, safety, retention) 📊 | Executive reporting, budget justification, program optimization 💡 | Demonstrates training value to C‑suite; informs investment decisions ⭐ |
| Designing Effective Microlearning for Busy Professionals | Moderate: careful chunking and sequencing; potential sequencing complexity 🔄 | Content design resources, LMS supporting short modules and tracking ⚡ | Much higher completion and focused retention; flexible access 📊 | Busy professionals, just‑in‑time skill refreshers, short onboarding tasks 💡 | Boosts completion and retention; fits realistic schedules and mobile use ⭐ |
| Ensuring Training Accessibility & Inclusive Design Compliance | Moderate–High: WCAG/ADA compliance, testing with users 🔄 | Accessibility expertise, assistive-technology testing, captioning/transcripts tools ⚡ | Legal compliance, broader learner reach, improved UX for all 📊 | Public-facing training, regulated orgs, diversity & inclusion initiatives 💡 | Reduces legal risk and demonstrates commitment to inclusion ⭐ |
| Leveraging AI & Automation for Rapid Course Development | Low–Moderate: platform setup plus SME review and QA 🔄 | AI platform, well-organized source materials, SME review capacity ⚡ | Rapid course generation, scalable updates, lower development time/cost 📊 | Large content migrations, fast-growing orgs, frequent updates 💡 | Dramatically reduces time-to-launch and scales training cost-effectively ⭐ |

From Question to Competency: Your Assessment Blueprint

The difference between weak and effective multiple-choice items usually isn’t the writing style. It’s the design logic underneath. Good questions start with a precise objective, match the training context, and make one meaningful distinction between competent and non-competent performance. Bad questions tend to do the opposite. They test wording tricks, reward pattern spotting, or measure recall that disappears as soon as the session ends.

That’s why the strongest multiple-choice item examples aren’t just finished questions. They’re models of decision-making. A retention item should reflect retrieval over time. A compliance item should match the level of evidence the organisation needs. A mobile item should respect the environment where people will take it. An ROI item should point the learner toward baselines, business measures, and outcome analysis rather than vanity metrics.

The statistical side matters too, but only when it supports better design. Item difficulty, score spread, reliability, and scoring logic all help you improve a question bank when you use them to make practical decisions. They help you spot items that are too easy, too ambiguous, or too dependent on chance. They also help you justify why one assessment format is more defensible than another.

That’s where many teams improve quickly. They stop asking, “How do we write fifty questions fast?” and start asking better questions first. What behaviour are we trying to confirm? What kind of error would a weak performer make? Which distractor represents a believable misconception rather than a silly throwaway? Once those decisions are clear, item writing gets much easier.

I’d also treat assessment review as an ongoing design habit, not a one-time publishing task. Look at response patterns. Check whether your top performers and struggling learners are separating in ways that make sense. Remove items that are technically correct but instructionally weak. Replace distractors that nobody selects. Tighten stems that confuse competent learners for the wrong reasons.
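Spotting distractors that nobody selects takes only a tally of responses per option. This is a minimal sketch that assumes you can export each learner's chosen letter for an item. The function names and the response data are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Sequence


def option_counts(choices: Sequence[str],
                  options: Sequence[str] = ("A", "B", "C", "D")) -> Dict[str, int]:
    """Count how many learners picked each option, including options with zero picks."""
    counts = Counter(choices)
    return {opt: counts.get(opt, 0) for opt in options}


def unused_distractors(choices: Sequence[str], correct: str,
                       options: Sequence[str] = ("A", "B", "C", "D")) -> List[str]:
    """Return incorrect options that no learner selected."""
    counts = option_counts(choices, options)
    return [opt for opt, n in counts.items() if opt != correct and n == 0]


# Hypothetical export for one item whose correct answer is B.
choices = list("BBBABBCBBA")
print(option_counts(choices))
print(unused_distractors(choices, correct="B"))
```

With small cohorts, an unselected option may just be noise, so look at the pattern across several deliveries before rewriting the distractor.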

If you’re running formal testing or controlled certification, practical delivery features matter too. Secure conditions, timing controls, retake logic, and invigilation options all affect how credible the result feels. This overview of exam mode features is useful if you’re comparing what your current platform should support for higher-stakes assessments.

The broader lesson is simple. Start with the outcome, then work backwards. If the outcome is job performance, your question should look like a decision. If the outcome is compliance evidence, your assessment structure should support documentation. If the outcome is retention, your item schedule matters almost as much as the item wording.

Learniverse is one practical option for teams that want to move faster on that process. It can generate course and quiz drafts from source materials, which helps reduce manual build work. The value still comes from the review process. AI speeds up production, but instructional design is what makes the assessment worth trusting.

Your next assessment doesn’t need more filler questions. It needs better evidence.

If you want to turn manuals, policies, and onboarding materials into usable quiz drafts faster, Learniverse is worth exploring. It helps training teams generate courses, multiple-choice assessments, and learning paths from existing content so they can spend more time reviewing quality, improving items, and connecting training to real performance.