Fast-growing companies usually don't struggle because they lack training content. They struggle because they ship training too fast, with too many hidden inconsistencies.

One onboarding module uses the old policy language. Another has quiz answers that don't match the lesson. A compliance course looks polished, but half the links are broken on mobile. Managers start sending side notes in Slack to “clarify” the course, which defeats the point of having a formal programme in the first place. Learners notice. They may not say “your quality framework is weak,” but they feel the friction immediately.

That's why the debate around quality control vs quality assurance matters so much in eLearning. In a modern training function, especially one using AI to generate courses at speed, quality isn't a final review task. It has to be built into the system, then checked with discipline before release.

The Hidden Cost of Inconsistent Training Quality

A familiar pattern shows up in growing teams. The business needs training live by Friday. Subject matter experts send rough notes on Tuesday. An instructional designer builds quickly. Someone gives it a quick look. The course launches, and the problems appear one learner at a time.

The visible issues are obvious enough. Wrong screenshots, confusing quiz wording, duplicate slides, inconsistent terminology. The less visible costs are usually worse. Managers lose confidence in the training team. Learners stop trusting course instructions. Compliance owners start asking for manual sign-off on every module because the process no longer feels reliable.

Where poor quality starts to bite

When teams rely only on end-stage inspection, they spend too much time fixing output that should've been prevented earlier. Firms that implemented proactive QA processes, such as statistical process control (SPC) integrated into ISO 9001 systems, achieved a 42% lower defect rate than firms relying only on reactive QC inspections, according to 6Sigma's review of quality assurance vs quality control. For eLearning, the same source estimates rework costs at 15-20% of training budgets in SMBs, which is the spend that proactive QA is meant to minimise.

That rework doesn't only sit with the training team. Operations leaders rewrite job aids. HR answers repeat questions. Compliance officers request revisions after launch. A rushed course can create more work across the business than the original build saved.

Practical rule: If managers keep explaining a course after launch, the training asset is not finished. It is still in rework.

Many teams diagnose this as a content problem. It is usually a system problem. The fix starts before development, with clearer standards, better review gates, and a more disciplined understanding of what the training needs to achieve. A proper training needs assessment process often reveals that quality issues begin long before a module is built.

Defining QA and QC in Corporate Training

The simplest way to separate the two is this:

Quality assurance (QA) prevents defects by controlling the process.

Quality control (QC) finds defects by inspecting the finished output.

In corporate training, that distinction matters because course quality isn't produced by one final reviewer. It's shaped by dozens of earlier decisions, including source selection, template use, review timing, approval rules, accessibility standards, and version control.

Think blueprint versus final inspection

If you were building a house, QA would be the blueprint, materials standard, site process, and inspection schedule built into the project from day one. QC would be the final walk-through where someone checks whether the doors close, the wiring works, and the finish matches the spec.

Training works the same way.

QA includes the rules that shape how a course gets made. That might mean a style guide for tone, a required review by a compliance lead, a standard lesson structure for onboarding, or a documented change-control process when policy content changes. QA is proactive. It asks, “How do we stop defects from entering the course in the first place?”

QC happens later. It checks whether the finished module meets the standard. That means testing links, verifying quiz accuracy, reviewing screen flow, checking brand consistency, confirming accessibility elements, and making sure the lesson aligns with the approved source material.

What each one looks like in practice

A training team using QA well usually has:

Documented build standards that define structure, naming, formatting, and review rules

Template discipline so every microlearning lesson, quiz, or policy module follows a repeatable pattern

Role clarity around who approves source content, who edits for learning quality, and who signs off before release

A team using QC well usually has:

Release checklists for content, design, functionality, and compliance checks

Review evidence such as issue logs, test notes, and approval records

A correction loop so every issue found becomes input for improving the next build

QA asks whether your process is strong enough to produce a good course consistently. QC asks whether this specific course is good enough to release.

When people confuse the two, they usually overinvest in checking and underinvest in prevention. That creates a tired pattern: launch fast, spot problems, patch them, repeat. It feels productive because people are busy, but it doesn't create reliable training at scale.

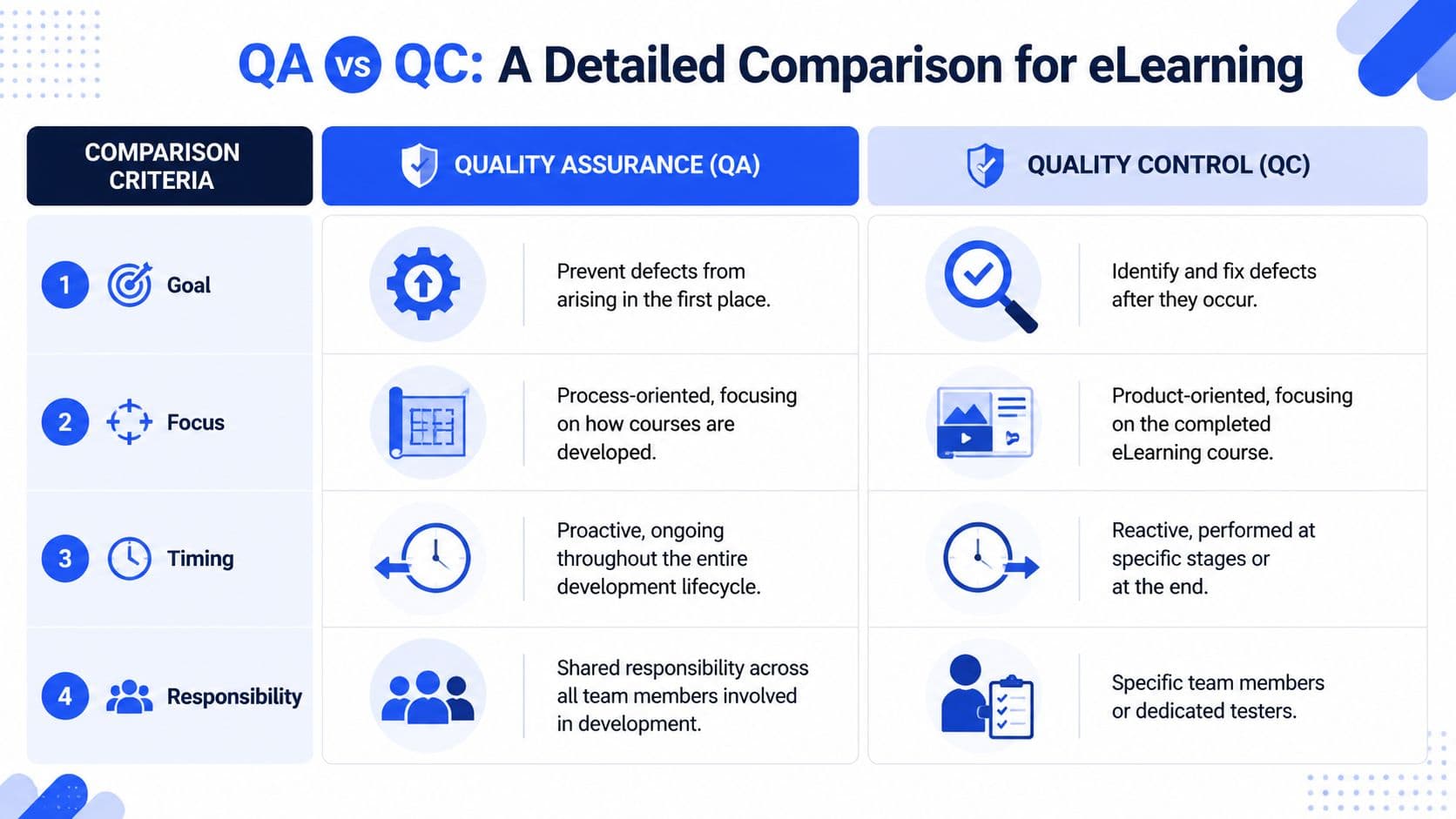

QA vs QC: A Detailed Comparison for eLearning

When people search for quality control vs quality assurance, they often want a clean winner. There isn't one. In eLearning, each serves a different job. Remove QA and your team keeps producing avoidable errors. Remove QC and those errors reach learners.

| Criteria | Quality Assurance (QA) | Quality Control (QC) |
| --- | --- | --- |
| Goal | Prevent defects before they happen | Identify and fix defects after they appear |
| Focus | The development process | The finished course or module |
| Timing | Ongoing through the full lifecycle | At defined checkpoints or near release |
| Responsibility | Shared across SMEs, designers, reviewers, and managers | Usually handled by reviewers, testers, or approvers |
| Typical eLearning example | Standardised templates, style guides, change control, review workflows | Testing links, checking quiz accuracy, validating navigation, spotting content errors |

The difference in daily work

QA lives inside the workflow. It shapes how the team collects source material, how modules are structured, how reviews are sequenced, and how changes are approved. If a training team requires every course to start from an approved outline, follow a standard lesson pattern, and pass a peer review before stakeholder review, that's QA in action.

QC is narrower and more tactical. It happens when someone opens the course and checks whether it works. Are the questions answerable from the lesson? Do all buttons work? Does the completion rule fire correctly? Does the legal disclaimer match the current policy version?

One reason teams underuse QA is that QC feels more visible. A reviewer can point to a typo or a broken interaction and say, “I caught that.” QA is less dramatic. It shows up when the team never creates the typo-prone layout in the first place.

Responsibility is not the same

This is where many training functions get stuck.

QC can sit with a designated reviewer, an instructional designer doing final checks, or a compliance lead who signs off before launch. QA cannot sit with one person alone. If subject matter experts send outdated materials, if managers bypass templates, or if reviewers approve changes informally in email, the QA system breaks regardless of how strong the final checker is.

That's why QA usually needs cross-functional agreement, not just training team discipline.

Operational reality: QC can catch a bad module. QA is what stops your organisation from producing the same bad module next month.

Timing changes the economics

QC near launch is necessary, but it's also expensive in time and momentum. When a reviewer finds factual errors at the end, the team often has to reopen design files, re-record narration, update screenshots, retest navigation, and resubmit for approval. Small defects become scheduling problems.

QA moves that effort earlier. It asks for source validation before build, approved language before scripting, and template decisions before design. That front-loads some discipline, but it prevents the slow churn that burns capacity in fast-growth teams.

In eLearning, the best systems use both

The strongest training operations don't debate QA or QC. They make them complementary.

QA sets the rails. QC checks the train before passengers board.

If you only choose one, choose neither. You'll either build chaos efficiently or inspect defects after they've already cost you time.

Implementing Proactive Quality Assurance Processes

A useful QA system doesn't start with software. It starts with a few decisions the team agrees to follow every time.

The first decision is what “good” looks like. Most training teams talk about quality in broad terms, but broad terms don't prevent defects. Clear standards do. If your team can't describe the approved lesson structure, brand voice, source hierarchy, assessment rules, and review sequence, then quality is still based on personal judgement.

According to ASQ's overview of quality assurance and quality control, QA activities cover virtually all of the quality system. For corporate training teams, that's the right mindset. Quality should be embedded in documentation standards, change control, review rules, and personnel practices, not saved for the end. The same source notes that organisations combining QA with QC achieve a 15-20% reduction in training delivery errors.

Start with three core documents

You don't need a giant manual. You need a working set of standards your team will follow.

A course development style guide: Define tone, terminology, heading patterns, formatting rules, image treatment, accessibility expectations, and how to handle policy language. It allows you to settle recurring debates before they slow down production.

Standard templates for common module types: Build separate templates for onboarding, compliance refreshers, product training, and microlearning. Each should reflect the way that format works best, not a one-size-fits-all layout.

A documented review and approval path: Decide who reviews for factual accuracy, who reviews for learning quality, who owns legal or compliance approval, and what happens when a source document changes after sign-off.

Build QA into the workflow

A strong QA process usually includes several essential elements:

Approved source first: No one should build from draft notes, chat messages, or half-updated slides if the content is regulated or operationally important.

Change control second: If a manager changes a policy statement after design starts, the team needs a documented method to trace, approve, and update that change.

Peer review before stakeholder review: This prevents senior reviewers from wasting time on obvious issues the team should've caught internally.
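One way to make change control traceable is to record a fingerprint of the approved source at sign-off and compare it with the current source before release. The sketch below is a minimal illustration, not a prescribed method: the `SignOff` record, the module ID, the approver name, and the policy sentences are all hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class SignOff:
    """Hypothetical approval record: who approved which exact source text."""
    module_id: str
    approver: str
    source_sha256: str


def fingerprint(source_text: str) -> str:
    """SHA-256 of the source text, ignoring whitespace-only differences."""
    normalised = " ".join(source_text.split())
    return hashlib.sha256(normalised.encode("utf-8")).hexdigest()


def needs_reapproval(sign_off: SignOff, current_source: str) -> bool:
    """True when the source no longer matches what was signed off."""
    return fingerprint(current_source) != sign_off.source_sha256


# Illustrative data only
approved = "Employees must report incidents within 24 hours."
record = SignOff("policy-101", "compliance.lead", fingerprint(approved))

unchanged = "Employees must  report incidents within 24 hours.\n"
edited = "Employees must report incidents within 48 hours."

print(needs_reapproval(record, unchanged))  # False: only whitespace differs
print(needs_reapproval(record, edited))     # True: the policy wording changed
```

A check like this does not decide whether a change is acceptable. It only makes sure a changed source cannot slip past the approval it was given.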

What works and what fails

What works is boring in the best sense. Templates. Naming rules. Review stages. Controlled source files. Sign-off logs. These don't feel creative, but they protect creative work from preventable mistakes.

What fails is “flexibility” without guardrails. When every course gets built differently, quality becomes reviewer-dependent. That's manageable at ten modules. It breaks at scale.

Good QA reduces judgement calls. It doesn't remove expertise. It reserves expertise for the places where judgement actually matters.

Applying Tactical Quality Control Checks

QC is where the team proves that the finished learning asset is ready for real users. This isn't a casual preview. It's structured inspection.

In eLearning, QC usually needs to test more than content. A module can be factually correct and still fail because navigation breaks, interactions don't respond on mobile, or assessment logic marks the wrong answer as correct. AI-generated content adds another layer. It can produce fluent language that sounds right while still introducing subtle errors, weak distractors in quizzes, or summaries that overstate the source material.

According to SciLife's explanation of QA vs QC, QC in eLearning is the operational inspection and testing phase, using techniques such as inspection and statistical sampling. When QC finds issues in AI-generated courses, that should trigger corrective and preventive action (CAPA) investigations that feed improvements back into QA.

A practical QC checklist

A final course review should cover at least four categories.

Functional checks: Test buttons, menus, branching, completion rules, downloadable files, embedded media, and links on the devices your learners use.

Content checks: Verify that policy statements, process steps, product names, dates, and quiz answers match the approved source material. AI summaries need especially close review here.

Standards checks: Compare the finished module against your style guide, template rules, accessibility expectations, and approval requirements.

Learner experience checks: Review pacing, clarity, readability, screen density, and whether instructions make sense without a facilitator translating them.
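The four categories above can also be expressed as a simple release gate. This is a sketch under assumptions: the `CheckResult` structure, the category keys, and the sample checks are invented for illustration, and a real checklist would be much longer.

```python
from dataclasses import dataclass

# Category keys are an assumed naming of the four checklist areas
CATEGORIES = ("functional", "content", "standards", "learner_experience")


@dataclass
class CheckResult:
    category: str
    description: str
    passed: bool


def release_decision(results: list[CheckResult]) -> tuple[bool, list[str]]:
    """Return (ready, reasons); ready requires checks in every category and no failures."""
    reasons = []
    for category in CATEGORIES:
        in_category = [r for r in results if r.category == category]
        if not in_category:
            reasons.append(f"{category}: no checks recorded")
        for result in in_category:
            if not result.passed:
                reasons.append(f"{category}: failed '{result.description}'")
    return (not reasons, reasons)


# Illustrative review results for one module
results = [
    CheckResult("functional", "links open on mobile", True),
    CheckResult("content", "quiz answers match approved source", False),
    CheckResult("standards", "alt text present on images", True),
]

ready, reasons = release_decision(results)
print(ready)  # False
for reason in reasons:
    print(reason)
```

The gate blocks release both when a check fails and when a whole category was skipped, which is the more common gap in rushed reviews.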

Use test cases, not vague review requests

A lot of QC fails because reviewers are asked to “take a look” instead of following defined scenarios. Product teams learned this long ago. The same principle helps training teams. If you need a practical model for structuring repeatable review scenarios, Figr's guide to creating test cases for product teams is worth borrowing from.

That mindset works especially well when you're testing branching modules, certification flows, or role-based learning paths. Define the expected learner action, the expected system response, and the pass or fail condition.
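A test case in this sense can be written down as three fields plus a comparison. The sketch below assumes a hypothetical `ReviewCase` structure and made-up scenarios. The `observed` dictionary stands in for what a reviewer actually saw in the module.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewCase:
    """Hypothetical test case: one learner action and the response it should trigger."""
    name: str
    learner_action: str
    expected_response: str


def evaluate(case: ReviewCase, actual_response: str) -> str:
    """Pass only when the observed response matches the expected one exactly."""
    return "PASS" if actual_response == case.expected_response else "FAIL"


cases = [
    ReviewCase(
        "escalation branch",
        "select 'Report to manager' on incident scenario",
        "advance to escalation screen",
    ),
    ReviewCase(
        "pass mark enforced",
        "submit quiz with 3 of 5 correct",
        "show retry prompt",
    ),
]

# Stand-in for reviewer observations, keyed by learner action (illustrative only)
observed = {
    "select 'Report to manager' on incident scenario": "advance to escalation screen",
    "submit quiz with 3 of 5 correct": "mark module complete",
}

outcomes = {}
for case in cases:
    actual = observed.get(case.learner_action, "no response recorded")
    outcomes[case.name] = evaluate(case, actual)
    print(f"{outcomes[case.name]}: {case.name}")
```

Writing cases this way gives reviewers a fixed script to follow, and it leaves a record of exactly what was tested for each release.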

Close the loop after defects are found

QC isn't complete when someone logs an issue. It's complete when the team decides what that issue means for the system.

If the reviewer repeatedly finds broken references in AI-generated quizzes, the fix may not be “edit the quiz.” The fix may be “tighten source validation before generation” or “require a second reviewer for assessments derived from policy documents.” That's where QC stops being a gate and starts becoming useful management data.
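To show how an issue log might surface that kind of pattern, the sketch below counts defects by category and by the stage where they entered the process. The `Defect` fields, stage names, and sample entries are assumptions for illustration, not a standard schema.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Defect:
    """Hypothetical issue-log entry."""
    module_id: str
    category: str  # what was wrong
    origin: str    # process stage where the defect entered


def recurring_patterns(defects: list[Defect], threshold: int = 2):
    """Return (category, origin) pairs seen at least `threshold` times, most frequent first."""
    counts = Counter((d.category, d.origin) for d in defects)
    return [(pattern, n) for pattern, n in counts.most_common() if n >= threshold]


# Illustrative log entries
log = [
    Defect("onboarding-01", "broken reference", "ai quiz generation"),
    Defect("onboarding-02", "broken reference", "ai quiz generation"),
    Defect("compliance-07", "broken reference", "ai quiz generation"),
    Defect("product-03", "typo", "scripting"),
]

patterns = recurring_patterns(log)
for (category, origin), count in patterns:
    print(f"{count}x {category} from {origin}")
```

A one-off typo stays a one-off fix. A pattern that clears the threshold is a signal to change the process at the stage named in `origin`.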

For teams trying to formalise release checks, it also helps to connect QC findings with learner outcomes after launch. A platform that supports tracking learner progress can reveal whether defects you missed in review are showing up later as failed completions, repeat attempts, or drop-off inside specific modules.

How AI Redefines Quality Management in eLearning

AI changes the speed of training production, but the more important shift is what it does to quality operations.

Traditional models treat QA and QC as separate lanes. One group creates standards. Another group checks output. That still applies conceptually, but AI compresses the gap between the two. The same system that generates a lesson can also enforce formatting rules, compare content against a source document, flag structural inconsistencies, and identify missing elements before a human reviewer opens the file.

AI doesn't remove quality work. It reallocates it

This is the practical shift many teams miss. AI is excellent at repetitive pattern checks. It can review naming consistency, identify duplicate statements, flag missing alt text, check whether all sections follow the required template, and scan large batches of content faster than a manual reviewer ever could.
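Several of these pattern checks do not need a model at all and can be written as plain rules. The sketch below is a minimal example assuming a hypothetical lesson format (a dictionary with `sections` and `images`) and an assumed three-section template. It is not the behaviour of any particular authoring tool.

```python
from collections import Counter

# Assumed template: every lesson must contain these sections
REQUIRED_SECTIONS = ("objectives", "content", "quiz")


def check_lesson(lesson: dict) -> list[str]:
    """Return a list of issues: missing sections, missing alt text, duplicate statements."""
    issues = []
    sections = lesson.get("sections", {})

    for name in REQUIRED_SECTIONS:
        if name not in sections:
            issues.append(f"missing section: {name}")

    for image in lesson.get("images", []):
        if not (image.get("alt") or "").strip():
            issues.append(f"missing alt text: {image['file']}")

    # Naive sentence split on full stops; enough to catch verbatim repeats
    sentences = [
        s.strip().lower()
        for text in sections.values()
        for s in text.split(".")
        if s.strip()
    ]
    for sentence, count in Counter(sentences).items():
        if count > 1:
            issues.append(f"duplicate statement ({count}x): {sentence}")

    return issues


# Illustrative lesson data
lesson = {
    "sections": {
        "objectives": "Know when to escalate an incident.",
        "content": "Report incidents within 24 hours. Report incidents within 24 hours.",
    },
    "images": [
        {"file": "form.png", "alt": "Incident report form"},
        {"file": "flow.png", "alt": ""},
    ],
}

issues = check_lesson(lesson)
for issue in issues:
    print(issue)
```

Rules like these run in bulk across a catalogue. Whether the content is correct and teaches well remains a question for a human reviewer.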

That changes the role of the training leader. Instead of spending half the week on mechanical checks, the team can spend more time on judgement-heavy work such as learning design quality, policy interpretation, risk review, and stakeholder alignment.

A 2025 PwC California Workforce Study found that integrated AI-QA/QC can cut training content defects by 60%, while AI tools can automate up to 75% of manual QC tasks, according to MaintainX's discussion of quality control vs quality assurance. The implication for training teams is clear. The old boundary between prevention and inspection is getting thinner.

Where AI is most useful

AI tends to create the most value in three places:

Pre-build enforcement: It can apply standard structures, required sections, and consistent formatting before content reaches review.

Batch inspection: It can scan large numbers of modules for common defects that humans often miss through fatigue.

Feedback loops: It can surface recurring defect patterns so managers can improve the underlying process instead of fixing the same issue one course at a time.

The smart use of AI in quality management is not “trust the machine.” It's “automate the repeatable checks so humans can focus on risk, clarity, and learning quality.”

For training leaders, that means quality management becomes more continuous. Review no longer has to wait for a final draft. The system can support earlier interventions, faster corrections, and stronger consistency across a growing library. If you want a broader view of that shift, Learniverse has a useful overview of how AI is transforming corporate training.

Building Your Integrated Training Quality Framework

Most teams don't need a massive quality transformation. They need a framework they can start using this month.

The right model for most companies is simple: combine a lightweight QA foundation with disciplined QC checkpoints, then add AI where it removes repetitive work without weakening accountability. That gives you a system that can grow with the business instead of collapsing under volume.

Crawl

At the earliest stage, focus on control and visibility.

Create a release checklist for every module. Require one factual reviewer and one learner-experience reviewer. Log every issue found before launch. If your team does nothing else, this step alone will expose where defects originate.

Walk

Once the team has issue data, build the process that prevents repeat problems.

Implement a style guide. Standardise module templates. Define approval roles. Introduce source control rules so the team knows which document is authoritative. This is the stage where quality assurance starts doing its work: it reduces variation before the build begins.

Run

This is where an integrated model becomes powerful.

Use AI to apply structural rules during development, automate routine checks during review, and surface defect patterns across the whole catalogue. Keep human reviewers focused on judgement, risk, and instructional quality. The point isn't to replace quality professionals. It's to stop using them as expensive spellcheckers and broken-link testers.

The framework in one view

| Stage | Primary focus | Team habit |
| --- | --- | --- |
| Crawl | Basic QC discipline | Review every module with a standard checklist |
| Walk | Repeatable QA standards | Use templates, style rules, and formal approvals |
| Run | Integrated AI-supported quality | Automate routine checks and improve from defect patterns |

A framework like this works because it respects reality. Fast-growing companies need speed. They also need consistency. If you force a choice between the two, quality usually loses. If you build QA and QC into the same operating model, speed becomes less risky.

Build the system first. Then scale the content. Teams that do this in the opposite order usually spend the next year cleaning up preventable problems.

The strongest training departments don't treat quality as a project. They treat it as an operating discipline. That's what keeps onboarding clear, compliance defensible, and learning experiences trustworthy as the company grows.

If you're ready to turn quality from a manual scramble into a repeatable system, Learniverse helps teams build, deliver, and improve training with AI-powered course creation, structured learning workflows, and the analytics needed to keep standards high as content scales.