Effective sales training in the USA generates about $4.53 for every dollar invested, or 353% ROI, according to 2026 US sales training statistics. That number changes the conversation. Sales and training isn't a soft function. It's a revenue system.

New training managers often inherit the same pattern. The company hires aggressively, hands reps a slide deck, runs a kickoff session, then wonders why messaging is inconsistent, managers coach by instinct, and new hires take too long to contribute. The problem usually isn't effort. It's that the organisation treats training like an event instead of an operating discipline.

A strong sales and training programme does three things at once. It builds seller capability, reduces avoidable mistakes, and gives leaders a repeatable way to improve execution over time. Once you see training that way, budget decisions, curriculum design, reporting, and technology choices become much easier.

Why Most Sales and Training Programs Fail

Sales training programs usually break down at the point where information is supposed to become action.

A workshop can introduce a framework. It does not automatically help a rep ask sharper discovery questions in a live call, adjust a demo when a buyer pushes back, or stay disciplined during negotiation. Those abilities are built the same way athletes build muscle memory. Repetition matters. Feedback matters. Coaching matters. Review matters.

That is the first mistake. Companies treat training like a knowledge transfer exercise when performance improvement is the objective.

The second mistake is more subtle. Training often sits in the business as a support activity, so it gets managed like a calendar event instead of an operating system. A kickoff happens. Content gets uploaded. Attendance looks good. Then everyone goes back to work, and leaders assume the field will absorb the message on its own.

It rarely works that way.

Sales behavior follows the path of least resistance. If the old talk track feels easier, reps use the old talk track. If managers each coach from personal experience, teams develop five different versions of the same sales process. If nobody checks whether training shows up in calls, pipeline reviews, and deal progression, the program creates the appearance of motion without much change in execution.

Training gets judged by activity instead of adoption

New training managers often inherit reporting that focuses on inputs. How many people attended. How many modules were assigned. How many certifications were completed.

Those metrics have a place, but they do not answer the underlying question. Did the team start selling differently?

A stronger review asks three practical questions:

Are reps using the message consistently in live customer conversations?

Are managers coaching the same behaviors across teams?

Are the new habits showing up in pipeline quality, conversion points, and deal movement?

If the answer is unclear, the program probably has a design problem, not an effort problem.

One-off training creates the illusion of progress

A single session often feels successful because the room is attentive and the content is fresh. That feeling can mislead leadership.

Learning works like installing software. The first session is only the initial download. Practice, coaching, reinforcement, and inspection are the updates that make the system usable in the field. Without them, even strong content fades fast.

This is where many traditional sales training models fall short. They explain what good selling looks like, but they leave too much manual work between theory and field execution. Someone has to schedule refreshers, assign follow-up practice, track completion, remind managers to coach, and piece together evidence of impact from scattered systems.

Modern teams need a tighter loop. They need training that is designed for reinforcement from the start, then delivered and maintained through automation. Platforms such as Learniverse help training leaders turn curriculum into an active system by automating assignments, practice flows, reminders, and program updates at scale. That shift matters because consistency is usually the first thing to break when a program depends on manual follow-through.

A practical rule helps here. If training ends when the session ends, the program delivered content. It did not build capability.

The Foundation of Modern Sales Training

Sales training works like a practice system, not a presentation. A strong team needs shared habits, clear standards, and repeated rehearsal under realistic conditions. Sports offers a useful comparison here. A coach does not hand players a playbook, run one practice, and expect precise execution under pressure. The team studies film, drills specific situations, and gets feedback until the right response becomes repeatable.

Sales performance develops the same way.

Four layers every programme needs

A modern sales training system usually rests on four layers. New training managers often combine these into one broad programme, then wonder why reps still perform unevenly. The problem is not volume. It is structure.

Onboarding: This moves new hires from company orientation to field readiness. It should cover the market, ideal customer profile, product basics, tools, messaging, and live practice.

Core skill development: This strengthens the selling motions that show up across roles, such as prospecting, discovery, objection handling, demos, negotiation, and account planning.

Product and market knowledge: Reps need to know what the offer does, who should buy it, who should not, and how competitors change the buying conversation.

Methodology and process: This gives the team a common operating language. Managers can coach to it, and reps can use it to move deals with more consistency.

Each layer supports the others. Product knowledge without discovery skill leads to feature dumping. Strong prospecting without opportunity discipline creates pipeline that stalls later. Good methodology without current market context turns into rigid box-checking.

A useful test is simple. If you removed one layer, where would performance break first? That answer usually reveals the current gap.

Training culture changes how teams work every week

A training event lives on the calendar. A training culture shows up in operating rhythm.

You can see it in manager call reviews, in onboarding that includes role-play instead of reading only, in certification based on demonstrated skill, and in content updates that reflect new products, competitors, or objections. You can also see it in what the team expects. In healthy environments, coaching is part of the job, not a signal that someone is in trouble.

The difference becomes clearer when you look at daily behavior:

| Signal | Training event | Training culture |
|---|---|---|
| Cadence | Occasional sessions | Ongoing reinforcement |
| Ownership | L&D or enablement only | Shared by managers and revenue leaders |
| Content use | Consumed once | Reused in calls, coaching, and refreshers |
| Success check | Attendance and completion | Observable behaviour and business impact |

This distinction matters because many teams still build programmes as if content delivery were the finish line. It is only the starting point.

Delivery design matters as much as content design

Blended learning remains the practical model for sales because different skills require different formats. A negotiation workshop benefits from live discussion and feedback. Product positioning can be taught efficiently through short on-demand modules. A refresher on pricing changes works better as mobile-friendly microlearning that reps can review between meetings.

The method should match the job to be done.

That sounds obvious, but many programmes still choose format based on convenience. If the LMS is easy to update, everything becomes e-learning. If the facilitator is strong in the room, everything becomes a workshop. Good curriculum design starts with the learning objective, then assigns the right delivery method.

This is also where modern implementation starts to separate from traditional theory. Older training models often stop at curriculum architecture. Modern teams also plan the delivery engine. They decide what should be automated, what requires manager observation, what needs spaced reinforcement, and what should trigger a refresher after a product change or missed certification. Platforms such as Learniverse help training teams put that operating model into practice by assigning paths, scheduling reinforcement, and keeping role-based learning current without constant manual coordination. If you need a concrete example of how that looks in practice, this sales training case study template can help you map programme design to rollout and results.

Modern teams organise training by role, motion, and risk

A shared curriculum still matters, but modern sales training adds precision. The goal is not to send every rep through the same path. The goal is to build the right capability for the work each role performs.

An SDR needs repeated practice in outbound messaging, call openings, and qualification discipline. An AE needs stronger discovery, demo control, commercial conversations, and deal strategy. A frontline manager needs coaching frameworks, inspection routines, and clear standards for call review. Teams in regulated industries also need tighter message control, documentation discipline, and policy-specific scenarios.

This is where AI becomes practically useful: not as a replacement for managers or trainers, but as a system for scaling good judgement. AI can sort reps into role-based paths, assign refreshers after assessment gaps, suggest practice based on call patterns, and reduce the admin load that usually slows programme execution. That closes a gap many training guides ignore. Designing a smart curriculum is one job. Launching it consistently across a growing team is another.

The foundation of modern sales training is a system that connects both. Clear learning design. Reinforcement built into the workflow. Automation that keeps the programme running after the kickoff session ends.
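To make "assign refreshers after assessment gaps" concrete, here is a deliberately simple rule-based sketch. It is a stand-in for the AI-driven assignment described above, not how any particular platform (Learniverse included) implements it. The role paths, module names, and the 0.7 pass threshold are all hypothetical.

```python
# Hypothetical role paths; module names are placeholders, not a real catalogue.
ROLE_PATHS = {
    "SDR": ["outbound-messaging", "call-openings", "qualification"],
    "AE": ["discovery", "demo-control", "commercial-conversations", "deal-strategy"],
    "Manager": ["coaching-frameworks", "inspection-routines", "call-review-standards"],
}
PASS_THRESHOLD = 0.7  # assumed cut-off below which a score counts as a gap


def assign(role: str, scores: dict[str, float]) -> dict[str, list[str]]:
    """Return the role's path plus refreshers for assessed modules scored below threshold.

    `scores` maps a module name to the rep's latest assessment score (0.0 to 1.0).
    Modules the rep has not been assessed on yet are never flagged.
    """
    path = ROLE_PATHS[role]
    refreshers = [m for m in path if m in scores and scores[m] < PASS_THRESHOLD]
    return {"path": path, "refreshers": refreshers}


plan = assign("AE", {"discovery": 0.55, "demo-control": 0.82, "deal-strategy": 0.64})
print(plan["refreshers"])
```

In practice the threshold and the trigger (a missed certification, a product change) would come from your own programme rules rather than a hard-coded constant.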

Building the Business Case for Sales Training Investment

Budget decisions rarely turn on whether training sounds useful. They turn on whether a leadership team can see a clear path from investment to better sales execution.

That is the shift to make in every budget conversation. Present sales training as an operating improvement with defined costs, expected behavior change, and business impact.

Start with the business problem leaders already feel

A CFO, CRO, or VP of Sales is usually reacting to friction in the revenue engine. New hires take too long to become productive. Pipeline quality varies by manager. Deals stall at the same stage. Compliance reviews create rework. Forecasts feel less reliable than they should.

Those are training problems only if you can trace them back to capability gaps. That is your job.

A useful test is simple. If reps performed a sales motion more consistently, would the business outcome improve? If the answer is yes, you have the beginning of a credible case.

Frame your request around one or two problems such as:

Slow ramp for new hires

Inconsistent manager coaching

Weak execution in a specific stage, such as discovery, qualification, or negotiation

Message drift in regulated or high-risk environments

No clear link between existing training and field performance

This approach changes the conversation. You are no longer asking for money to run courses. You are asking to reduce a known source of lost productivity or risk.

Build ROI like a simple operating model

New training managers often overcomplicate ROI. A better approach is to treat it like diagnosing a sales funnel. Start with where performance breaks, estimate the cost of that break, then show how training can improve the conversion point.

Use three parts.

| Input | What to estimate | Why it matters |
|---|---|---|
| Cost | Programme design, delivery time, manager coaching time, platform spend | Shows the full investment |
| Performance change | Improvement in the behaviour or sales outcome you are targeting | Connects training to execution |
| Business impact | Revenue gain, productivity improvement, or risk reduction | Helps leaders compare this investment with other priorities |

For onboarding, estimate the cost of reps reaching quota later than planned. For opportunity management, estimate the cost of poor qualification and stalled deals. For regulated selling, estimate the cost of avoidable errors, extra approvals, or delayed customer communication.

Precision matters less than logic. Leaders will accept assumptions they can inspect. They will reject a proposal that skips the link between training activity and business results.

Finance can work with estimated ranges. It cannot work with vague cause and effect.
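The three-part model can be sketched as a small calculation that produces a range rather than a single number. This is a minimal illustration, not a finance standard. It assumes ROI is expressed as (benefit - cost) / cost, which is also the arithmetic behind the introduction's figure: $4.53 returned per $1 invested gives (4.53 - 1) / 1 = 353%. Every cost and benefit figure below is a hypothetical placeholder.

```python
from dataclasses import dataclass


@dataclass
class Range:
    """A low/high estimate; finance can work with ranges."""
    low: float
    high: float


def roi_range(cost: float, benefit: Range) -> Range:
    """ROI as a fraction, (benefit - cost) / cost, at both ends of the benefit range."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return Range((benefit.low - cost) / cost, (benefit.high - cost) / cost)


# Cost inputs: every figure here is a hypothetical placeholder.
design_and_delivery = 40_000.0
manager_coaching_time = 15_000.0
platform_spend = 10_000.0
total_cost = design_and_delivery + manager_coaching_time + platform_spend

# Performance change to business impact (assumptions): 10 new hires each reach
# quota 1 to 2 months sooner, and one month of full productivity is worth 12,000.
new_hires = 10
value_per_month = 12_000.0
benefit = Range(new_hires * 1 * value_per_month, new_hires * 2 * value_per_month)

result = roi_range(total_cost, benefit)
print(f"Estimated ROI: {result.low:.0%} to {result.high:.0%}")
```

Because each assumption sits in a named variable, finance can inspect and challenge it directly, which is the point of the exercise.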

Show how evidence will be collected before asking for budget

Training loses credibility when success is defined after rollout. Set the scorecard early.

Start with leading indicators. These show whether the program is changing behavior before revenue results appear. Examples include certification scores tied to real selling tasks, manager adoption of call reviews, or rep performance in structured practice.

Then define business outcomes. These might include faster ramp, better stage conversion, fewer compliance corrections, stronger win rates in a target segment, or improved forecast accuracy. The right measure depends on the original problem statement.

This is also where modern implementation matters. Traditional training plans often stop at curriculum design. Modern teams need a launch system that assigns learning by role, automates reminders, tracks completion, and surfaces practice gaps without adding manual admin each week. Platforms such as Learniverse help connect the business case to execution because the same system used to design the program can also support rollout, reinforcement, and reporting at scale.

If you need a clean leadership format, adapt a training ROI case study template for executive reviews. It gives you a practical structure for linking the business problem, intervention, evidence, and results.

Position training as a multiplier across the revenue team

Sales training affects more than rep knowledge. It shapes manager coaching quality, onboarding speed, message consistency, and how reliably your sales process shows up in customer conversations.

That is why the business case should be broader than course completion. A good program improves how the whole sales system runs.

A useful analogy is this. Training is not a one-time event. It works more like maintenance on a production line. If the line is misaligned, every unit that comes through carries the same defect. If your sales behaviors are inconsistent, every new hire, every forecast call, and every late-stage deal carries that variance forward. Training investment fixes the process, not just the person.

That is the case leaders fund.

Designing Your High-Impact Sales Curriculum

A strong curriculum feels less like a course list and more like a staircase. Each step supports the next. If you ask reps to negotiate complex deals before they can run a disciplined discovery call, the curriculum is out of order.

That sequencing matters because adult learners don't retain much from passive exposure alone. They learn faster when the material is relevant, applied quickly, and reinforced over time.

Build from foundational moves to advanced judgement

Most new training managers make one of two mistakes. They either over-index on product information or they jump too quickly into advanced frameworks. Both create confusion.

Start with the moves every rep must execute reliably:

Positioning and messaging: Reps need clear language for the problem you solve, the buyer you serve, and the outcomes that matter.

Prospecting and opening conversations: This includes outreach quality, first-call structure, and how to earn the next meeting.

Discovery and qualification: If reps can't diagnose the buyer's situation, every later stage becomes harder.

Objection handling and commercial confidence: Teach reps how to respond without becoming defensive or generic.

Demo or solution conversation control: Product conversations should support the buyer's problem, not replace it.

Negotiation and deal strategy: These belong later because they rely on good work earlier in the cycle.
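The staircase idea maps neatly onto a prerequisite graph: each module lists what must come before it, and a topological sort yields a valid order. The sketch below uses Python's standard `graphlib` module (Python 3.9+). The dependency edges are a hypothetical reading of the sequence above, not a prescribed structure.

```python
from graphlib import TopologicalSorter

# Hypothetical prerequisites: each module maps to the modules that must come first.
prerequisites = {
    "positioning-and-messaging": set(),
    "prospecting": {"positioning-and-messaging"},
    "discovery-and-qualification": {"prospecting"},
    "objection-handling": {"discovery-and-qualification"},
    "demo-control": {"discovery-and-qualification"},
    "negotiation-and-deal-strategy": {"objection-handling", "demo-control"},
}

# static_order() yields every module after all of its prerequisites;
# it raises graphlib.CycleError if two modules depend on each other.
order = list(TopologicalSorter(prerequisites).static_order())
print(order)
```

Modules with no ordering constraint between them (here, objection handling and demo control) may appear in either order, which leaves room to sequence them by business priority.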

Modular design works better than giant programmes

A modular curriculum is easier to assign, update, and personalise. It also helps managers coach more specifically.

A useful structure looks like this:

Core modules: Required for everyone in the role

Role modules: SDR, AE, account manager, partner sales, customer success

Industry modules: Healthcare, fintech, franchise, manufacturing, or other verticals

Manager modules: Coaching, pipeline reviews, scorecards, feedback quality

Reinforcement modules: Short refreshers linked to real field situations
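The five module types can be combined into a per-person path with a few lines of code. This is an illustrative sketch only: the module names are placeholders, and the rule of adding one short refresher for each non-core module is an assumption, not a prescribed design.

```python
from typing import Optional

# Hypothetical catalogue grouped by the module types listed above.
CORE = ["positioning", "discovery-basics", "crm-hygiene"]
ROLE = {
    "SDR": ["outbound-sequences", "meeting-conversion"],
    "AE": ["demo-control", "deal-strategy"],
    "Account manager": ["expansion-plays", "renewal-risk"],
}
INDUSTRY = {"healthcare": ["healthcare-compliance"], "fintech": ["fintech-disclosures"]}
MANAGER = ["coaching-cadence", "pipeline-reviews", "scorecards"]


def build_path(role: str, industry: Optional[str] = None, is_manager: bool = False) -> list[str]:
    """Core modules first, then role, industry, and manager modules, then refreshers."""
    path = CORE + ROLE.get(role, [])  # new list, so CORE itself is never mutated
    if industry is not None:
        path += INDUSTRY.get(industry, [])
    if is_manager:
        path += MANAGER
    # Assumed reinforcement rule: one short refresher for each non-core module.
    refreshers = [f"{m}-refresher" for m in path if m not in CORE]
    return path + refreshers


print(build_path("AE", industry="fintech"))
```

Keeping each module type in its own collection is what makes the curriculum easy to update: changing a fintech module touches one list, not every learner's path.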

Industry modules are where many programmes gain precision. In California's regulated sectors, technical sales training is associated with a 35% reduction in compliance violation rates post-certification and a 24% increase in opportunity close rates, according to PMMI's certification information. That tells you curriculum design isn't only about selling skill. In some environments, it's also about risk control and execution discipline.

Tailor by role, not just by seniority

A beginner AE and a veteran AE may need different depth, but they still share a role. Design around role first because role determines the selling situations the person faces every day.

For example:

| Role | Core focus | Common curriculum mistake |
|---|---|---|
| SDR | Outreach, qualification, meeting conversion | Giving too much late-stage negotiation content |
| AE | Discovery, demos, objections, deal strategy | Overloading product details without enough practice |
| Account manager | Expansion, stakeholder mapping, renewal risk | Treating them like net-new hunters |
| Sales manager | Coaching, inspection, skill diagnosis | Assuming top reps automatically coach well |

One helpful reference point for role development is this guide to building a leadership training programme, especially if your sales managers are carrying too much of the reinforcement burden without formal coaching training.

Reinforcement should be designed in from day one

Don't treat reinforcement as an optional add-on. Build it into the curriculum itself.

Use short scenario reviews, call debriefs, peer practice, manager-led coaching prompts, and quick knowledge checks. The sequence matters. Learn, practise, apply, review, repeat.
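One common way to turn "review, repeat" into a calendar is a spaced-repetition schedule: follow-ups at expanding intervals after the first session. The sketch below assumes intervals of 2, 7, 21, and 45 days and cycles through four of the reinforcement formats named above; both choices are illustrative assumptions to tune, not research-backed settings.

```python
from datetime import date, timedelta

# Assumed expanding intervals, in days after the first session.
INTERVALS = (2, 7, 21, 45)
# Reinforcement formats from the text, cycled in order.
ACTIVITIES = ("scenario review", "call debrief", "peer practice", "knowledge check")


def reinforcement_plan(start: date, intervals=INTERVALS):
    """Return (date, activity) pairs, one per interval, cycling through ACTIVITIES."""
    return [
        (start + timedelta(days=days), ACTIVITIES[i % len(ACTIVITIES)])
        for i, days in enumerate(intervals)
    ]


for when, what in reinforcement_plan(date(2025, 3, 3)):
    print(when.isoformat(), what)
```

Generating the dates at enrolment time is what lets reinforcement run without someone remembering to schedule it.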


A curriculum is high impact when a manager can point to one module and say, "I know exactly what good looks like after this."

Keep content usable in the field

Training content should survive contact with reality. That means the materials need to be short enough to revisit, specific enough to coach against, and practical enough to use before a meeting.

If a rep can't review a framework quickly before a customer call, the content is probably too broad. If a manager can't score a role-play against it, the lesson is too vague. And if the curriculum doesn't reflect the actual objections, buying motions, and compliance boundaries your team faces, it isn't finished yet.

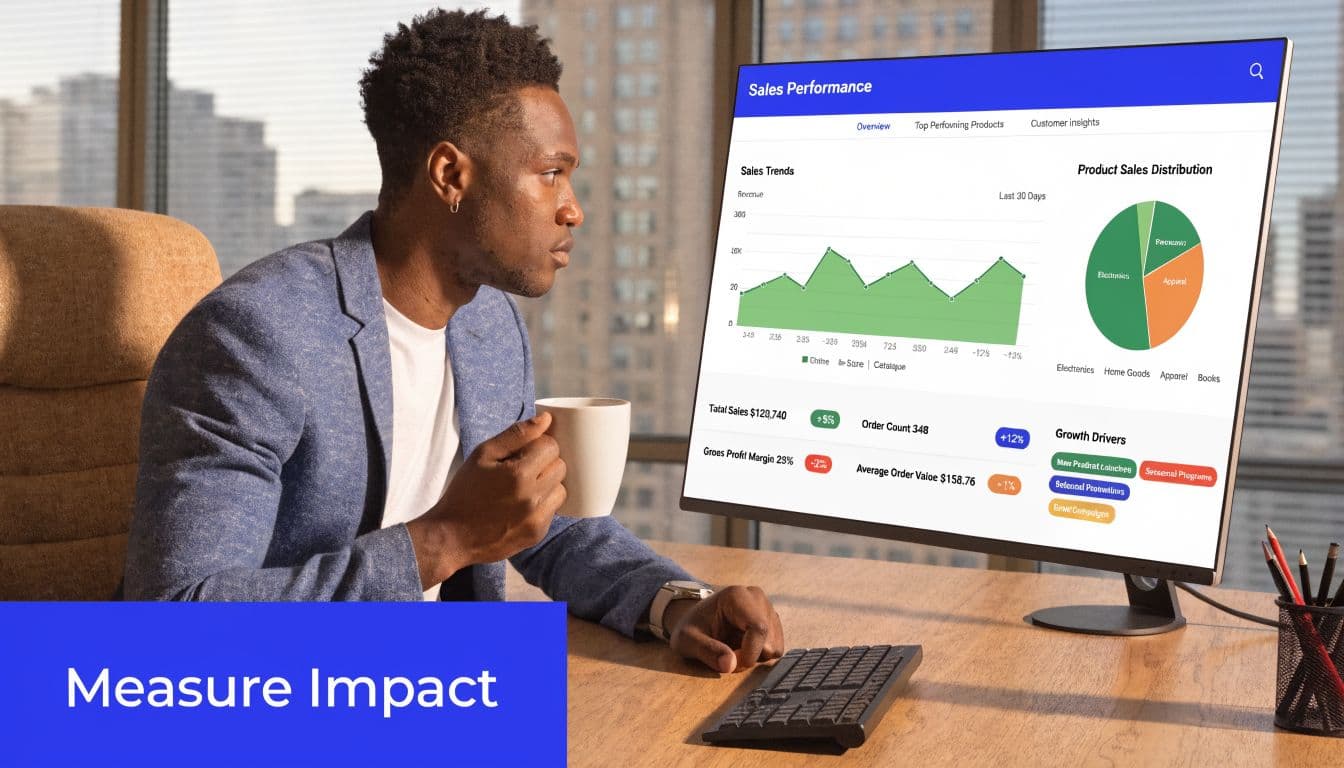

Measuring What Matters in Your Sales Program

Completion rates are tidy. They are also weak evidence.

A rep can finish every module and still run poor calls. That's why measurement in sales and training has to move from activity data to behaviour data and then to business outcomes. If you stop at attendance, you'll report effort instead of impact.

Use a layered model

The Kirkpatrick model is useful here because it keeps your dashboard honest. It asks four different questions, not one.

Reaction: Did learners find the training relevant and credible?

Learning: Did they understand the knowledge or skill?

Behaviour: Did they apply it in the field?

Results: Did business outcomes move in the intended direction?

Most programmes over-measure reaction and under-measure behaviour. That's understandable because behaviour takes more work to inspect. But that's where the key signal sits.

Build a dashboard with leading and lagging indicators

A practical dashboard should include both. Leading indicators tell you whether the programme is being adopted correctly. Lagging indicators tell you whether adoption is changing business performance.

| Layer | Stronger metrics | Weaker metrics |
|---|---|---|
| Learning | Assessment quality, scenario responses, certification performance | Completion alone |
| Behaviour | Call reviews, role-play scores, framework usage in CRM notes | Self-reported confidence only |
| Results | Quota attainment, deal progression, close outcomes | General satisfaction |
| Operations | Manager coaching consistency, reinforcement cadence | Number of files uploaded |

A good dashboard is small. If leaders need ten minutes to understand it, it has too many metrics.

Tie measurement to a specific training intervention

In California tech sales, effective programmes show a 27% uplift in quota attainment for trained participants compared with untrained reps, and AI-powered role-play simulations can reproduce buyer objections with 92% accuracy against real deal data, according to Highspot's 2026 training overview. The lesson isn't that every team will produce the same result. The lesson is that measurement becomes more convincing when it is linked to a specific intervention, such as role-play quality, objection handling, or manager coaching consistency.

That means your dashboard should answer a chain of cause and effect:

Did the rep complete the right training path?

Did the rep demonstrate the skill in practice?

Did the manager reinforce it in live coaching?

Did the sales outcome improve where that skill matters?
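The four-question chain can be sketched as a funnel over rep records. The record fields are hypothetical stand-ins for whatever your LMS, coaching notes, and CRM actually capture. Each step counts only reps who also passed every earlier step, so the count drops exactly where the chain breaks.

```python
from dataclasses import dataclass


@dataclass
class RepRecord:
    # Hypothetical fields, one per question in the chain.
    completed_path: bool
    demonstrated_skill: bool
    manager_reinforced: bool
    outcome_improved: bool


STEPS = ("completed_path", "demonstrated_skill", "manager_reinforced", "outcome_improved")


def chain_funnel(reps: list[RepRecord]) -> list[tuple[str, int]]:
    """Count reps who pass each step and every step before it."""
    counts = []
    remaining = list(reps)
    for step in STEPS:
        remaining = [r for r in remaining if getattr(r, step)]
        counts.append((step, len(remaining)))
    return counts


team = [
    RepRecord(True, True, True, True),
    RepRecord(True, True, False, False),
    RepRecord(True, False, False, False),
    RepRecord(False, False, False, False),
]
for step, n in chain_funnel(team):
    print(f"{step}: {n}/{len(team)}")
```

In this invented team, the largest drop sits between demonstrated skill and manager reinforcement, which would point attention at coaching rather than content.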

If you can't connect a metric to a decision, remove it from the dashboard.
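The 27% figure cited above is a relative uplift: the difference between the trained and untrained cohort averages, divided by the untrained average. A minimal sketch of that arithmetic, assuming you can pull quota attainment for a trained cohort and an untrained comparison group (the numbers below are invented):

```python
from statistics import mean


def relative_uplift(treated: list[float], control: list[float]) -> float:
    """(mean(treated) - mean(control)) / mean(control); 0.27 means a 27% uplift."""
    baseline = mean(control)
    if baseline == 0:
        raise ValueError("control mean must be non-zero")
    return (mean(treated) - baseline) / baseline


# Hypothetical quota attainment (1.0 = 100% of quota) for two small cohorts.
trained = [0.95, 1.10, 0.88, 1.02]
untrained = [0.80, 0.72, 0.85, 0.79]
print(f"Uplift in quota attainment: {relative_uplift(trained, untrained):.1%}")
```

A raw comparison like this does not by itself show that training caused the gap. Cohorts that differ in tenure, territory, or start date can produce an uplift too, which is another reason to tie the metric to a specific intervention.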

Review at the team level first

New managers often try to prove impact person by person. That's slow and messy. Start with team patterns.

Look for shifts in call quality, qualification discipline, stage conversion confidence, and manager coaching habits. Once those patterns stabilise, you can go deeper into segment, role, or cohort analysis.

That approach makes your reporting more useful because it guides action. Measurement isn't there to decorate a quarterly review. It's there to tell you which parts of the programme need to be strengthened, simplified, or retired.

Your AI-Powered Implementation Roadmap

Traditional sales training implementation is slow because teams often build everything by hand. They collect PDFs, clean up slide decks, rewrite documents into lesson plans, create quizzes from scratch, assign content manually, and chase managers for feedback. That work isn't strategic. It's administrative.

AI changes the operating model. It doesn't remove the need for subject matter expertise or judgement, but it can remove a large share of the setup burden.

Start with a content audit

Before you build anything, gather the assets your team already uses:

Existing training files: Decks, call scripts, onboarding guides, PDFs, policy documents

Operational content: CRM playbooks, messaging documents, product notes, FAQs

Manager materials: Coaching scorecards, role-play prompts, inspection checklists

Compliance references: Approved language, required disclaimers, process rules

Many leaders realise the problem isn't lack of content. It's that the content is fragmented, outdated, or trapped in formats nobody wants to maintain.
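A simple staleness check can make the audit concrete. This sketch assumes you have recorded a last-updated date for each asset; the asset names and the 180-day threshold are hypothetical.

```python
from datetime import date

# Hypothetical inventory rows: (asset, category, last_updated).
inventory = [
    ("Q1 pitch deck", "training file", date(2024, 1, 15)),
    ("Discovery call script", "training file", date(2025, 2, 1)),
    ("CRM playbook", "operational", date(2023, 11, 30)),
    ("Approved disclaimers", "compliance", date(2025, 1, 10)),
]

MAX_AGE_DAYS = 180  # assumed staleness threshold


def stale_assets(rows, as_of: date) -> list[tuple[str, str]]:
    """Return (asset, category) for every row last updated more than MAX_AGE_DAYS ago."""
    return [
        (name, category)
        for name, category, updated in rows
        if (as_of - updated).days > MAX_AGE_DAYS
    ]


print(stale_assets(inventory, as_of=date(2025, 3, 1)))
```

For compliance references, you may want a shorter threshold or a manual review regardless of age, since approved language can change at any time.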

Design role-based learning paths

California data highlights a key challenge here. Only 30% of sales roles in the state's tech hubs offer specialised pathways, even though customised training is important for advanced questioning and strategic account planning, according to ASLAN's discussion of role-specific sales training. That gap matters because generic training rarely fits every role.

Use AI to help organise learning paths by role and business context. For example, create separate paths for SDRs, AEs, channel partners, franchise staff, or regulated-industry reps. Then layer in optional modules for product lines, territories, or manager responsibilities.

Automate the build where repetition adds no value

This step is often underused. Platforms that support AI-driven course generation can convert source material into structured learning assets much faster than manual production.

For example, Learniverse's overview of how AI is transforming corporate training shows the operating model clearly. A team can turn manuals, PDFs, or internal content into courses, quizzes, and microlearning, then organise them into branded academies with tracking and role-based assignments. That's different from using a static LMS as a storage folder.

If you're comparing broader workflow ideas, this perspective on AI for sales automation is also useful because it explains how automation shifts work from repetitive admin to guided execution across the sales stack.

Use a phased launch instead of a big-bang rollout

A measured launch usually works better than trying to train everyone on everything at once.

Pilot one path: Choose one role, one region, or one high-friction problem.

Validate the content: Ask managers whether the scenarios match live field conditions.

Set reinforcement rules: Decide how often managers review, coach, and certify.

Launch the academy: Make access simple. If people have to hunt for training, usage drops.

Review data quickly: Look for confusion points, low-engagement modules, and manager adoption issues.
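For the review step, a minimal sketch like the following can surface low-engagement modules and likely confusion points from pilot data. The data layout and both thresholds are assumptions to adjust, not benchmarks.

```python
# Hypothetical pilot data: module -> (assigned, completed, average assessment score 0..1).
pilot = {
    "discovery-basics": (20, 18, 0.81),
    "pricing-update": (20, 9, 0.77),
    "objection-drills": (20, 17, 0.58),
}

MIN_COMPLETION = 0.6  # assumed threshold for "low engagement"
MIN_SCORE = 0.7       # assumed threshold for a likely confusion point


def review(data: dict[str, tuple[int, int, float]]) -> dict[str, list[str]]:
    """Return only the modules that need attention, with the reasons why."""
    flags = {}
    for module, (assigned, completed, score) in data.items():
        issues = []
        if assigned and completed / assigned < MIN_COMPLETION:
            issues.append("low engagement")
        if score < MIN_SCORE:
            issues.append("possible confusion point")
        if issues:
            flags[module] = issues
    return flags


print(review(pilot))
```

Low completion and low scores usually call for different fixes: the first suggests an access or relevance problem, the second suggests the content or scenario needs rework.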

Traditional versus AI-automated rollout

| Phase | Traditional Manual Approach | AI-Automated Approach (Learniverse) |
|---|---|---|
| Content gathering | Files are collected and sorted manually across folders and inboxes | Source materials are uploaded and organised into one training workspace |
| Course creation | Instructional designers rewrite content into modules and quizzes by hand | AI converts existing materials into interactive lessons and assessments |
| Role assignment | Admins manually map who needs what training | Learning paths are structured by role and assigned more systematically |
| Branding and launch | Academy setup requires multiple manual configuration steps | Branded academy setup is faster and more standardised |
| Reporting | Managers compile updates from separate spreadsheets and systems | Dashboards centralise learner progress and engagement signals |
| Maintenance | Updates require revising content piece by piece | AI-assisted updates reduce repetitive editing work |

The best use of AI in sales and training isn't replacing experts. It's freeing them to spend more time on design, coaching, and quality control.

The practical goal is simple. Reduce manual build time, increase role relevance, and create a programme that can be maintained after launch.

Real-World Use Cases for Training Leaders

Training strategy makes more sense when you can see who uses it and why. Here are three common situations that training leaders face.

Franchise operations leader

A franchise group opens new locations regularly. Each site needs the same service standards, the same product knowledge, and the same customer-facing language. But local managers train differently, so onboarding quality varies.

The operations leader responds by centralising onboarding into a repeatable academy. New staff complete the same sequence, managers certify the same core tasks, and refreshers are assigned when promotions, products, or policies change. The value isn't only speed. It's consistency across locations.

Corporate training director in a regulated sector

A training director in a regulated environment faces a harder problem. The team doesn't just need better sales conversations. It also needs consistent adherence to approved processes and language.

That pressure grows when reinforcement is weak. In California's regulated industries, 70% of salespeople lack formal training, and state DOL data from late 2025 shows 25% annual sales churn in these sectors due to inadequate follow-up, as summarised in this discussion of the lack of sales training. In practice, that means the director can't rely on annual certification alone. They need continuous, bite-sized reinforcement tied to live risks.

So the programme shifts from one annual event to a rhythm. Short modules, scenario checks, manager reviews, and recurring reminders become part of the operating cadence. The training director starts treating reinforcement as control, not decoration.

In regulated sales environments, the absence of follow-up is its own risk category.

SMB owner or talent manager

A small business often has the clearest need and the fewest resources. There may be no enablement team, no instructional designer, and no time for elaborate curriculum projects.

The owner usually starts with a simple question. How do I get everyone selling in the same way without spending months building a formal academy?

The answer is usually a lightweight system. Standardise the message, create a short onboarding path, define what good discovery looks like, and give managers a few scorecards they can use. In smaller teams, discipline matters more than sophistication. A modest programme that gets used beats an impressive one that never leaves the planning stage.

What these examples have in common

These leaders work in different conditions, but the pattern is the same.

They reduce variation: Everyone learns the same core standards.

They reinforce in the flow of work: Training shows up between real customer interactions, not apart from them.

They make managers part of the system: Coaching isn't optional.

They favour usable content: Materials are short, current, and tied to real decisions.

That's what effective sales and training looks like when it leaves the whiteboard and enters daily operations.

Conclusion: From Training to Transformation

Sales and training works when leaders stop treating it like a library and start treating it like infrastructure. The strongest programmes don't rely on occasional inspiration. They run on clear curriculum design, role relevance, manager coaching, and disciplined measurement.

The commercial case is strong. The operational case is stronger. Training improves execution, reduces inconsistency, supports compliance, and gives sales leaders a more reliable way to shape performance over time.

AI adds a practical advantage. It reduces the manual work that has slowed training teams for years, especially when they need to convert scattered content into structured learning paths and keep those paths updated. That doesn't make strategy less important. It makes strategy easier to apply at scale.

If you're a new training manager, don't try to build everything at once. Start with one business problem, one role, and one measurement plan. Build the habit of reinforcement early. Keep the content usable. Involve managers from the start.

That's how sales and training moves from an event to an engine.

If you want a faster way to turn manuals, PDFs, and internal knowledge into structured learning paths, Learniverse gives training teams a practical way to build branded academies, automate course creation, and track learner progress without managing every step by hand.