A soft skills programme stops being “nice to have” when it starts moving promotion, retention, and turnover. According to a Canadian study cited by National Soft Skills, structured soft skills programmes were associated with a 32% higher promotion rate for participants and, in regulated industries, a 3.5:1 ROI. AI-enabled tracking also helped teams monitor outcomes such as an 18% reduction in turnover.

That matters because most organisations still try to train soft skills in fragments. One workshop on feedback. A webinar on communication. A manager toolkit no one uses. The content may be sensible, but the system around it is weak. Soft skills only stick when the programme is built like an operating model: diagnose the gaps, design practice that looks like work, deliver in a format people can use, measure behaviour change, then scale without burying the L&D team in admin.

That’s the standard I use. Not inspirational sessions. Not check-the-box completions. A practical framework that lets training leaders build a repeatable programme and improve it over time.

Auditing Skills and Defining Clear Objectives

Soft skills programmes usually fail at the scoping stage, not in the classroom. The teams I see struggle are rarely short on content. They are short on diagnosis. “We need better communication” sounds reasonable until you ask who needs it, in which workflow, and what business problem it should fix.

A proper audit turns a vague request into an operating plan. It gives L&D a way to target the right behaviour, tie it to performance, and avoid building training that feels relevant but changes nothing.

The pattern is familiar across sectors. Employers consistently report gaps in communication, adaptability, teamwork, and problem solving, including in the Conference Board of Canada research often cited in workforce readiness discussions. In practice, those gaps are usually visible long before they appear in a learning brief. They show up as repeat escalations, muddled handoffs, delayed decisions, and managers avoiding hard conversations.

Start with business friction, not skill labels

“Communication” is too broad to audit well and too broad to train well.

Break it into moments where work succeeds or breaks down:

Manager communication: Running one-to-ones, giving corrective feedback, handling resistance.

Frontline communication: De-escalating frustration, listening accurately, clarifying next steps.

Cross-functional communication: Writing concise updates, surfacing risk early, reducing unnecessary back-and-forth.

Leadership communication: Setting direction, explaining change, handling ambiguity without creating confusion.

I start with friction in the workflow. Where do projects stall? Where do customers get passed around? Which conversations managers postpone because they know they will go badly? Those answers produce a sharper training scope than any generic competency list.

For teams that need structure, a practical training needs analysis template helps connect role expectations, current performance, and learning priorities without turning the audit into an admin exercise.

A simple audit pulls from four evidence streams:

Stakeholder interviews with business leaders, people managers, and HR.

Performance signals such as customer complaints, escalation patterns, team handoff issues, or project delays.

Employee voice from surveys, pulse checks, or manager comments.

Observed behaviours from call reviews, meeting audits, coaching notes, or peer feedback.

Practical rule: If the team cannot name the workplace moments where the skill appears, the training request is still too fuzzy.

Build the audit around observable behaviour

Soft skills become trainable when the behaviour is concrete. “Show more empathy” is too loose to coach or measure. “Acknowledge the concern before proposing a solution” can be observed in a call, practised in a scenario, and reinforced by a manager.

The distinction is critical because your objectives, activities, and measurement all depend on it.

Use a framing table like this:

| Audit question | Weak version | Strong version |
| --- | --- | --- |
| What skill is missing? | Better communication | Clear, concise updates across teams |
| Where does it show up? | In meetings | Project handoffs and status reporting |
| What behaviour should change? | Be clearer | Summarise actions, owners, and deadlines in one message |
| What business result matters? | Better teamwork | Fewer delays caused by misalignment |

This is also where AI tools start to help. Learniverse can speed up pattern detection by organising interview themes, tagging repeated behavioural issues across feedback sources, and turning those findings into draft skill matrices and learning objectives. That saves time, but it does not replace judgement. L&D still needs to decide which gaps are trainable, which are management issues, and which sit upstream in process design.

Use 360 feedback carefully

360 feedback is useful when you treat it as directional evidence, not a verdict. It often surfaces behaviours that never show up in a dashboard. A manager may rate someone as decisive. Peers may describe the same person as dismissive in cross-functional work. Both views can be true.

It needs a few guardrails.

Use patterns, not isolated comments. One dramatic remark should not reshape the whole programme.

Use behavioural prompts. Ask what the person does, not what their personality is like.

Use role context. A low collaboration score means something different for a sales lead than for a compliance analyst.

The strongest audits triangulate 360 feedback with workflow evidence and manager observation. If all three point to the same issue, the problem is usually real and trainable.
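The triangulation rule can be sketched as a set intersection. This is a minimal illustration, assuming each evidence stream has already been tagged with short issue labels; the function name and the example tags are hypothetical, not part of any specific tool.

```python
def triangulated_issues(
    feedback_360: set[str],
    workflow_evidence: set[str],
    manager_observation: set[str],
) -> set[str]:
    """Return only the issues that all three evidence streams point to."""
    return feedback_360 & workflow_evidence & manager_observation


# Hypothetical issue tags, for illustration only.
feedback_360 = {"dismissive in cross-functional work", "unclear handoffs"}
workflow_evidence = {"unclear handoffs", "repeat escalations"}
manager_observation = {"unclear handoffs", "avoids hard conversations"}

confirmed = triangulated_issues(feedback_360, workflow_evidence, manager_observation)
print(confirmed)
```

Issues that appear in only one or two streams are not discarded by this logic. They simply need more evidence before they shape the programme.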

This same principle shows up outside corporate learning too. Programmes built for younger learners, such as social skills training for K-8, also work best when the target behaviour is specific, observable, and practised in context rather than treated as an abstract trait.

Turn findings into clear learning objectives

Once the audit is done, convert each finding into an objective that describes the behaviour, the context, and the standard. That is where many soft skills programmes either become operational or stay generic.

For example:

Managers will deliver corrective feedback using a structured conversation model that addresses the issue, impact, and next step.

Customer-facing staff will use active listening and clarification techniques before offering a solution.

Team leads will run project updates that end with decisions, owners, and deadlines.

Supervisors will adjust communication style when handling tension or resistance.

A good objective gives design teams something they can build around and gives managers something they can reinforce on the job. A weak objective reads like a value statement. A strong one reads like a workplace behaviour.

Before approving the scope, test every objective against three questions:

Can a manager observe it?

Can a learner practise it?

Can the organisation measure whether it happened more often after training?

If the answer is no to any of the three, the objective needs more work. That discipline is what turns soft skills training from a one-off workshop into a repeatable system.
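The three-question test above can be sketched as a small checklist. This is an illustrative sketch, not a prescribed tool: the `Objective` class, its field names, and the yes/no answers assigned to the two examples are assumptions made for the demonstration.

```python
from dataclasses import dataclass


@dataclass
class Objective:
    """A draft learning objective plus the answers to the three scoping questions."""
    text: str
    observable: bool   # Can a manager observe it?
    practisable: bool  # Can a learner practise it?
    measurable: bool   # Can the organisation measure whether it happened more often?


def failed_checks(objective: Objective) -> list[str]:
    """Return the names of the questions the objective fails, in question order."""
    checks = {
        "observable": objective.observable,
        "practisable": objective.practisable,
        "measurable": objective.measurable,
    }
    return [name for name, passed in checks.items() if not passed]


def ready_for_scope(objective: Objective) -> bool:
    """An objective is approved only when all three answers are yes."""
    return not failed_checks(objective)


# Example answers are assumed for illustration.
strong = Objective(
    text="Team leads will run project updates that end with decisions, owners, and deadlines.",
    observable=True, practisable=True, measurable=True,
)
weak = Objective(text="Be clearer", observable=False, practisable=True, measurable=False)

print(ready_for_scope(strong))
print(failed_checks(weak))
```

Returning the failed questions, rather than a single pass or fail, tells the design team which part of the objective to rework.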

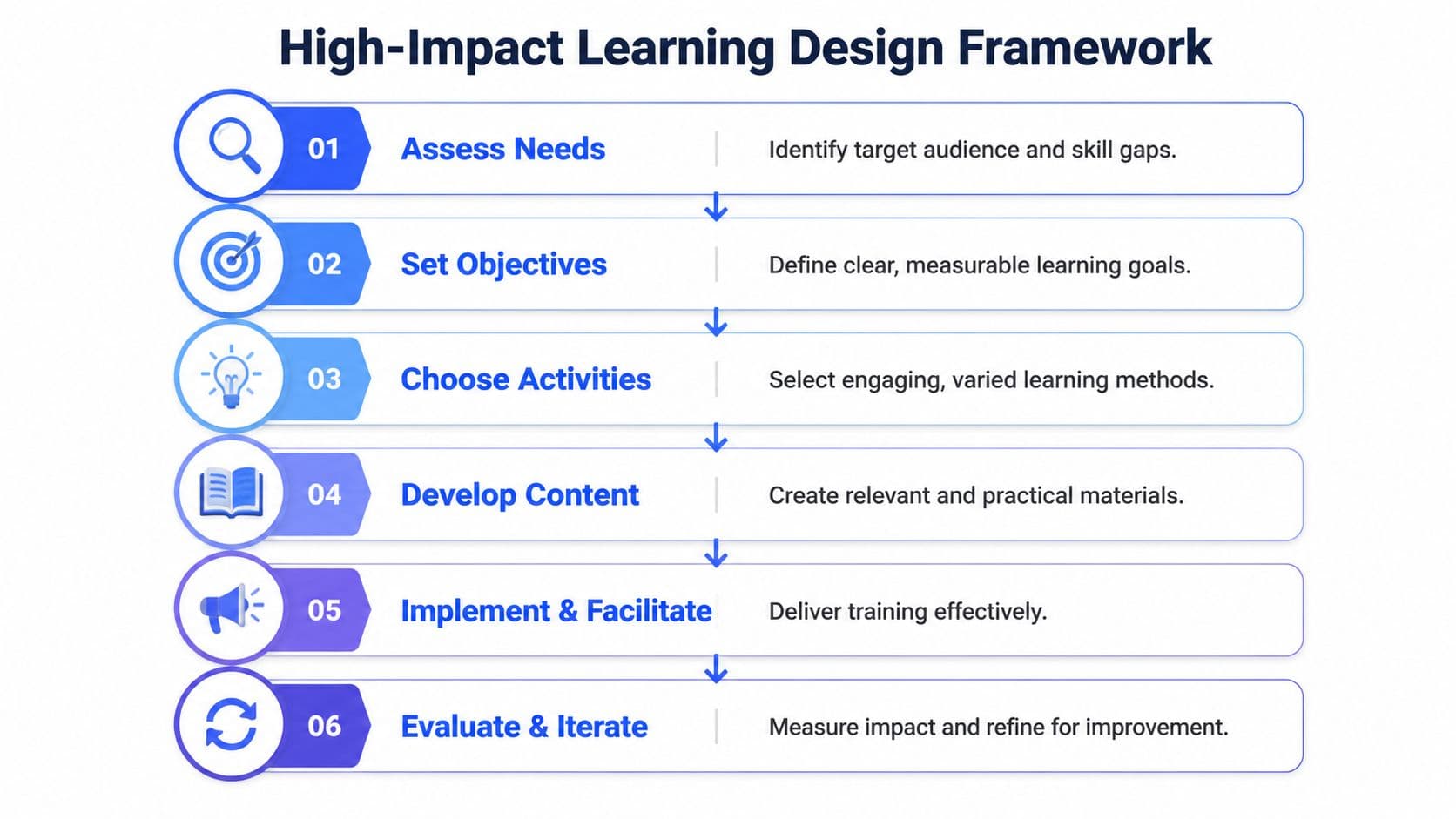

Designing High-Impact Learning Activities

Teams forget lectures fast. They remember practice that felt uncomfortably close to a real conversation.

The design standard is simple. Every activity should rehearse a workplace behaviour under realistic conditions, with feedback tight enough to change the next attempt. If the exercise only tests recall, it will not improve how a manager gives feedback, how a lead handles resistance, or how a customer-facing employee resets a tense exchange.

That is the operating difference between awareness training and performance training.

Match the activity to the skill cluster

Soft skills sit in different behaviour families, and each one needs a different practice format. Many weak programmes rely on a single method because it is easy to schedule. A discussion-based session gets used for conflict. A self-paced module gets used for coaching. A webinar gets used for leadership presence. The issue is not the format itself. The issue is fit.

Use a mapping like this:

| Skill cluster | Best-fit activities | Why it works |
| --- | --- | --- |
| Communication | Role-play, message rewriting, response drills | Learners practise wording, tone, and structure |
| Feedback and conflict | Branching scenarios, coached rehearsal, reflection | People need consequence-based decisions |
| Leadership and coaching | Live practice, peer coaching, observation guides | The skill develops through judgement and follow-up |
| Teamwork and collaboration | Simulations, problem-solving tasks, team debriefs | Collaboration has to happen with other people |
| Time management and self-management | Microlearning, habit prompts, workflow exercises | These skills depend on repetition and routines |

Start with the moment at work that needs to improve. Then select the activity that lets people practise that moment with enough pressure, ambiguity, and consequence to make the learning stick.

In my teams, that rule prevents a common design failure. Stakeholders often ask for content on a broad topic such as communication. What they usually need is rehearsal for three or four narrow moments: opening a difficult conversation, asking a sharper follow-up question, closing a meeting with decisions, or responding without escalation.

Build scenarios that force choices

Soft skills improve through judgement. Learners need to decide what to say, hear the response, and adjust.

A useful scenario for a frontline manager is not a multiple-choice definition of constructive feedback. It is a short exchange where an employee becomes defensive, time is tight, and the manager has to open the conversation, respond to pushback, and leave with a clear next step. That sequence trains language, timing, and emotional control in the same rep.

Use a simple scenario-writing process:

Define the trigger. What starts the interaction?

Add emotional context. Is the other person frustrated, sceptical, rushed, or embarrassed?

Write realistic options. Avoid one ridiculous answer and one obvious winner.

Show the consequence of each choice. Let learners see how the conversation changes.

Require reflection. Ask what they would keep, change, or try next time.

Good scenarios create tension without becoming theatre. Learners should recognise the exchange from their own work.

For communication modules, I often start with rewrite-and-respond exercises before full role-play. Learners revise a weak message, explain why the change matters, and only then move into live practice. That lowers the social risk and improves the quality of the first rehearsal.

Use microlearning with discipline

Microlearning works well for narrow behaviours and reinforcement. It does not carry an entire soft skills programme on its own.

Use short modules in three places:

Before live practice to teach a model, checklist, or conversation structure

Between sessions to reinforce one behaviour at a time

After workshops to prompt use in real work situations

Short lessons are useful because they focus attention on one action. They fail when they replace practice for skills that depend on reading the room, handling emotion, or adapting in real time.

This is one area where AI tools help if they are used with restraint. Learniverse can generate scenario variations, role-play prompts, reflection questions, and reinforcement nudges from a defined objective set. That cuts design time and makes it easier to produce targeted practice assets at scale. It does not remove the need for facilitator judgement. Someone still has to decide which moments matter, which examples sound credible, and what good performance looks like.

Design for adults who already have context

Corporate learners bring experience, habits, and opinions into the room. Training has to respect that. If an activity feels generic or juvenile, participation drops and the debrief gets shallow.

The best design choices usually follow proven adult learning principles for workplace training: relevance to current work, room for self-direction, and immediate application. That sounds obvious, but it changes how activities are built. Case studies need believable constraints. Practice prompts need role-specific language. Reflection questions need to connect to an actual meeting, customer issue, or team tension the learner will face this week.

A useful contrast appears in youth programmes. Resources like social skills training for K-8 show how social behaviour is taught through modelling, repetition, and guided interaction. The principle still holds in corporate settings. The difference is that adult scenarios must reflect power dynamics, deadlines, cross-functional friction, and accountability for results.

Make practice safe enough to be honest

People avoid soft skills practice for predictable reasons. They worry about sounding awkward in front of peers. They are unsure what good looks like. They do not want to mishandle a conflict scenario in public.

Design can reduce that friction.

Use scripts as starting points. Give learners an opening phrase, not a full speech.

Let them observe first. A model example lowers uncertainty.

Break the skill into parts. Practise the opening before the pushback.

Debrief behaviours. Focus feedback on language, timing, and choices.

Repeat with variation. Improvement usually shows up on the second and third attempt.

A strong feedback module often follows this sequence:

Watch a short model conversation.

Identify what the manager did well and where the exchange weakened.

Rewrite the opening line.

Practise in pairs with a prompt card.

Repeat with a more defensive employee response.

Commit to one phrase each learner will use at work this week.

That sequence works because it builds load gradually. It also creates observable evidence that the learner can apply the skill, which matters later when the programme is measured for impact and scaled across teams.

High-impact activity design is the centre of the operating model. Get this part right, and the rest of the programme has something real to deliver, measure, and improve.

Choosing the Right Delivery Model

Companies with strong learning cultures are far more likely to develop the leadership and people capabilities they need, according to LinkedIn Learning’s workplace learning research. Delivery model is one of the biggest reasons those programmes either hold up in practice or stall after launch.

The choice is operational, not academic. Pick a format that matches the behaviour, the work environment, and the manager’s ability to reinforce it. Get that wrong, and even well-designed practice activities lose force once people return to the job.

A side-by-side view of the trade-offs

| Delivery model | Best use case | Strengths | Risks |
| --- | --- | --- | --- |
| Blended learning | Leadership, conflict, coaching, high-stakes communication | Stronger practice conditions, social accountability, room for reflection between sessions | Higher coordination load, more manager involvement, harder to schedule at scale |
| Fully virtual | Distributed teams, manager enablement across locations, fast enterprise rollouts | Broader reach, consistent delivery, easier access across regions | Passive sessions fail fast, attention drops if facilitation is weak |
| Microlearning-first | Frontline teams, reinforcement, just-in-time skill building | Fits shifts and workload constraints, supports repetition, useful for manager follow-up | Too shallow on its own for judgement-heavy conversations |

I use one rule first. The more a skill depends on judgement under pressure, the more live practice it needs.

A manager learning to handle a defensive performance conversation needs real-time response, feedback, and a second attempt. A customer support team standardising de-escalation phrases may get faster results from short modules, call-floor coaching, and quick refreshers. Same broad category of soft skills. Different delivery logic.

When blended learning earns the complexity

Blended delivery works best for skills that require rehearsal, reflection, and transfer over time. That includes coaching, conflict, feedback, and executive communication. These skills rarely improve in a single event.

A practical blended sequence usually looks like this:

self-paced prework to set a shared baseline

live workshop for practice and feedback

manager check-ins within one to two weeks

short reinforcement modules tied to real work scenarios

follow-up practice or peer review later in the month

The trade-off is coordination. Someone has to schedule the live session, brief managers, and keep reinforcement from slipping. In my teams, that extra effort paid off when the target skill affected performance reviews, leadership readiness, or cross-functional execution. It was not worth the overhead for every topic.

Learniverse helps here because it keeps those moving parts in one system. Teams can assign prework, trigger reinforcement by role, and track whether managers completed follow-up coaching instead of leaving the handoff to email and memory.

When fully virtual is the smarter choice

Virtual delivery makes sense when the business needs consistency across locations or when pulling people off the job is too expensive. It is also effective for manager cohorts who already share context and need structured practice more than classroom energy.

Bad virtual design is the core problem. Long webinars, weak facilitation, and vague breakout prompts produce low transfer in any company.

Use virtual delivery when you can support it with:

sessions short enough to keep participation high

breakout practice built around one observable behaviour

facilitator or peer feedback during the session

digital job aids that learners use in the same week

manager prompts that turn the session into on-the-job action

If your team is weighing live versus self-paced elements, this guide to synchronous vs asynchronous learning gives a practical framework for deciding what must happen in real time.

One caution. Virtual is efficient, but efficiency is not the same as behaviour change.

Where microlearning fits best

Microlearning works well for reinforcement, habit formation, and narrow behavioural targets. It is useful when employees have little uninterrupted time and managers can coach in the flow of work.

I use it for skills like meeting openings, active listening prompts, escalation language, and feedback structure. I do not use it alone for emotionally loaded conversations, conflict resolution, or coaching that depends on reading nuance in the moment.

Choose a microlearning-first model when these conditions are true:

the behaviour can be described clearly in a few steps

learners need access during the workday, not before or after it

supervisors can observe and reinforce the behaviour quickly

the business needs broad coverage with minimal disruption

Many organisations pair microlearning with coaching because the combination holds up better than content alone. If you need the business case for that support layer, this breakdown of how coaching impacts the bottom line is a useful reference.

The strongest programmes do not commit to one format across every skill. They build a delivery mix on purpose, then standardise the operating model behind it. That is how soft skills training becomes repeatable across teams, measurable over time, and easier to scale with AI.

Measuring Training Impact and ROI

A soft skills programme earns budget renewal when it proves one thing. People work differently after training, and the business benefits.

Attendance, completions, and post-session sentiment still have a place. They help diagnose delivery quality. They do not prove that managers give better feedback, that teams resolve conflict faster, or that customer conversations improve under pressure.

The practical standard is straightforward. Tie every learning experience to a target behaviour, then tie that behaviour to a business measure the function already trusts. Without that chain, ROI discussions drift into opinion.

Use Kirkpatrick, but run it like an operating model

Kirkpatrick still works. The mistake is treating it as an academic framework instead of a reporting discipline for L&D, managers, and business leaders.

| Level | What to measure | Example in soft skills training |
| --- | --- | --- |
| Reaction | Did learners find it relevant and usable? | Session feedback, confidence to apply the skill |
| Learning | Did they understand the model? | Scenario scores, knowledge checks, skill rehearsal ratings |
| Behaviour | Did they use it at work? | Manager observation, 360 follow-up, call review changes |
| Results | Did business performance shift? | Promotions, retention, turnover, team productivity |

The trade-off is simple. Level 1 and Level 2 data is fast to collect and easy to package. Level 3 and Level 4 data takes coordination across managers, HR, and operations, but that is where credibility comes from.

For example, if the programme teaches managers to run feedback conversations, start with practice scores and learner confidence. Then check whether managers hold the conversations, whether employees report clearer expectations, and whether recurring issues are addressed earlier. That sequence gives executives a line of sight from learning to performance.

Build dashboards by skill family, not by programme title

A single dashboard for "soft skills" creates noise. Communication, coaching, collaboration, and customer-facing behaviours produce different signals and need different measures.

A collaboration dashboard might include:

Learning indicators: completion of scenario practice, quality of submitted responses, participation in workshops

Behaviour indicators: peer feedback on responsiveness, meeting observation checklists, project handoff quality

Business indicators: fewer delays from missed dependencies, stronger cross-functional execution, lower avoidable escalation

A leadership communication dashboard should track a different mix:

Learning indicators: confidence to run difficult conversations, quality of role-play practice

Behaviour indicators: manager one-to-one consistency, team survey comments, follow-through on action items

Business indicators: promotion readiness, retention, turnover patterns, team productivity

Many teams lose precision when they report one blended success metric across very different skills, then struggle to explain what improved and why. Separate dashboards fix that problem and make follow-up action much clearer.
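One way to keep dashboards separate by skill family is to store each one as its own record with the same three indicator tiers. The sketch below reuses the indicators listed above; the dictionary layout, the `REQUIRED_TIERS` tuple, and the `missing_tiers` helper are illustrative assumptions, not a specific platform's schema.

```python
# Indicator tiers every dashboard is expected to cover (assumed structure).
REQUIRED_TIERS = ("learning", "behaviour", "business")

DASHBOARDS = {
    "collaboration": {
        "learning": ["scenario practice completion", "quality of submitted responses"],
        "behaviour": ["peer feedback on responsiveness", "project handoff quality"],
        "business": ["delays from missed dependencies", "avoidable escalations"],
    },
    "leadership communication": {
        "learning": ["confidence to run difficult conversations", "role-play quality"],
        "behaviour": ["one-to-one consistency", "follow-through on action items"],
        "business": ["promotion readiness", "retention", "turnover patterns"],
    },
}


def missing_tiers(dashboard: dict[str, list[str]]) -> list[str]:
    """List the indicator tiers a dashboard has not defined yet."""
    return [tier for tier in REQUIRED_TIERS if not dashboard.get(tier)]


for family, dashboard in DASHBOARDS.items():
    print(family, missing_tiers(dashboard))
```

A dashboard with an empty tier is a prompt to go back to the objective, since it usually means the behaviour or the business measure was never named.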

Measurement gets easier when every objective names a behaviour a manager can observe.

Combine manager judgement with system data

Good soft skills measurement uses both human observation and platform evidence. Surveys alone miss application. Platform analytics alone miss context and quality.

Use a mix such as:

learner self-assessment before and after training

manager checklists tied to specific behaviours

360 follow-up for roles where peer interaction matters

workflow data such as escalations, quality issues, or handoff errors

people metrics like promotion, retention, or turnover where relevant

I have seen one pattern hold up across programmes. The strongest evidence comes from systems identifying where practice happens and managers confirming whether the behaviour shows up in real work.

AI platforms help because they reduce the reporting burden. Learniverse, for example, can organise practice data, flag stalled participation, and surface weak skill areas without forcing an L&D team into manual spreadsheet work every week. That changes the economics of measurement. The team spends less time chasing inputs and more time reviewing evidence with managers.

For leaders who want a broader commercial lens, this piece on how coaching impacts the bottom line is useful because it frames people development in operational and financial terms rather than in learning jargon.

Review impact in cycles, not once at the end

Annual or end-of-programme evaluation usually produces muddy conclusions. By that point, managers have changed priorities, memory has faded, and too many variables are in play.

Use a review cadence instead:

Immediately after training: reaction and confidence to apply

Several weeks later: manager observation and learner self-report

Later in the cycle: performance indicators tied to the programme objective

This approach keeps the programme adjustable while it is still live. If practice completion is high but behaviour adoption is weak, the issue is not content coverage. It is manager reinforcement, scenario quality, or lack of opportunity to apply the skill. That is the level of diagnosis an operational playbook requires.
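That diagnostic step can be sketched as a simple rule. The thresholds (0.8 and 0.5), the function name, and the wording of the low-completion branch are assumptions for illustration; each team would set its own cut-offs.

```python
def diagnose(
    practice_completion: float,
    behaviour_adoption: float,
    high: float = 0.8,  # assumed threshold for "completion is high"
    low: float = 0.5,   # assumed threshold for "adoption is weak"
) -> str:
    """Suggest where to look first, given two rates between 0 and 1."""
    if practice_completion >= high and behaviour_adoption < low:
        # The pattern described above: people practised, but the behaviour
        # is not showing up at work, so content coverage is not the issue.
        return "check manager reinforcement, scenario quality, and opportunity to apply"
    if practice_completion < high:
        # Assumption: low completion points to delivery before anything else.
        return "check delivery and participation first"
    return "on track; keep monitoring"


print(diagnose(practice_completion=0.9, behaviour_adoption=0.3))
```

The rates themselves would come from platform data and manager checklists, reviewed at the mid-cycle checkpoint rather than at the end.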

One rule should stay fixed. Do not claim ROI if the target behaviour was never defined at the start. Without that anchor, the numbers may look polished, but the analysis will not hold up in a budget review.
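As a worked illustration of that rule, the sketch below refuses to compute an ROI ratio unless a target behaviour is named. It assumes ROI is expressed as monetised benefit divided by programme cost, which is one common reading of a figure such as 3.5:1; other definitions use net benefit. The function name and the dollar amounts are hypothetical.

```python
def roi_ratio(target_behaviour: str, monetised_benefit: float, programme_cost: float) -> float:
    """Return benefit per unit of cost, e.g. 3.5 for a 3.5:1 ratio."""
    if not target_behaviour.strip():
        raise ValueError("Define the target behaviour before claiming ROI.")
    if programme_cost <= 0:
        raise ValueError("Programme cost must be positive.")
    return monetised_benefit / programme_cost


# Hypothetical figures chosen so the arithmetic is easy to follow.
ratio = roi_ratio(
    target_behaviour="Managers deliver corrective feedback using issue, impact, next step",
    monetised_benefit=140_000,
    programme_cost=40_000,
)
print(f"{ratio}:1")
```

The hard part is not the division. It is agreeing with finance on how the benefit was monetised and which behaviour change produced it.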

Scaling Your Programme with AI Automation

A soft skills pilot can be elegant and still collapse at scale. That’s the core operational challenge. Content has to stay current. Practice has to feel relevant by role. Reinforcement has to continue after launch. Reporting has to happen without adding another layer of admin that no one can sustain.

That’s where AI changes the economics of programme delivery. Not because it replaces facilitators or managers. It doesn’t. It removes the repetitive work that keeps L&D teams stuck in manual production and patchy follow-up.

One study, cited in a workforce development resource on soft skills accessibility, found that traditional in-person training fails up to 60% of workers facing barriers such as transportation, while AI-powered eLearning boosted retention by 35%. That’s not just a delivery convenience. It’s a scale and access issue.

The manual model breaks in predictable ways

When organisations try to scale soft skills training manually, the same problems appear:

content updates lag behind policy or process changes

workshops vary by facilitator

reinforcement depends on which managers care enough to follow through

reporting arrives late and doesn’t help anyone improve the programme

localisation for role, region, or language becomes expensive and slow

None of those problems are solved by adding more PDFs to the LMS. They’re solved by reducing production friction and increasing adaptability.

Where AI is actually useful

AI earns its place when it handles repetitive, rules-based work and supports personalisation at the learner level. In practice, that means three high-value uses.

Content generation from existing materials

Many organisations already have the raw material for soft skills training. Policies, playbooks, call guides, manager handbooks, service standards, escalation procedures. The bottleneck is turning those materials into something teachable.

AI platforms can convert source material into microlearning lessons, quizzes, scenario prompts, and reinforcement content much faster than a manual build process. That doesn’t remove the need for instructional review. It shortens the path from source content to usable learning.

For teams comparing AI-enabled production workflows, this roundup of Direct AI's top tools for creators is a helpful reference point for how generative tools are being used to accelerate content work more broadly.

Personalised reinforcement

Not everyone needs the same next lesson. One manager struggles with feedback openings. Another struggles with listening when challenged. A customer-facing employee may need help with tone under pressure, while a supervisor needs help structuring one-to-ones.

AI can support adaptive paths that reinforce the exact behaviour a learner needs next. That’s one of the biggest advantages over static programmes. The training becomes less generic and more role-aware.

The Canadian source cited earlier also reports that generic content reduces engagement by 28% and recommends AI-driven personalisation to reach stronger learner satisfaction benchmarks. That’s a practical reminder: scale only works if relevance survives the rollout.

Faster analytics and cleaner decisions

L&D teams often spend too much time preparing reports and too little time deciding what to change. AI can surface patterns faster: where learners stall, which scenarios produce weak responses, which teams need refreshers, which managers are reinforcing the skill, and where dropout risk is rising.

That’s especially useful in larger rollouts where manual analysis becomes slow and inconsistent.

The point of automation isn’t fewer people in training. It’s fewer hours wasted on assembly work that software can handle.

How to scale without losing quality

AI can expand reach quickly, but speed creates its own risks. Poorly governed automation can flood learners with shallow content or amplify weak source material.

Use these guardrails:

Keep a human review step: SMEs and L&D leads should approve role-play logic, examples, and tone.

Prioritise high-friction skills first: Start with communication, feedback, and frontline service scenarios where scale pressures are obvious.

Use manager prompts alongside learner content: Training sticks better when managers know what to reinforce.

Refresh with intent: Don’t push content because the platform can. Push content when the behaviour needs reinforcement.

Monitor for relevance drift: If business conditions change, update examples and scenarios quickly.

Used this way, AI is a force multiplier. A platform such as Learniverse can turn company manuals, PDFs, and web content into interactive courses and microlearning, then automate learning paths and analytics so the L&D team can focus on design quality, coaching, and programme governance rather than manual production.

From Framework to Action

Soft skills training works when it stops behaving like an event. A workshop can create momentum. It can’t create a sustained capability on its own. Capability comes from a system.

That system starts with a hard look at current behaviour. Audit the work, not the buzzwords. Define objectives that managers can observe. Design activities that force judgement, not passive agreement. Choose a delivery model that fits the skill and the workflow. Measure application and business outcomes, not just attendance. Then use automation carefully so the programme can grow without turning the L&D function into a content factory.

The organisations that train soft skills well don’t treat communication, collaboration, or leadership as side topics. They treat them as operating skills. That changes the quality of management, the speed of execution, and the consistency of customer experience.

If your current approach is a patchwork of workshops, slide decks, and good intentions, that’s fixable. Start smaller than you think. One audience. One behaviour set. One measurement plan. Then build from evidence.

If you want a simpler way to operationalise that framework, Learniverse helps teams turn existing documents and internal knowledge into interactive training, automate delivery, and track learner progress without the usual manual setup. For L&D leaders who need to scale soft skills programmes with less admin and more consistency, it’s a practical starting point.