You're usually only one click away from exporting to CSV. That's the deceptive part.

The file downloads, you open it, and suddenly the report you promised leadership is held up by broken accents, shifted columns, IDs that lost their formatting, or learner records that shouldn't have been exported in the first place. For Canadian training teams, that's more than an annoyance. It affects reporting accuracy, bilingual usability, and privacy handling.

A good CSV export isn't a dump of whatever the system can produce. It's a controlled handoff from one system to another. When managers treat it that way, reporting gets faster, integrations become more dependable, and compliance reviews get easier.

Why Your CSV Export Strategy Matters

Most CSV problems don't start in Excel. They start earlier, when someone assumes export means finished.

In practice, exporting to CSV is the moment your learner data leaves a structured application and enters a fragile format that different tools interpret differently. Commas, quotes, encodings, dates, formulas, and identifiers can all behave in unexpected ways once the file lands on a desktop or gets sent to another team. If your organisation uses learner analytics for franchise oversight, onboarding, compliance tracking, or executive reporting, those small mistakes travel quickly.

Training managers often run into the same pattern. They need a learner progress report, click export, and only then realise the file isn't analysis-ready. A cleaner workflow starts before the download. If you're already working on reporting processes, Learniverse's guide to tracking learner progress is useful context for deciding what data you need before you export it.

What a usable export actually looks like

A usable CSV file has a few basic qualities:

Correct structure so every row has the same number of columns

Stable formatting so dates, IDs, and completion values stay readable

Bilingual compatibility so English and French text survive the trip intact

Appropriate scope so you export only the learners and fields required

Safe handling so personal data isn't copied around casually

Practical rule: If a manager has to spend the first half hour fixing the file, the export process is broken.

The business cost of bad exports

Bad exports create quiet operational drag. Managers second-guess reports. Analysts spend time cleaning data instead of interpreting it. HR teams hesitate to share learner files because they're unsure what's inside them. In regulated environments, weak handling can also create privacy exposure.

The better approach is simple. Treat CSV as part of data stewardship, not just a convenience feature. That shift changes what you check, what you automate, and what you allow out of the system.

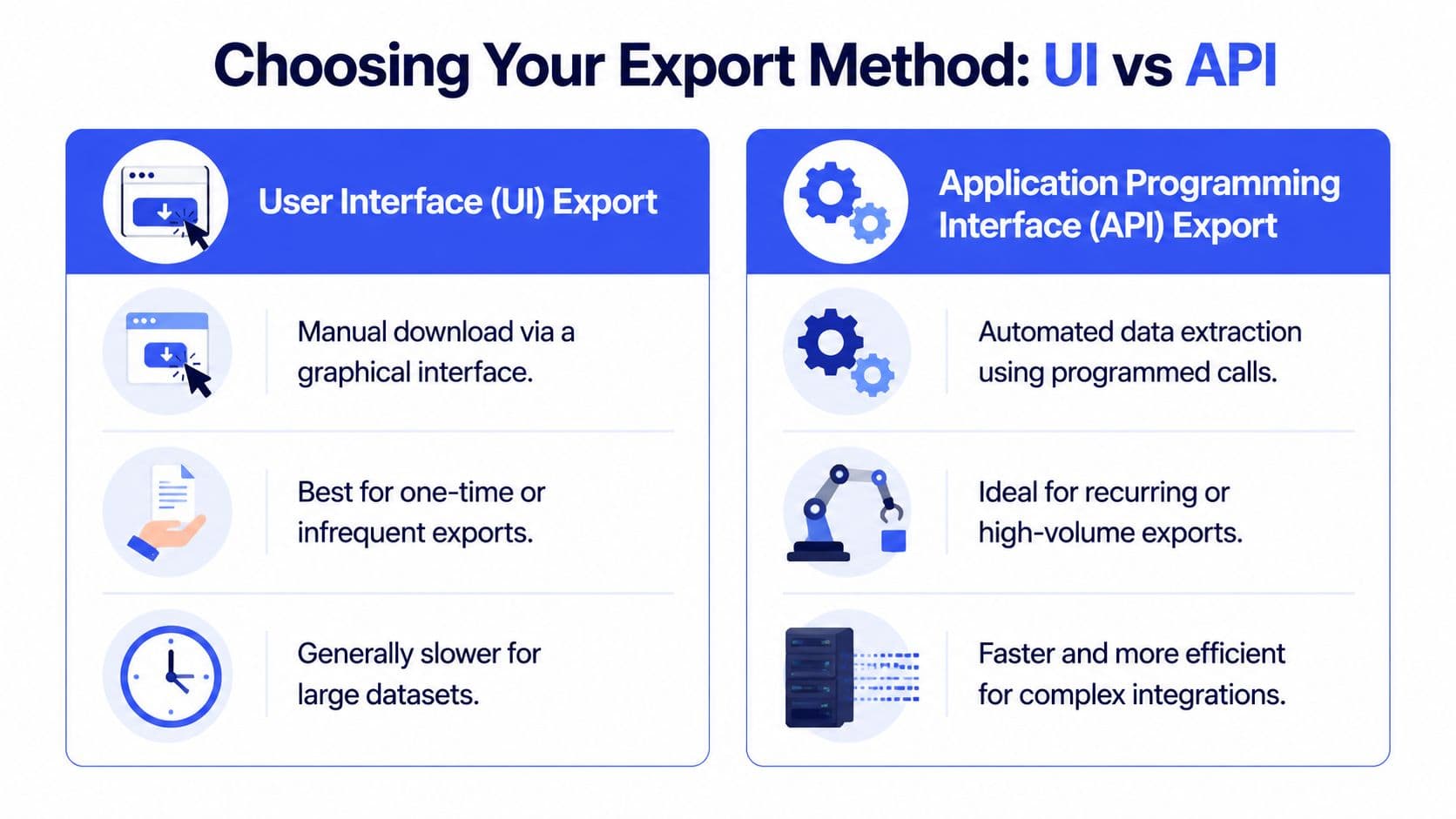

Choosing Your Export Method: UI versus API

The first decision isn't about file format. It's about how you'll get the data out.

Organisations typically follow one of two routes. They either run a manual export from the user interface, or they use an API-based workflow to pull data on a schedule. Both are valid. The mistake is using the same method for every job.

When the UI export is the right tool

The UI export is best when the need is immediate and limited. A manager wants completion data for one region. A trainer needs a list of learners who missed a deadline. A consultant is checking a one-time course launch.

That approach works because it's simple. No setup. No dependency on developers. No waiting for a scheduled process to be created.

Use the UI when:

The export is occasional and doesn't justify technical setup

The audience is small and the file won't be reused

You need a quick review rather than a repeatable pipeline

You want a manual check before the data leaves the platform

The downside is consistency. Manual exports depend on the person doing them. Filters get missed. Date ranges change. Column selections drift. File names become cryptic. That's manageable once in a while. It becomes painful when reporting is recurring.

When API export is the better option

API export makes sense when the file is part of a process, not a one-off task. If your team sends the same learner report every week, syncs training data into HR systems, or feeds a dashboard used by multiple stakeholders, manual downloading becomes a weak link.

According to Gartner's 2026 “Future of Data” report, organisations that automate routine data extraction tasks such as CSV exports see a 60% reduction in manual errors and free up an average of 8 hours per employee per month for higher-value analysis.

That doesn't mean every team needs a fully engineered integration. It means recurring exports deserve a repeatable method.

Here's a practical comparison:

| Method | Best use | Main strength | Main trade-off |
|---|---|---|---|
| UI export | One-time reports | Fast and accessible | Easy to do inconsistently |
| API export | Recurring and high-volume workflows | Repeatable and auditable | Needs setup and ownership |

What usually works in real teams

A mixed model works best.

Use manual exports for ad hoc operational questions. Use API workflows for anything that repeats, feeds another system, or contains sensitive learner data that shouldn't depend on someone downloading files to their laptop every Friday. If your team is deciding what's available through product interfaces and integrations, the Learniverse documentation is the right place to confirm current export options and implementation details.

Manual export is a task. API export is a process.

That distinction matters because process is where quality control becomes realistic. Once a workflow is automated, you can validate schema, standardise filenames, limit fields, and keep a record of what ran and when.
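For teams that script their recurring exports, those controls can be quite small. The Python sketch below assumes the rows have already been fetched from whatever API your platform provides. The field names, the `run_export` helper and the filename pattern are hypothetical examples, not a Learniverse or vendor API.

```python
import csv
import io
from datetime import datetime, timezone

# Hypothetical fixed schema for a recurring learner-progress export.
EXPECTED_FIELDS = ["learner_id", "course", "status", "completed_on"]


def run_export(rows, report_name, run_time):
    """Validate rows, build a predictable filename, serialise to CSV text,
    and return a small record of the run for an audit log."""
    for i, row in enumerate(rows, start=1):
        # Schema check: every row must carry exactly the expected columns.
        if set(row) != set(EXPECTED_FIELDS):
            raise ValueError(f"row {i} does not match the schema: {sorted(row)}")

    # Standardised filename: report name plus the date of the run.
    filename = f"{report_name}_{run_time:%Y-%m-%d}.csv"

    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=EXPECTED_FIELDS)
    writer.writeheader()
    writer.writerows(rows)

    # What ran and when; store this wherever your team keeps run logs.
    record = {"file": filename, "rows": len(rows), "ran_at": run_time.isoformat()}
    return filename, buffer.getvalue(), record


rows = [{"learner_id": "00417", "course": "Onboarding",
         "status": "completed", "completed_on": "2025-03-14"}]
name, text, record = run_export(rows, "learner-progress",
                                datetime(2025, 3, 31, 6, 0, tzinfo=timezone.utc))
print(name)  # learner-progress_2025-03-31.csv
```

The important design choice here is failing loudly on a schema mismatch. If the column list changes, the run stops. It does not quietly produce a file with a different shape.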

Mastering CSV Formatting and Encoding

Most broken CSV files are technically valid files. They're just interpreted badly by the next tool.

That's why formatting choices matter more than many managers expect. If you want CSV exports to work consistently, focus on three things first: delimiters, text qualifiers, and encoding.

Delimiters and quoted text

The name CSV implies commas, but receiving tools don't all behave the same way. Some expect commas. Others open semicolon-delimited files more cleanly, depending on regional settings (for example, locales that use the comma as the decimal separator). If the entire file appears in one column after import, check for a delimiter mismatch first.
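If you're unsure which delimiter a file uses, Python's standard `csv.Sniffer` can guess from a sample. It's a heuristic, so treat the result as a hint to confirm rather than a guarantee. The sample rows are invented.

```python
import csv


def detect_delimiter(sample: str) -> str:
    """Guess whether a CSV sample uses commas, semicolons or tabs."""
    dialect = csv.Sniffer().sniff(sample, delimiters=",;\t")
    return dialect.delimiter


comma_sample = "learner_id,province,status\n00417,ON,completed\n00982,QC,overdue\n"
semicolon_sample = "learner_id;province;status\n00417;ON;completed\n00982;QC;overdue\n"

print(detect_delimiter(comma_sample))      # ,
print(detect_delimiter(semicolon_sample))  # ;
```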

Text qualifiers matter just as much. If a field contains a comma, such as a job title or a location description, that value should be wrapped in double quotes. Without quotes, the parser treats the comma as a separator and shifts the row structure.
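Python's standard `csv` module shows the expected behaviour. In its default quoting mode, it wraps a field containing a comma in double quotes and doubles any quotes inside a field. The learner values are made up for illustration.

```python
import csv
import io

rows = [
    ["learner_id", "job_title", "notes"],
    ["00417", "Manager, Retail Operations", 'Said "ready" for audit'],
]

buffer = io.StringIO()
# QUOTE_MINIMAL (the default) quotes only fields containing special characters
# such as the delimiter or the quote character; embedded quotes are doubled.
writer = csv.writer(buffer, quoting=csv.QUOTE_MINIMAL, lineterminator="\n")
writer.writerows(rows)
print(buffer.getvalue())
# learner_id,job_title,notes
# 00417,"Manager, Retail Operations","Said ""ready"" for audit"
```

A parser that follows the same rules reads the quoted comma as text, so the row keeps three columns.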

Check these details before sending the file onward:

Delimiter match so the receiving tool splits columns correctly

Double quotes around text fields that may contain commas

Consistent header row so downstream users know what each field represents

Encoding is the Canadian pain point

Encoding errors are where bilingual learner data often breaks.

If names like Hélène, François, or Montréal appear as garbled text after export, the problem usually isn't the learner record. It's the encoding used when the file was written or opened. Many vendor guides overlook the need for UTF-8 with BOM for Canada's bilingual data. A 2025 eLearning Industry Canada survey found that 68% of corporate trainers in Quebec and Ottawa report CSV import failures caused by encoding issues with French accents, as cited in the background reference on exporting to CSV.
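One common way this garbling happens is easy to reproduce. Text saved as UTF-8 is read back as if it were the Windows-1252 code page. Here is a minimal Python illustration; other mismatches produce different symptoms.

```python
# Reproduce a common encoding mismatch: UTF-8 bytes decoded as Windows-1252.
name = "Hélène"
utf8_bytes = name.encode("utf-8")      # é and è each become two bytes

garbled = utf8_bytes.decode("cp1252")  # the wrong code page
print(garbled)                         # HÃ©lÃ¨ne

print(utf8_bytes.decode("utf-8"))      # Hélène (right codec, original text)
```

That's why retyping accents by hand is the wrong fix. In this case the bytes are fine; only the codec used to read them is wrong.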

For Canadian organisations, UTF-8 is the baseline. In many spreadsheet workflows, UTF-8-SIG or UTF-8 with BOM is the safer choice because some desktop tools recognise it more reliably.
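In Python, the `utf-8-sig` codec writes that byte order mark for you. This sketch writes a small bilingual file to a temporary folder and inspects the first bytes. The file name and rows are illustrative.

```python
import csv
import tempfile
from pathlib import Path


def write_bilingual_csv(path: Path, rows: list[list[str]]) -> None:
    # "utf-8-sig" prepends the UTF-8 byte order mark (EF BB BF).
    # newline="" lets the csv module control line endings itself.
    with path.open("w", encoding="utf-8-sig", newline="") as handle:
        csv.writer(handle).writerows(rows)


rows = [
    ["learner", "ville"],
    ["Hélène Tremblay", "Montréal"],
    ["François Roy", "Québec"],
]

with tempfile.TemporaryDirectory() as tmp:
    out = Path(tmp) / "learners_fr.csv"
    write_bilingual_csv(out, rows)
    raw = out.read_bytes()

print(raw[:3])  # b'\xef\xbb\xbf'
# Reading back with the same codec strips the BOM again.
print(raw.decode("utf-8-sig").splitlines()[1])  # Hélène Tremblay,Montréal
```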

What to set before and after export

If you have any control over the export configuration or preprocessing step, use this checklist:

Set UTF-8 or UTF-8-SIG explicitly when bilingual text is involved.

Standardise your delimiter and confirm what the receiving system expects.

Keep date formats stable, ideally one format such as ISO 8601 (YYYY-MM-DD) across the entire file.

Open a sample in two tools, not just one. A text editor and a spreadsheet app will reveal different issues.

Test French names and labels first, because they usually expose encoding mistakes immediately.
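Parts of that checklist can be automated. The sketch below uses a hypothetical `check_encoding` helper that flags three problems:

- files that aren't valid UTF-8
- files without a BOM
- lines containing fragments typical of double-encoded French accents

The marker list is generated from a handful of accented letters and is deliberately incomplete.

```python
import codecs

# Accented letters common in French; their cp1252 mis-decodings ("Ã©" and so on)
# are what double-encoded text typically looks like. Not an exhaustive list.
FRENCH_ACCENTS = "àâçéèêëîïôûùÉÈÀÇ"
MOJIBAKE_MARKERS = {ch.encode("utf-8").decode("cp1252") for ch in FRENCH_ACCENTS}


def check_encoding(raw: bytes) -> list[str]:
    """Return human-readable encoding problems (an empty list if none found)."""
    try:
        text = raw.decode("utf-8-sig")
    except UnicodeDecodeError as exc:
        return [f"not valid UTF-8 at byte {exc.start}"]

    problems = []
    if not raw.startswith(codecs.BOM_UTF8):
        problems.append("no UTF-8 BOM; some spreadsheet apps may misread accents")
    for line_no, line in enumerate(text.splitlines(), start=1):
        if any(marker in line for marker in MOJIBAKE_MARKERS):
            problems.append(f"line {line_no} looks double-encoded: {line!r}")
    return problems


sample = "nom,ville\nHélène,Montréal\n"
doubled = sample.encode("utf-8").decode("cp1252").encode("utf-8-sig")

print(check_encoding(sample.encode("utf-8-sig")))  # []
print(check_encoding(sample.encode("cp1252")))     # reports invalid UTF-8
print(check_encoding(doubled))                     # flags line 2
```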

If accents break in the first ten rows, stop there. Don't clean the file manually. Fix the export setting.

What doesn't work well

What fails most often is the “open it and save it again” habit. A manager opens the CSV in a spreadsheet app, sees broken characters, saves it under a new name, and unknowingly changes encoding, date handling, or formula behaviour again. That can make the file look fixed while introducing fresh corruption.

The safer move is to identify the root setting. A clean export with the right encoding will survive transfer, review, and import without needing rescue work.

Preparing Clean Data Before You Export

The easiest CSV to clean is the one that never needed cleaning.

Many teams treat export as the start of data preparation. It should be the end of it. If the learner dataset is cluttered, inconsistent, or wider than necessary before export, the CSV preserves those problems in a less forgiving format.

Reduce the file at the source

Start by narrowing the dataset inside the platform before you export anything. Pull only the records needed for the decision in front of you. If a regional manager needs Ontario completion data for one business unit, don't export all learners across every franchise and every date range.

That habit has two benefits. First, the file becomes easier to review. Second, fewer unnecessary personal fields leave the system.

Use pre-export filters for:

Business unit or location such as province, franchise, or department

Relevant time window such as current quarter or active programme period

Specific learner status like enrolled, completed, overdue, or inactive

Removal of test accounts so sandbox records don't pollute reporting
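The platform's own filters are the first place to do this. If a recurring export runs through a script or API instead, you can apply the same scoping before anything is written to disk. Here is a sketch with hypothetical records and field names:

```python
from datetime import date

# Hypothetical learner records; field names are illustrative only.
learners = [
    {"id": "00417", "province": "ON", "unit": "Retail", "status": "completed",
     "enrolled_on": date(2025, 2, 3), "is_test": False},
    {"id": "00982", "province": "QC", "unit": "Retail", "status": "overdue",
     "enrolled_on": date(2025, 2, 10), "is_test": False},
    {"id": "99001", "province": "ON", "unit": "Retail", "status": "completed",
     "enrolled_on": date(2025, 2, 12), "is_test": True},   # sandbox account
    {"id": "00311", "province": "ON", "unit": "Retail", "status": "completed",
     "enrolled_on": date(2024, 11, 20), "is_test": False},  # outside the window
]


def scope(records, province, unit, start, end, statuses):
    """Keep only the learners a specific report actually needs."""
    return [
        r for r in records
        if r["province"] == province
        and r["unit"] == unit
        and start <= r["enrolled_on"] <= end
        and r["status"] in statuses
        and not r["is_test"]
    ]


q1 = scope(learners, "ON", "Retail", date(2025, 1, 1), date(2025, 3, 31),
           {"completed", "overdue"})
print([r["id"] for r in q1])  # ['00417']
```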

If you're still organising learner records upstream, the quick start guide for adding learners is a good reminder that input discipline affects reporting quality later.

Standardise fields before the file exists

A 2025 Forrester report found that data teams spend up to 40% of their time cleaning and preparing data. It also found that pre-export validation rules in source systems can reduce that burden.

That aligns with what operations teams see every day. The pain usually comes from avoidable inconsistency:

One manager enters dates one way, another uses a different format

Department names vary slightly across records

Employee IDs are stored as mixed text and numeric values

Test users sit next to real employees in the same export

Those aren't CSV issues. They're source-data issues.
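Validation rules like these can live in the source system or in a pre-export step. The sketch below assumes three hypothetical rules: a department lookup, two accepted date inputs, and a five-digit employee ID scheme. It converts what it can to one canonical form and reports what it can't. It accepts only one slash-style date format, because allowing both DD/MM/YYYY and MM/DD/YYYY would be ambiguous.

```python
import re
from datetime import datetime

# Hypothetical rules: canonical department names, accepted date inputs,
# and a five-digit employee ID scheme.
DEPARTMENTS = {
    "hr": "Human Resources",
    "human resources": "Human Resources",
    "retail ops": "Retail Operations",
    "retail operations": "Retail Operations",
}
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")  # one slash format only, to avoid ambiguity
EMPLOYEE_ID = re.compile(r"\d{5}")


def normalise(record: dict) -> tuple[dict, list[str]]:
    """Return a cleaned copy of the record plus a list of validation errors."""
    clean, errors = dict(record), []

    dept = DEPARTMENTS.get(str(record["department"]).strip().lower())
    if dept is None:
        errors.append(f"unknown department: {record['department']!r}")
    else:
        clean["department"] = dept

    for fmt in DATE_FORMATS:
        try:
            parsed = datetime.strptime(str(record["completed_on"]).strip(), fmt)
        except ValueError:
            continue
        clean["completed_on"] = parsed.date().isoformat()  # always YYYY-MM-DD
        break
    else:  # no format matched
        errors.append(f"unrecognised date: {record['completed_on']!r}")

    # IDs stay text; a numeric 417 is padded back to "00417".
    emp_id = str(record["employee_id"]).strip().zfill(5)
    if EMPLOYEE_ID.fullmatch(emp_id):
        clean["employee_id"] = emp_id
    else:
        errors.append(f"invalid employee ID: {record['employee_id']!r}")

    return clean, errors


print(normalise({"department": " HR ", "completed_on": "14/03/2025", "employee_id": 417}))
```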

Think like a handoff owner

A useful mental model is to treat the CSV as a handoff to another system or another person. If someone else had to import, audit, or transform the file tomorrow, would the structure make sense without follow-up questions?

That same handoff mindset shows up in other operational file conversions too. For example, teams dealing with payments learn quickly that structured input matters when converting spreadsheets to SEPA XML files, because downstream systems are far less forgiving than the spreadsheet where the data started.

Clean exports are built upstream. Post-export cleanup should be exception handling, not the default workflow.

A short pre-export review

Before clicking download, confirm:

| Check | Why it matters |
|---|---|
| Field list | Prevents over-exporting unnecessary columns |
| Date format | Stops mixed interpretations later |
| Naming consistency | Keeps filters and grouping usable |
| Test data removal | Avoids false counts and noise |
| Scope sanity check | Reduces privacy risk and file size |

Teams that do this consistently spend less time repairing spreadsheets and more time answering actual business questions.

Troubleshooting Common CSV Export Errors

Even careful teams hit CSV issues. The difference is whether they know the symptom fast enough to fix the root cause.

Most problems fall into a short list: broken identifiers, shifted rows, malformed text, or spreadsheet security warnings. Each one has a predictable pattern.

Postal codes and IDs losing their format

This is one of the most common frustrations in Canadian learner exports. Spreadsheet tools often try to be helpful and interpret text-like values as numbers. That breaks fields that should stay exactly as written.

In Canada, a common pitfall is mishandled postal codes: tools misinterpret them as numbers and drop leading zeros. According to the cited reference on common CSV mistakes, this causes 45% of import failures in some BC training platforms. Proper data type validation before import solves it.

The same logic applies to employee IDs, franchise codes, or internal learner identifiers.

Use this quick diagnosis table:

| Symptom | Likely cause | Direct fix |
|---|---|---|
| Postal code looks wrong | Spreadsheet converted it | Import column as text |
| Leading zero disappeared | Field treated as numeric | Force text format before opening or importing |
| ID shows scientific notation | Large identifier auto-formatted | Keep IDs as strings, not numbers |
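Python's `csv` module is a useful reference point because it never converts types: every field comes back as text. The last two lines roughly imitate what automatic number detection does to the same values. The sample values are invented, and `badge_number` is a hypothetical long identifier.

```python
import csv
import io

raw = "employee_id,postal_code,badge_number\n00417,K1A 0B1,123456789012345678\n"

# The csv module does no type conversion: every field is read as a string.
row = next(csv.DictReader(io.StringIO(raw)))
print(row["employee_id"])   # 00417
print(row["postal_code"])   # K1A 0B1

# Roughly what automatic number detection does to the same values:
print(int(row["employee_id"]))     # 417  (leading zeros lost)
print(float(row["badge_number"]))  # 1.2345678901234568e+17  (precision lost)
```

The takeaway matches the table. Keep identifiers as strings end to end. When you open the file in a spreadsheet, use its import options to set those columns to text.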

Row misalignment and broken quotes

If one row suddenly pushes data into the wrong columns, look for an unescaped comma or quote inside a text field. This often happens in free-text columns such as job title, notes, or location name.

The cleanest fix is upstream. Ensure the export process wraps text values correctly in double quotes and escapes embedded quotes where needed. If you only patch the damaged row manually, the file may import today but fail again on the next export.
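A quick structural check catches this before the file travels further. The hypothetical `find_ragged_rows` helper below compares each record's field count with the header. The sample shows one comma that is correctly quoted and one that isn't.

```python
import csv
import io


def find_ragged_rows(text: str, delimiter: str = ",") -> list[tuple[int, int]]:
    """Return (record_number, field_count) for records whose width differs
    from the header. Records are counted with the header as 1; blank lines
    show up with a count of 0."""
    rows = list(csv.reader(io.StringIO(text), delimiter=delimiter))
    if not rows:
        return []
    expected = len(rows[0])
    return [(n, len(r)) for n, r in enumerate(rows[1:], start=2) if len(r) != expected]


sample = (
    "learner_id,job_title,province\n"
    '00417,"Manager, Retail",ON\n'  # quoted comma: stays inside one field
    "00982,Lead, Training,QC\n"     # unquoted comma: one extra column
)
print(find_ragged_rows(sample))  # [(3, 4)]
```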

Formula injection warnings

Some CSV files trigger spreadsheet warnings because a text field begins with characters that a spreadsheet interprets as a formula. That can happen if a learner name, note, or imported source value starts with symbols that spreadsheet software treats specially.

Open the file in a plain text editor before sending it broadly. A spreadsheet app shows presentation. A text editor shows the file you actually exported.

For operational teams, the best practice is simple:

Inspect a sample in a text editor such as Notepad++

Review unexpected leading symbols in free-text fields

Avoid casual re-saving in spreadsheet software before validation

Use a validation step when the export feeds another system
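For the validation step, one widely used approach comes from OWASP's CSV injection guidance. Flag cells that start with `=`, `+`, `-`, `@`, a tab, or a carriage return. Where appropriate, prefix them with a single quote so spreadsheets display them as text. The helpers below are a hypothetical sketch. Note that `-` also matches legitimate values such as negative numbers, so reviewing flagged cells is safer than rewriting every column blindly.

```python
# Leading characters that spreadsheet apps may interpret as a formula
# (list as given in OWASP's CSV injection guidance).
FORMULA_PREFIXES = ("=", "+", "-", "@", "\t", "\r")


def flag_formula_cells(rows):
    """Return (row, column, value) for every cell with a risky first character."""
    return [
        (r, c, value)
        for r, row in enumerate(rows, start=1)
        for c, value in enumerate(row, start=1)
        if value.startswith(FORMULA_PREFIXES)
    ]


def neutralise(value: str) -> str:
    """Prefix a risky value with a single quote so it displays as plain text."""
    return "'" + value if value.startswith(FORMULA_PREFIXES) else value


rows = [
    ["learner", "note"],
    ["Marie Gagnon", '=HYPERLINK("http://example.com","Cliquez ici")'],
    ["Luc Martin", "Completed on time"],
]
for r, c, value in flag_formula_cells(rows):
    print(r, c, neutralise(value))
```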

A practical triage order

When a CSV looks wrong, check in this order:

Encoding

Delimiter

Text qualifiers

Column data types on import

Spreadsheet auto-formatting behaviour

That order saves time because many “bad data” complaints are really file interpretation issues. Once you know where to look, most fixes are routine.

Security and Compliance for Learner Data Exports

A learner CSV file may look harmless because it's familiar. It's still a data handling event.

The moment names, email addresses, employee IDs, assessment results, or progress records leave the platform and become a downloadable file, someone takes custody of that data. For Canadian organisations, that changes the conversation from reporting convenience to accountability.

Export only what the task requires

The safest CSV is the smallest one that still gets the job done.

If you're preparing a trend report for course completion by region, you probably don't need personal email addresses. If you're reviewing overdue training counts, you may not need to export assessment-level detail. Data minimisation is practical, not theoretical. Smaller files are easier to review, easier to protect, and less likely to be mishandled.
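When the export passes through a script, an allowlist makes minimisation the default rather than a manual step. This sketch uses `csv.DictWriter` with `extrasaction="ignore"`, which drops any key not listed in `fieldnames`. The report fields and the sample record are hypothetical.

```python
import csv
import io

# Hypothetical allowlist for a completion-trend report by region.
TREND_REPORT_FIELDS = ["region", "course", "status", "completed_on"]


def minimised_csv(records, fields):
    buffer = io.StringIO()
    # extrasaction="ignore": columns outside the allowlist are silently dropped.
    writer = csv.DictWriter(buffer, fieldnames=fields,
                            extrasaction="ignore", lineterminator="\n")
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


records = [{
    "name": "Hélène Tremblay", "email": "helene@example.com",
    "region": "ON", "course": "Onboarding",
    "status": "completed", "completed_on": "2025-03-14",
}]
print(minimised_csv(records, TREND_REPORT_FIELDS))
# region,course,status,completed_on
# ON,Onboarding,completed,2025-03-14
```

An allowlist is safer than a blocklist. If someone later adds a sensitive column to the source, it stays out of the file until it is deliberately added to the list.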

According to the cited background reference on bulk CSV export privacy concerns, 75% of Canadian SMBs use eLearning, and 42% of HR directors cite data breach fears in manual CSV workflows. That makes automated bulk exports with PIPEDA-compliant anonymisation a critical but underexplored need.

That fear is justified. Manual exports often end up on local drives, email attachments, or shared folders with weak controls.

When anonymisation makes more sense than raw export

For many reporting tasks, identifiable learner data isn't required. Trend analysis, completion monitoring, and aggregate dashboards can often work with pseudonymised IDs instead of names and email addresses.

A practical approach is to separate exports into two categories:

Operational files that require identifiable records for direct follow-up

Analytical files that can use masked or pseudonymised learner identifiers

That split helps teams avoid exporting full PII by default.
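One common way to produce stable pseudonymised identifiers is a keyed hash (HMAC). The same learner always maps to the same token, so analytical files from different months can still be compared. A plain unkeyed hash of a short employee ID could be reversed by hashing every possible ID. A keyed hash can't be recomputed without the secret key, which should be stored separately from the exports. This is a sketch, not a compliance guarantee: pseudonymised data that can be linked back to a person may still count as personal information. The `L-` prefix and 16-character length are arbitrary choices.

```python
import hashlib
import hmac


def pseudonymise(learner_id: str, key: bytes) -> str:
    """Map a learner ID to a stable token using HMAC-SHA256."""
    digest = hmac.new(key, learner_id.encode("utf-8"), hashlib.sha256).hexdigest()
    return "L-" + digest[:16]  # arbitrary prefix and truncation length


# Illustrative only: load the real key from a secrets store, never hard-code it.
KEY = b"replace-with-a-secret-from-your-vault"

print(pseudonymise("00417", KEY))
print(pseudonymise("00417", KEY) == pseudonymise("00417", KEY))  # True
```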

Security habits that actually hold up

Policies matter, but day-to-day habits matter more. In regulated sectors, teams often benefit from broader software compliance perspectives, especially where training systems intersect with sensitive workforce records. For that reason, Cleffex's healthcare compliance insights are useful reading even outside healthcare, because they show how process design affects risk exposure.

A practical handling standard for learner exports should include:

Restricted field selection so only necessary columns are exported

Controlled storage location instead of downloads scattered across personal devices

Defined retention practice so temporary files aren't kept indefinitely

Repeatable export procedures so teams don't invent their own methods each time

Auditable automation where possible to reduce manual handling
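A retention practice is easier to keep when it runs on a schedule. This sketch deletes CSV files older than a set number of days from one controlled export folder. The folder, file names, and 30-day period are hypothetical; your actual retention period should come from your organisation's own policy. The sketch does not touch copies already emailed or synced elsewhere.

```python
import os
import tempfile
import time
from pathlib import Path
from typing import List, Optional

SECONDS_PER_DAY = 86_400


def purge_old_exports(directory: Path, max_age_days: int,
                      now: Optional[float] = None) -> List[Path]:
    """Delete *.csv files in `directory` last modified more than max_age_days ago."""
    now = time.time() if now is None else now
    cutoff = now - max_age_days * SECONDS_PER_DAY
    removed = []
    for path in directory.glob("*.csv"):
        if path.stat().st_mtime < cutoff:
            path.unlink()
            removed.append(path)
    return removed


with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)  # stands in for a hypothetical controlled export folder
    old = folder / "learner-progress_2025-01-05.csv"
    recent = folder / "learner-progress_2025-03-31.csv"
    old.write_text("learner_id\n00417\n")
    recent.write_text("learner_id\n00417\n")

    now = time.time()
    forty_days_ago = now - 40 * SECONDS_PER_DAY
    os.utime(old, (forty_days_ago, forty_days_ago))  # simulate an old export

    removed = [p.name for p in purge_old_exports(folder, max_age_days=30, now=now)]
    remaining = sorted(p.name for p in folder.glob("*.csv"))

print(removed)    # ['learner-progress_2025-01-05.csv']
print(remaining)  # ['learner-progress_2025-03-31.csv']
```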

The main security risk in CSV workflows usually isn't the file format. It's the human process around the file.

Teams that automate exports thoughtfully often improve security because the workflow becomes more predictable. Fewer ad hoc downloads. Fewer copies. Fewer opportunities for someone to forward the wrong file.

If your team wants a simpler way to build, track, and manage training data workflows without so much manual admin, Learniverse is worth a look. It helps training managers run scalable eLearning programmes with automation, learner analytics, and structured processes that make reporting easier to trust.