A training manager sees the same pattern every quarter. One group gets productive quickly. Another needs extra coaching. A third looks confident in class, then slows down the moment real work begins. The content hasn’t changed, the trainer hasn’t changed, but the outcomes keep moving.

That’s usually where costs start to drift. Schedules slip, managers add remedial sessions, and leaders start asking a hard question: why can’t we predict time to competence more accurately? The problem often isn’t effort. It’s that many teams still treat learning as a vague human process instead of a measurable operational one.

The idea behind the learning curves foundation is simple. People don’t improve at random. They improve in patterns. Those patterns can be observed, measured, and used to make better decisions about training design, staffing, support, and follow-up.

Good educators have always known this intuitively. The first few repetitions of a task tend to produce big gains. Later gains take more time. Some learners stall early because the practice sequence is wrong, not because they lack ability. Others need less instruction and more repetition. If you’ve ever tried to study smarter, not harder, you’ve already touched the same idea from the learner side.

For training leaders, the opportunity is bigger. A learning curve doesn’t just explain progress after the fact. It helps you forecast it. That changes how you plan onboarding, how you structure practice, and how you justify investment in analytics and automation. Teams trying to build a more systematic capability can start with practical guidance on learning in organisations, then layer in measurement.

Introduction: The Predictable Path to Mastery

Most training problems look like content problems at first.

A supervisor says new hires need “more confidence”. A compliance lead says the class should be “more engaging”. A sales enablement director says reps need “better retention”. Sometimes those observations are right. Often, though, the deeper issue is that nobody has mapped how performance changes over repeated practice.

Why unpredictability feels so expensive

Take a common onboarding task such as handling a customer handoff in a CRM. One learner struggles on the first few attempts, then suddenly becomes smooth and fast. Another appears strong early, then plateaus because they memorised steps without understanding the logic. If you only assess once at the end, both learners can look similar in the classroom and very different in production.

That’s why training budgets often creep up quietly. Teams add sessions, add content, and add checklists when what they really need is better visibility into the pattern of improvement.

Training feels expensive when leaders can’t tell the difference between a normal learning dip and a true design flaw.

What a learning curve gives you

A learning curve gives training managers a working model of skill acquisition. Instead of asking, “Did the learner pass?” you ask more useful questions:

How fast is performance improving: Are learners getting quicker or more accurate with each repetition?

Where does progress slow down: Does the stall happen after the first exposure, after guided practice, or after transfer to live work?

Which tasks improve predictably: Some tasks respond well to repetition. Others need coaching, feedback, or scenario variety.

What should happen next: If the curve is flattening too early, the design needs adjustment.

That’s the practical value of the learning curves foundation. It turns vague impressions into observable signals. For peers in training and enablement, that matters because better signals lead to better intervention. You stop guessing who needs help, when reinforcement should happen, and how much practice a role really requires.

What Is a Learning Curve? The Core Concept Explained

A learning curve shows how performance changes as someone gains experience with a task.

Think about teaching someone to use a new set of keyboard shortcuts. The first attempts are slow because they’re searching, thinking, and correcting mistakes. After a handful of repetitions, speed improves quickly. Later, improvement still happens, but not at the same pace. The person is refining, not discovering.

That shape matters. Early gains are usually larger because learners eliminate obvious friction first. As they get better, each additional gain becomes harder to earn.

The simplest way to picture it

A useful analogy is driving a familiar route.

On day one, you notice every sign and second-guess each turn. After several trips, you stop spending mental energy on basics. You drive more smoothly because the route has become structured in memory. The route didn’t change. Your processing did.

That’s what a learning curve captures. It makes visible the relationship between repetition and performance.

Core idea: learning usually improves quickly at first, then more gradually as the learner approaches reliable competence.

The 80 percent benchmark

A widely used benchmark makes the concept concrete. The 80 percent learning curve is considered a standard benchmark in many industries. It means that each time cumulative output doubles, the time required to complete each unit decreases by 20 percent, according to the PMI discussion of the 80 percent learning curve.

The classic example is easy to understand:

First unit: takes 10 minutes

Second unit: expected to take 8 minutes

Fourth unit: expected to take 6.4 minutes

That doesn’t mean every learner or every task follows the pattern exactly. It means the benchmark gives training managers a disciplined starting point for estimating practice time and improvement.
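The benchmark arithmetic extends to any number of repetitions. The Python sketch below assumes the unit-time form of the curve, T(n) = T(1) × n^b with b = log2(0.8), roughly -0.322, so each doubling of n multiplies unit time by 0.8. The function names are illustrative, not taken from the PMI source. Wright’s original formulation applies the same rate to cumulative average time rather than unit time, so check which form a benchmark assumes before comparing numbers.

```python
import math


def unit_time(first_unit_minutes: float, n: int, learning_rate: float = 0.8) -> float:
    """Expected time for the nth repetition under a unit-time learning curve.

    Each doubling of cumulative output multiplies unit time by learning_rate,
    so T(n) = T(1) * n ** log2(learning_rate).
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    exponent = math.log2(learning_rate)  # about -0.322 for an 80 percent curve
    return first_unit_minutes * n ** exponent


def total_practice_time(first_unit_minutes: float, units: int, learning_rate: float = 0.8) -> float:
    """Sum of expected unit times for the first `units` repetitions."""
    return sum(unit_time(first_unit_minutes, n, learning_rate) for n in range(1, units + 1))


if __name__ == "__main__":
    for n in (1, 2, 4, 8):
        print(f"unit {n}: {unit_time(10, n):.2f} min")
    print(f"first 8 units in total: {total_practice_time(10, 8):.1f} min")
```

Running it prints 10.00, 8.00, 6.40 and 5.12 minutes for units 1, 2, 4 and 8, matching the figures above and extending them one more doubling. The total is a rough estimate of how much practice time to schedule for that many repetitions.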

Where readers often get confused

Many people hear “learning curve” and assume it means difficulty. In everyday speech, someone says a tool has a “steep learning curve” when it’s hard to learn. In training analysis, the curve isn’t a label for difficulty. It’s a description of how performance changes over time.

Another common confusion is assuming progress should be linear. It usually isn’t. If a learner improves a lot in the first few repetitions and then only a little later, that doesn’t mean training has failed. It means the easy gains have already been captured.

Why this matters for training managers

If you understand the curve, you stop designing programmes as though every session contributes equally. Introductory practice often creates large returns. Advanced refinement often needs narrower feedback and more realistic scenarios.

That changes decisions such as:

Session length: A long first session may create fatigue instead of useful repetition.

Practice spacing: Some tasks benefit from repeated short cycles rather than one large workshop.

Coaching focus: Early correction targets basic errors. Later correction targets consistency and judgement.

A learning curve is not abstract theory. It’s a planning tool. It helps you estimate how much practice a task needs, what “normal” improvement looks like, and when to intervene because the pattern no longer makes sense.

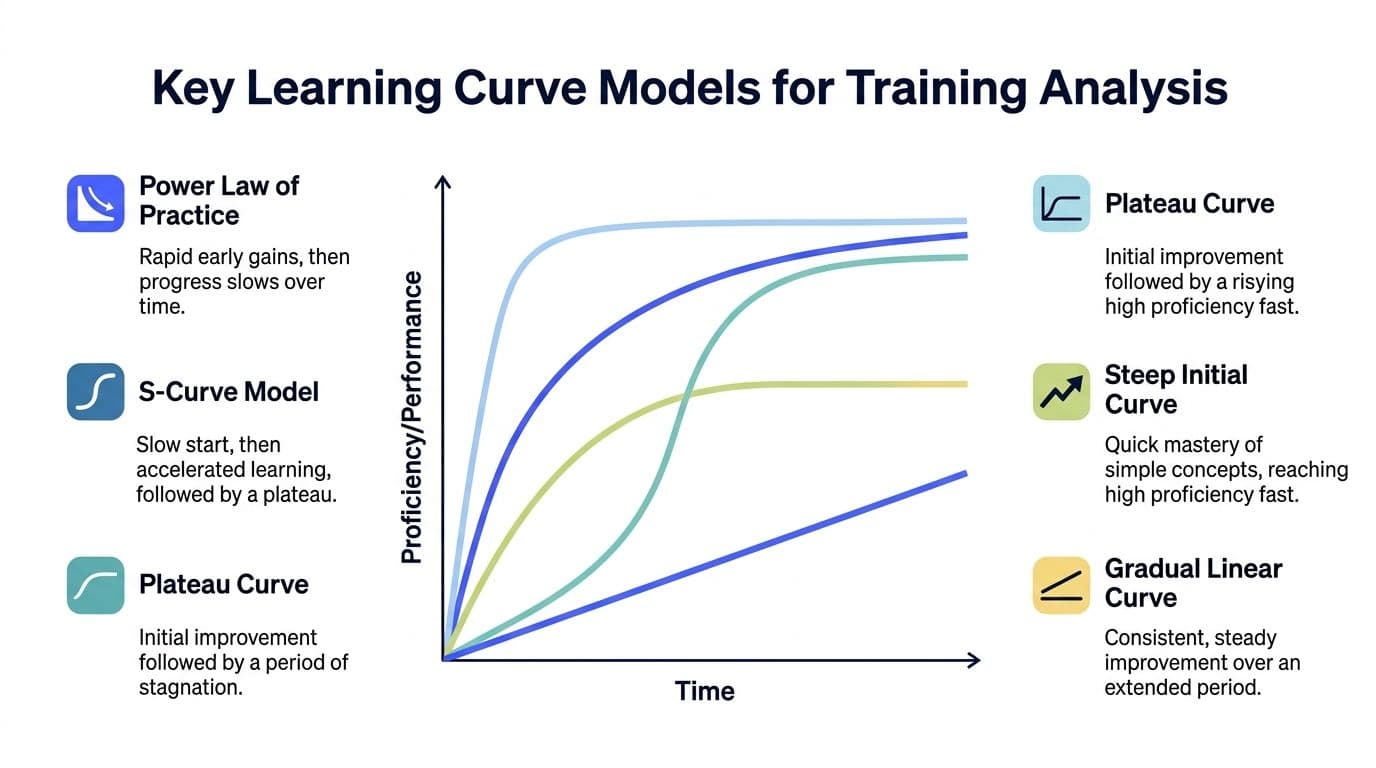

Key Learning Curve Models for Training Analysis

Not every skill develops in the same shape.

That’s where training leaders often oversimplify the learning curves foundation. They assume one curve fits every context. It doesn’t. The pattern for entering invoice data isn’t the same as the pattern for coaching a customer through an escalated service issue. Repetitive tasks, conceptual tasks, and judgement-heavy tasks often improve in different ways.

Three models training managers use most

In day-to-day practice, three curve types are especially useful as mental models.

Power law

This is the classic pattern for repetitive tasks. Learners improve quickly at first, then gains taper off. It suits tasks where repetition removes friction, such as data entry, equipment setup, or using a standard script.

The practical signal is clear. If performance isn’t improving early on a highly repeatable task, the issue may be unclear instruction, poor workflow design, or weak feedback.

Exponential model

This model also shows early rapid improvement, but it’s often used when performance moves quickly toward a limit. It can fit simpler knowledge or process tasks where learners grasp the essentials fast and then make smaller refinements.

For managers, this model is useful when designing short practical modules. You may not need a long programme if the skill can be learned quickly and maintained with occasional refreshers.

S-shaped curve

This one matters for complex skills. Progress starts slowly because learners are orienting themselves. Then capability accelerates once pieces begin to connect. Later, performance levels off as mastery approaches.

This is common in leadership conversations, technical troubleshooting, and scenario-based judgement. Early slowness in these cases doesn’t always indicate poor training. Sometimes the learner is still building the mental model needed for later acceleration.

A comparison you can use in planning

| Model | Curve Shape | Best For | Practical Implication |
|---|---|---|---|
| Power Law | Fast early improvement, then gradual slowing | Repetitive operational tasks | Use frequent repetitions and track time or error reduction closely |
| Exponential Model | Sharp improvement toward a stable limit | Simple procedures and fast-to-learn tools | Keep modules lean and avoid overbuilding the course |
| S-Shaped Curve | Slow start, faster middle phase, eventual plateau | Complex judgement and layered skills | Don’t overreact to slow early progress if deeper understanding is still forming |
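To see how the three shapes differ, the sketch below generates illustrative values for each. It assumes a power law for task time, an exponential decay toward a fixed floor for task time, and a logistic function for the S-shaped curve on a proficiency score. Every parameter value is a made-up example, not a benchmark.

```python
import math


def power_law(n: int, first: float, exponent: float) -> float:
    """Task time for attempt n: fast early gains that keep shrinking."""
    return first * n ** -exponent


def exponential(n: int, start: float, floor: float, rate: float) -> float:
    """Task time that decays quickly toward a stable floor it never beats."""
    return floor + (start - floor) * math.exp(-rate * (n - 1))


def s_curve(n: int, ceiling: float, midpoint: float, steepness: float) -> float:
    """Proficiency score on a logistic curve: slow start, fast middle, plateau."""
    return ceiling / (1 + math.exp(-steepness * (n - midpoint)))


if __name__ == "__main__":
    print("attempt  power-law(min)  exponential(min)  s-curve(score)")
    for n in range(1, 11):
        print(
            f"{n:>7}  {power_law(n, 10, 0.32):>14.2f}  "
            f"{exponential(n, 10, 5, 0.6):>16.2f}  {s_curve(n, 100, 5, 1.2):>14.1f}"
        )
```

The power-law and exponential columns fall (lower time is better), while the S-curve column rises slowly, jumps around the midpoint attempt, then levels off near its ceiling.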

Where the ASY model helps

When you want more than a visual pattern, the ASY model adds structure. It uses three parameters: Amplitude, Survival, and Asymptote, as described in CNA’s overview of statistical methods for learning curves.

Here’s the plain-language version:

Amplitude: how much improvement is available

Survival: how quickly improvement happens

Asymptote: the likely performance ceiling

That framing is useful because it separates three issues that often get mixed together. A learner may have a high ceiling but improve slowly. Another may improve quickly but level off at a moderate standard. Those are different design problems.

CNA’s discussion also notes that some complex tasks may require 6 to 7 years of practice to reach 99 percent proficiency. That’s a helpful reminder for any manager who expects advanced professional judgement to appear after a short onboarding cycle.
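One way to express the ASY framing in code is sketched below. The functional form is an assumption for illustration: score(n) = asymptote - amplitude × survival^(n-1), where survival is the share of the remaining gap left after each attempt. CNA’s own parameterisation may differ, and attempts_to_close_gap reads "99 percent proficiency" as 99 percent of the available improvement, which is also an assumption.

```python
import math


def asy_score(attempt: int, amplitude: float, survival: float, asymptote: float) -> float:
    """Score on a 1-based attempt under an assumed ASY-style curve.

    asymptote: the performance ceiling.
    amplitude: improvement available between the first attempt and the ceiling.
    survival:  share of the remaining gap still left after each attempt
               (0 < survival < 1; lower values mean faster improvement).
    """
    return asymptote - amplitude * survival ** (attempt - 1)


def attempts_to_close_gap(fraction: float, survival: float) -> int:
    """First attempt by which `fraction` of the available improvement is realised."""
    if not (0 < fraction < 1 and 0 < survival < 1):
        raise ValueError("fraction and survival must both lie strictly between 0 and 1")
    # Solve 1 - survival ** (attempt - 1) >= fraction for the smallest whole attempt.
    return 1 + math.ceil(math.log(1 - fraction) / math.log(survival))


if __name__ == "__main__":
    for survival in (0.7, 0.9):
        print(survival, attempts_to_close_gap(0.99, survival))
```

With survival at 0.7, attempts_to_close_gap(0.99, 0.7) returns 14; at 0.9 it returns 45. Same ceiling, same amplitude, very different timelines, which is why rate and ceiling deserve separate design conversations.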

If a complex skill shows a slow start, don’t rush to rewrite the curriculum. First ask whether the skill naturally follows an S-shaped path.

Choosing the right model without overcomplicating it

You don’t need a data science team to use these models sensibly. Start with the nature of the task.

Ask:

Is the task repetitive or variable: Repetitive tasks often resemble a power law.

Can the basics be learned quickly: If yes, an exponential pattern may fit.

Does competence depend on integrating multiple sub-skills: If yes, expect something closer to an S-curve.

Use the model as a lens, not as a rigid rule. The aim is better judgement. When the expected shape and the actual shape don’t match, that’s where design insight begins.

Measuring and Interpreting Your Team's Learning Curve

Many teams already have enough data to begin. They just don’t organise it as a learning curve.

A call centre may have handling time, first-pass resolution, and quality scores. A field operations team may have task completion time, rework frequency, and supervisor sign-off results. An onboarding programme may have quiz attempts, scenario ratings, and time to independent work. The challenge isn’t always data collection. It’s knowing what to watch, when to watch it, and how to interpret the pattern.

A practical four-step method

1. Pick one performance metric first

Choose a metric that connects to real work. Good options include time to complete a task, error rate, quality rating, or successful completion without assistance.

Don’t start with ten metrics. Start with one that matters to the business and that learners can influence directly.

2. Track the metric across repeated attempts

A learning curve needs sequence, not just snapshots. You want to see what happens across attempts, sessions, or production cycles.

For example, if you’re training customer support staff on a new ticket workflow, track the same measure over repeated use. If you only compare day one to week four, you’ll miss the shape between them.
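In practice, this step usually means reshaping a flat activity log into ordered sequences. The sketch below assumes each record carries a learner ID, a task type, an attempt number and one metric (minutes here). The Attempt record and build_sequences helper are hypothetical names, not part of any particular LMS export.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Attempt:
    learner: str
    task: str          # one curve per task type keeps the definition tight
    attempt_no: int    # 1-based sequence number for this learner and task
    minutes: float     # the single metric chosen in step 1


def build_sequences(log: list[Attempt]) -> dict[tuple[str, str], list[float]]:
    """Group raw attempt records into ordered per-learner, per-task sequences."""
    grouped: dict[tuple[str, str], list[Attempt]] = defaultdict(list)
    for record in log:
        grouped[(record.learner, record.task)].append(record)
    return {
        key: [a.minutes for a in sorted(records, key=lambda a: a.attempt_no)]
        for key, records in grouped.items()
    }


if __name__ == "__main__":
    log = [
        Attempt("ana", "ticket-workflow", 2, 8.0),
        Attempt("ana", "ticket-workflow", 1, 10.0),
        Attempt("ben", "ticket-workflow", 1, 12.0),
    ]
    print(build_sequences(log))
```

Records arriving out of order are sorted by attempt number, so each sequence is ready to plot in step 3.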

3. Plot the pattern visually

A basic line chart is enough to start. Put attempts or time periods on one axis and performance on the other.

Many leaders overcomplicate this step. You don’t need advanced modelling to get value. A simple chart can reveal whether the team is improving steadily, improving in bursts, or flattening too early. If you’re building a reporting habit, a dedicated training analytics dashboard can make the pattern easier to monitor over time.

4. Interpret the shape before changing the course

Many teams move too fast. They see a plateau and immediately add more content. Sometimes the content is fine and the practice design is weak. Sometimes the metric is noisy because the environment changed.

Look at the curve and ask what story it tells.
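Once the chart exists, a single number can summarise how fast a learner or cohort is improving. The sketch below assumes the power-law shape, estimates its exponent with ordinary least squares on log-log data, then converts it to a per-doubling rate comparable with the 80 percent benchmark. A rate close to 1.0 means almost no improvement per doubling. fit_learning_rate is a hypothetical helper, and the estimate only means something when the task really behaves like a power law.

```python
import math


def fit_learning_rate(times: list[float]) -> float:
    """Estimate the per-doubling learning rate from a sequence of positive task times.

    Fits log(time) = log(T1) + b * log(attempt) by ordinary least squares and
    returns 2 ** b; a result of 0.8 would match the 80 percent benchmark.
    """
    if len(times) < 3:
        raise ValueError("need at least three attempts to estimate a trend")
    xs = [math.log(n) for n in range(1, len(times) + 1)]
    ys = [math.log(t) for t in times]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    slope = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys)) / sum(
        (x - x_mean) ** 2 for x in xs
    )
    return 2 ** slope


if __name__ == "__main__":
    observed = [10.0, 8.3, 7.4, 6.9, 6.5, 6.2]
    print(f"estimated learning rate: {fit_learning_rate(observed):.2f}")
```

Comparing the fitted rate across cohorts, or against the rate a task historically shows, is often a quicker first check than eyeballing several charts.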

What different shapes usually mean

Steep early improvement: Learners are absorbing basics quickly. Keep practice going so performance stabilises.

Slow but steady growth: The task may be more complex than expected, or coaching may be helping incrementally.

Early plateau: Learners may have memorised the surface steps and now need varied practice or clearer feedback.

Erratic swings: External conditions may be affecting the data, or learners may not be practising the same task consistently.
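These descriptions can be turned into a rough first-pass screen. The sketch below labels a sequence of task times by comparing average step-to-step improvement early and late in the sequence, and by counting how often the direction of change flips. All thresholds (10 percent for steep, 2 percent for flat, more than three flips for erratic) are hypothetical defaults to tune against your own data, and whether a plateau counts as early still depends on the curve you expect for that task.

```python
def relative_gains(times: list[float]) -> list[float]:
    """Fractional improvement from each attempt to the next (positive means faster)."""
    return [(prev - cur) / prev for prev, cur in zip(times, times[1:])]


def describe_shape(times: list[float], window: int = 3, steep: float = 0.10,
                   flat: float = 0.02, max_flips: int = 3) -> str:
    """Give a rough label to a sequence of task times; thresholds are illustrative."""
    gains = relative_gains(times)
    if len(gains) < 2 * window:
        return "not enough attempts yet"
    # Count switches between improving and not improving from one step to the next.
    flips = sum(1 for a, b in zip(gains, gains[1:]) if (a > 0) != (b > 0))
    if flips > max_flips:
        return "erratic swings"
    early = sum(gains[:window]) / window
    recent = sum(gains[-window:]) / window
    if recent < flat:
        return "plateau"
    if early >= steep:
        return "steep early improvement"
    return "slow but steady growth"


if __name__ == "__main__":
    print(describe_shape([10, 8, 7, 7, 7, 7, 7]))
```

describe_shape([10, 8, 7, 7, 7, 7, 7]) returns "plateau", while a clean 80 percent curve over eight attempts returns "steep early improvement". Treat the label as a prompt to look closer, not as a verdict.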

Field note: a plateau isn’t always a learner problem. It’s often a signal that the design has stopped creating useful challenge.

How to deal with noisy data

Real-world training data is messy. Workloads vary. Managers coach differently. Some learners get easier cases. Others practise less often. If you ignore that, you can misread the curve.

A few practical safeguards help:

Keep the task definition tight: Measure the same kind of task each time where possible.

Separate learning from workload shocks: A sudden process change can distort the curve.

Review groups and individuals: Group trends matter, but individual curves often reveal design problems sooner.

Pair numbers with observations: Supervisor notes can explain why a line moved unexpectedly.
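For noisy operational data, a short rolling median is a reasonable middle ground: it damps a one-off hard case without averaging the learning shape away. The sketch below assumes a trailing window of three attempts, an illustrative choice. Apply it per learner and per task type so different tasks are never blended into one curve.

```python
from statistics import median


def rolling_median(values: list[float], window: int = 3) -> list[float]:
    """Trailing rolling median of a learner's metric sequence.

    A median over a short window is less sensitive to a single unusual case than a
    mean, and keeping the window small preserves the underlying learning shape.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [median(values[max(0, i - window + 1): i + 1]) for i in range(len(values))]


if __name__ == "__main__":
    # Attempt 2 was an unusually hard case; the smoothed series shows the trend.
    print(rolling_median([10, 30, 8, 7, 6]))
```

The spike on attempt 2 still nudges the early values, but from attempt 3 onward the smoothed series follows the learner’s real improvement.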

What not to do

Don’t average everything so heavily that the underlying pattern disappears. Don’t compare learners working on different task types as though they share the same curve. And don’t treat every dip as failure. Many curves include temporary dips when learners move from guided practice to live performance.

A useful measurement habit is to ask one question every review cycle: is the learner improving at the rate this task should reasonably allow? That keeps the focus where it belongs, on observable progress and design fit.
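That review question can be made concrete by comparing each attempt with an expected curve. The sketch below assumes the 80 percent unit-time benchmark, anchors the expectation on the learner’s own first attempt, and flags attempts more than 15 percent slower than expected. The function name, the anchoring choice and the tolerance are all hypothetical and should be adjusted to the task.

```python
import math


def flag_slow_attempts(times: list[float], learning_rate: float = 0.8,
                       tolerance: float = 0.15) -> list[int]:
    """Return 1-based attempt numbers where the learner is well behind the expected curve."""
    if not times:
        return []
    exponent = math.log2(learning_rate)
    flagged = []
    for n, actual in enumerate(times, start=1):
        expected = times[0] * n ** exponent  # expectation anchored on attempt 1
        if actual > expected * (1 + tolerance):
            flagged.append(n)
    return flagged


if __name__ == "__main__":
    print(flag_slow_attempts([10, 8.2, 8.5, 8.0, 7.9]))
```

flag_slow_attempts([10, 8.2, 8.5, 8.0, 7.9]) returns [3, 4, 5]: the learner kept pace on attempt 2, then stalled while the expected curve kept falling. Anchoring on the first attempt keeps the check fair to learners who start slower, at the cost of trusting one noisy data point.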

Applying Learning Curves to Smarter Course Design

Good measurement should change design choices.

If a learning curve only lives in a dashboard, it becomes another report no one uses. Value appears when instructional designers, trainers, and operations leaders use the pattern to shape pacing, support, goals, and reinforcement.

Match pacing to the rhythm of improvement

Some courses overload the front end. They deliver concepts, exceptions, and edge cases before learners can perform the basics smoothly. That creates the illusion of coverage without creating fluency.

Learning curve data helps you pace differently. If early repetitions produce strong gains, build short practice loops into the opening phase. If improvement stalls when complexity rises, insert support where the curve weakens rather than adding content everywhere.

A practical design adjustment might be to reduce lecture time and increase tightly guided reps on the first critical workflow. Another might be to delay advanced exceptions until the base task is stable.

Set realistic goals and timelines

Managers often ask for target dates before anyone has measured actual progression. That’s risky. A learning curve gives you a more defensible timeline.

Instead of promising that all learners will be fully productive by a fixed date, you can define expected movement by stage. For example, the first phase may focus on speed and accuracy in a controlled setting. The next may focus on transfer under live conditions. Those milestones are more credible than one blanket deadline.

Find support needs early

Two learners can finish the same module with similar scores and still have very different trajectories. One may improve consistently. Another may spike early and then flatten.

That’s why the learning curves foundation is so useful for intervention. It doesn’t just tell you who is behind. It tells you who is drifting toward risk before final performance drops.

Use curve patterns to identify:

Learners who need extra repetition: They improve, but only with more cycles.

Learners who need conceptual coaching: They can copy steps but can’t adapt.

Learners ready for stretch tasks: Their curve shows stable performance sooner.

Design for retention, not just completion

This is the part many programmes miss. Initial improvement doesn’t guarantee lasting performance.

In regulated CA industries such as delivery fleets, foundational training outcomes can weaken without reinforcement. According to the cited discussion on cornering and traction management, 42% of course completers initially retain safe habits, but that figure can drop to 25% within 12 months in high-regulation sectors because skill decay goes untracked.

That finding matters far beyond fleet training. It shows why course design shouldn’t end at completion. If you want ROI, you need a reinforcement model.

Completion tells you what happened at the end of training. Retention tells you whether training changed work.

Use the curve to shape reinforcement

A few design moves follow naturally:

Schedule refreshers by risk, not tradition: High-risk tasks need earlier follow-up when decay is likely.

Use micro-assessments after the course: Short checks can reveal whether performance is holding.

Recreate critical scenarios: Transfer improves when learners practise in conditions closer to live work.

Track post-course behaviour: Especially in compliance-heavy settings, the curve after training can matter more than the curve during training.
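The retention figures cited above can inform refresher timing, with one big caveat: they give only two points, 42 percent at completion and 25 percent at 12 months. The sketch below assumes smooth exponential decay between them, which is an illustration rather than a finding, and estimates when retention would fall to a chosen threshold. The 35 percent threshold in the usage note is hypothetical.

```python
import math

INITIAL_RETENTION = 0.42       # share retaining safe habits at completion (cited figure)
RETENTION_AT_12_MONTHS = 0.25  # share still retaining them after 12 months (cited figure)


def retention(month: float) -> float:
    """Assumed exponential decay curve passing through both cited data points."""
    monthly_factor = (RETENTION_AT_12_MONTHS / INITIAL_RETENTION) ** (1 / 12)
    return INITIAL_RETENTION * monthly_factor ** month


def months_until(threshold: float) -> float:
    """Months after completion when retention is expected to fall to `threshold`."""
    if not 0 < threshold <= INITIAL_RETENTION:
        raise ValueError("threshold must be positive and no higher than initial retention")
    return 12 * math.log(threshold / INITIAL_RETENTION) / math.log(
        RETENTION_AT_12_MONTHS / INITIAL_RETENTION
    )


if __name__ == "__main__":
    print(f"schedule a refresher before month {months_until(0.35):.1f}")
```

Under these assumptions, months_until(0.35) comes out at about 4.2 months, which points to a first refresher well before a traditional annual cycle. If your own post-course checks show faster early decay, schedule earlier still.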

Budgeting becomes more credible

When you understand how long competence takes and where decay occurs, budget conversations improve. You can distinguish between upfront delivery cost and the ongoing cost of maintaining capability.

That gives you a stronger basis for ROI conversations. Instead of arguing that training is “important,” you can show where the design needs investment, where reinforcement prevents loss, and where manual follow-up can be replaced with targeted support.

For training managers, that’s one of the most practical outcomes of the learning curves foundation. It turns course design into an operational system rather than a one-time event.

Real-World Examples of Learning Curves in Action

Concepts stick when you see them in work people recognise.

Sales onboarding and the new CRM

A sales enablement lead rolls out a new CRM workflow. Early complaints focus on the interface. Reps say it’s clunky, managers say adoption is weak, and leadership wonders if the training was too light.

When the team reviews performance across repeated deals, the pattern tells a different story. Reps are getting faster on the basic record updates, but they stall when they need to log next-step actions consistently. The problem isn’t the entire system. It’s one part of the workflow where the training examples didn’t match live selling conditions.

The team changes the course design. They add scenario practice around messy handoffs and incomplete notes. Performance improves because the bottleneck becomes visible.

Assembly training on a production line

A plant supervisor notices that new operators differ widely in how long they take to complete the same assembly sequence. Some improve rapidly after a few runs. Others stay slow even after several shifts.

A simple learning curve review shows that the slower operators aren’t struggling with hand movement. They’re hesitating during inspection points because they don’t yet recognise acceptable variance. The fix isn’t more repetition of the whole sequence. It’s better visual examples and targeted coaching on the judgement step.

That distinction matters. Repetition helps when the issue is familiarity. It does less when the issue is interpretation.

Motorcycle foundation training in Ontario

A more specialised example comes from foundational motorcycle training. Standardised beginner courses are built for consistency, which makes sense operationally. But consistency can also hide individual weaknesses.

In Ontario, 28% of new riders fail foundational tests due to inconsistent curve handling, according to the cited riding strategy resource. That’s exactly the kind of problem where learning curve analysis becomes useful.

If an instructor only sees the final result, they know the rider struggled. If they track repeated attempts, they can see more. One learner may enter turns too fast, then improve with pacing cues. Another may understand entry speed but misjudge line selection under pressure. Those are different curves and different coaching needs.

The same resource notes that standardised courses overlook personalisation opportunities and that AI-driven adaptive learning could analyse rider errors in real time to adjust difficulty. For training leaders in any field, the lesson is broader than motorcycle safety. When a programme treats all learners as though they need the same sequence, it often hides where improvement breaks down.

The best learning curves don’t just tell you who failed. They show you what kind of practice would have helped earlier.

These examples all point to the same operational truth. Once you can see the pattern of improvement, decisions become more precise. You stop solving broad “training issues” and start fixing specific points of friction.

Automating Measurement and Optimization with AI

Manual tracking breaks down quickly.

A spreadsheet can handle a small pilot. It can’t easily keep up when you’re tracking repeated attempts across courses, teams, locations, and job roles. Someone has to pull data, clean it, plot it, interpret it, and follow up. That’s why many training teams understand the learning curves foundation in principle but never operationalise it at scale.

What automation changes

AI-enabled training systems make the process more practical by handling routine measurement tasks continuously. They can capture learner interactions, organise them by skill or module, and surface patterns that a human reviewer might miss until much later.

That matters in three ways:

Collection becomes automatic: Practice attempts, quiz outcomes, and engagement signals can be logged without extra admin.

Curves update in near real time: Managers don’t have to wait for a monthly review to see that a cohort is flattening early.

Intervention becomes targeted: Instead of sending everyone the same refresher, the system can flag where support is needed.
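A continuously updated curve can be sketched as an event handler that records each attempt as it arrives and decides, per learner, whether support is needed. The CurveTracker class below is hypothetical, not the API of Learniverse or any other platform. It assumes task time as the metric and flags a learner when average improvement over the last three steps falls below 2 percent, an illustrative threshold.

```python
from collections import defaultdict


class CurveTracker:
    """Hypothetical sketch: keeps each learner's task times and flags flattening curves."""

    def __init__(self, window: int = 3, flat_threshold: float = 0.02) -> None:
        if window < 1:
            raise ValueError("window must be at least 1")
        self.window = window                  # number of recent steps to average
        self.flat_threshold = flat_threshold  # below this average gain, flag for support
        self.history: dict[str, list[float]] = defaultdict(list)

    def record(self, learner: str, minutes: float) -> bool:
        """Store one attempt; return True if this learner should get targeted support."""
        times = self.history[learner]
        times.append(minutes)
        if len(times) <= self.window:
            return False  # not enough attempts to judge the trend yet
        recent = times[-(self.window + 1):]
        gains = [(prev - cur) / prev for prev, cur in zip(recent, recent[1:])]
        return sum(gains) / len(gains) < self.flat_threshold


if __name__ == "__main__":
    tracker = CurveTracker()
    events = [("ana", 10), ("ben", 10), ("ana", 8), ("ben", 9.9),
              ("ana", 7), ("ben", 9.9), ("ana", 6.5), ("ben", 9.9)]
    for learner, minutes in events:
        if tracker.record(learner, minutes):
            print(f"flag {learner} for targeted support")
```

Only learners whose own curve has flattened come back True, so the refresher goes to them instead of the whole cohort.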

Teams exploring adjacent AI evaluation methods often find it useful to look at approaches such as AI-driven synthetic user testing workflows, because the logic is similar. You instrument behaviour, look for repeatable patterns, and use the signal to improve the experience faster.

The platform’s own AI has a learning curve too

There’s a helpful analogy here. In machine learning, the gap between a training curve and a validation curve reveals whether a model is learning to generalise or merely memorising patterns. For an AI like Learniverse, a model may learn to generate courses from 2,000 documents on the training side, but generalisation to new content is the true test, as discussed in this explanation of learning curves for diagnosing model performance.

The same logic applies to corporate training. A learner who performs well inside one familiar module may still struggle to apply the skill elsewhere. Strong systems monitor not just internal completion metrics, but also transfer signals and behavioural evidence. That’s where AI learning insights become operationally useful.
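The training-versus-validation idea translates directly into a transfer check for people. The sketch below assumes two hypothetical score sources on a 0 to 100 scale, one from the familiar module and one from observed live work, and flags learners whose live score trails their module score by more than 10 points. The gap threshold is illustrative.

```python
def transfer_gaps(in_module: dict[str, float], on_the_job: dict[str, float],
                  max_gap: float = 10.0) -> dict[str, float]:
    """Return learners whose live score trails their in-module score by more than max_gap.

    Mirrors the training-versus-validation gap in machine learning: a large gap
    suggests the skill was learned for the module rather than generalised.
    Learners without a live score yet are skipped, not guessed at.
    """
    return {
        learner: round(in_module[learner] - on_the_job[learner], 1)
        for learner in in_module.keys() & on_the_job.keys()
        if in_module[learner] - on_the_job[learner] > max_gap
    }


if __name__ == "__main__":
    module_scores = {"ana": 92, "ben": 88, "cho": 75}
    live_scores = {"ana": 90, "ben": 70}
    print(transfer_gaps(module_scores, live_scores))
```

With module scores of 92, 88 and 75 and live scores of 90 and 70 for the first two learners, only ben is flagged, with an 18-point gap; cho has no live data yet, so they are left out rather than scored.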


Why this matters for ROI

When measurement is automated, learning curves stop being a specialist analysis exercise and become part of daily training operations. Managers can see where learners speed up, where they stall, and where post-course reinforcement needs to happen.

That’s the bridge between theory and ROI. You’re not just delivering content. You’re building a system that observes performance, adapts support, and helps prove whether training changed outcomes in the first place.

If you want to put this into practice, Learniverse helps teams turn training materials into interactive learning experiences with built-in automation, analytics, and AI support. It’s a practical option for organisations that want to measure learning curves more consistently, reduce manual admin, and improve training outcomes with less guesswork.