Valuable insights from your training programs have a frustratingly short shelf life. It’s a phenomenon often called corporate amnesia. As soon as a session wraps up, all the brilliant feedback and hard-won lessons seem to vanish.

A lesson learned template is your most practical tool for stopping this cycle. It’s a simple, structured document for capturing, analyzing, and actually acting on feedback from any training, project, or business initiative. Think of it as the system that stops you from making the same mistakes twice and helps you scale your successes across the entire organization.

Stop Repeating Training Mistakes

Think about the last big training program you launched. You invested significant time, money, and energy. But what happened to all that gold—the real-time feedback from participants and facilitators?

For most organizations, the honest answer is "not much." That crucial knowledge gets buried in siloed survey results, lost in forgotten email chains, or just evaporates completely. This cycle of lost knowledge isn't just frustrating; it's costly, leading you to stumble over the same preventable hurdles. A dedicated lesson learned template is the most direct way to fix this.

Why Your Organization Needs a Formal Process

Moving from casual, anecdotal feedback to data-driven improvements requires a formal process. Without one, you're essentially relying on memory and hallway chatter to guide your most critical programs—a method that’s neither reliable nor scalable.

A lesson learned template provides that essential framework, ensuring you collect feedback consistently across every initiative. It creates a single source of truth that helps turn subjective opinions into objective, actionable insights.

The purpose of a lesson learned template isn't just to write down what happened. It’s to build a reliable feedback loop that fuels continuous improvement and prevents knowledge from walking out the door.

By making this process a formal part of your workflow, you give your team the power to:

Spot recurring patterns in training feedback, like a module that consistently confuses people or an activity that always gets rave reviews.

Create clear accountability by assigning concrete action items to specific people or teams for follow-up.

Build a knowledge base of what works and what doesn’t, dramatically speeding up the development of future training content.

Justify resource requests for training improvements with documented evidence of both the need and the potential impact.

Ultimately, a good template turns your training efforts from a series of one-off events into an intelligent, evolving system. And when you pair it with a tool like Learniverse, you can take it a step further. Imagine feeding these captured insights directly to an AI that automatically refines your courses—creating a powerful, automated cycle of feedback and improvement.

When to Use Your Lesson Learned Template

Having a great lesson learned template is one thing, but knowing the right time to use it is what truly makes a difference. The real magic happens when you capture feedback while the experience is still fresh in everyone’s mind.

This shouldn't be an afterthought or something you do only when a project goes off the rails. The best approach is to build these feedback sessions right into your project plans and operational rhythms. Think of it as a proactive tool for continuous improvement, not just a reactive measure.

At the End of New Hire Onboarding

A new hire’s first 90 days are a whirlwind. Once they’ve finished their formal onboarding program, you have a perfect window of opportunity. Their experience is vivid, and their feedback is invaluable for polishing the process for the next cohort.

Get recent hires, their managers, and the training facilitators in a room together. Your goal is to get feedback on specific, tangible parts of the program. To do this, ask direct questions like:

Content Clarity: Were there any modules that felt confusing? Was there any internal jargon that we forgot to explain?

Pacing and Flow: Did the schedule feel too rushed, or were there lulls where you felt you were waiting for the next step?

Tool and System Training: Do you feel confident using our core software after the training, or are there still gaps?

Cultural Integration: Did the program give you a real sense of our company values and how things actually get done around here?

Gathering these details allows you to make quick, targeted fixes that can improve retention and get new employees up to speed much faster. This feedback is valuable enough that it often feeds directly into a larger training needs analysis to guide future program development.

After a Major Compliance Training Push

Let’s be honest: company-wide compliance training can feel like a box-ticking exercise. But after rolling out a major module, like your annual data security or workplace safety training, you need to dig deeper than just completion rates.

Don't just ask if they completed the training; ask if it changed their understanding or behavior. Engagement and comprehension are the metrics that truly signal a return on investment for compliance efforts.

In this scenario, your lesson learned template should help you uncover the real story:

Engagement Levels: Were people actively engaged with the materials, or did they just click through as fast as possible?

Scenario Relevance: Did the examples and case studies feel realistic and connected to their everyday work?

Knowledge Gaps: Which quiz questions stumped the most people? This is a huge red flag pointing to content that needs to be clarified or reinforced.
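One way to find the questions that stumped the most people is to compute a miss rate per question from your quiz export. The sketch below is illustrative only: the row layout, the sample data, and the 50% threshold are assumptions, not features of any particular LMS.

```python
from collections import Counter

# Hypothetical quiz export: one (learner_id, question_id, answered_correctly) row per attempt.
responses = [
    ("u1", "Q1", True), ("u1", "Q2", False), ("u1", "Q3", True),
    ("u2", "Q1", True), ("u2", "Q2", False), ("u2", "Q3", False),
    ("u3", "Q1", True), ("u3", "Q2", True), ("u3", "Q3", False),
    ("u4", "Q1", False), ("u4", "Q2", False), ("u4", "Q3", True),
]


def miss_rates(rows):
    """Return {question_id: fraction of attempts answered incorrectly}."""
    attempts = Counter()
    misses = Counter()
    for _learner, question, correct in rows:
        attempts[question] += 1
        if not correct:
            misses[question] += 1
    return {q: misses[q] / attempts[q] for q in attempts}


def flag_knowledge_gaps(rows, threshold=0.5):
    """List questions whose miss rate is at or above the threshold, worst first."""
    flagged = [(q, r) for q, r in miss_rates(rows).items() if r >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)


gaps = flag_knowledge_gaps(responses)
for question, rate in gaps:
    print(f"{question}: missed by {rate:.0%} of learners")
```

Questions that land on this list are candidates for the "What Could Be Improved" field; the threshold is a judgment call you would tune to your own cohort sizes.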

Following a Key Project or Initiative

Every significant project (whether it's launching a new product, migrating to a new system, or wrapping up a major client campaign) is packed with learning opportunities. Running a post-project debrief with a structured template helps your team turn that hard-won knowledge into something the whole organization can use.

Hard data on how widely organizations have adopted this process is difficult to find outside niche industry reports, but the best practice itself is clear: formalize your debrief. Guides on lessons learned frameworks offer more ideas on how to structure these meetings. The key is to keep the focus on improving processes, collaboration, and resource planning, not on individual performance.

How to Capture Genuinely Actionable Insights

A template is just a tool. Its real power comes from the quality of the insights you capture. The goal isn’t just to check boxes; it’s about digging deep to uncover the kind of feedback that sparks real improvements in your training programs.

Let’s walk through how to transform surface-level comments into feedback that actually helps you get better. This is the heart of any solid continuous improvement loop.

Table: Template Field Breakdown and Guiding Questions

To get the most out of every lesson learned session, you need to ask the right questions. This table breaks down each field in our template and gives you effective prompts for eliciting specific, meaningful responses from participants.

| Template Field | Purpose | Example Guiding Questions |
| --- | --- | --- |
| Project/Training Name | To provide immediate, searchable context for the feedback. | What was the exact name of the session? Which cohort participated? When did it take place? |
| What Went Well | To identify successful elements that should be replicated or scaled. | What was the most valuable part of the training for you? Was there a specific moment when a concept "clicked"? Which resource or activity did you find most helpful? |
| What Could Be Improved | To pinpoint specific weaknesses and opportunities for iteration. | At what point did you feel disengaged? Was any content irrelevant to your role? What part of the training felt confusing or rushed? |
| Key Learnings | To synthesize feedback into high-level, strategic takeaways. | What's the single biggest theme from all the feedback? If you had to summarize the core lesson in one sentence, what would it be? |
| Action Items | To create a concrete, trackable plan for implementing changes. | What is the very next step we need to take? Who owns this task? What is a realistic deadline for getting this done? |

These questions are designed to move the conversation from vague feelings to concrete details, which is where the real value lies.
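If you store completed templates somewhere searchable, it can help to treat the five fields as a small data structure. The following is a minimal sketch, not a prescribed schema: the class and attribute names are hypothetical, the example entries are taken from this article, and the year on the due date is an assumption.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class ActionItem:
    task: str    # the very next step to take
    owner: str   # who owns the task
    due: date    # a realistic deadline


@dataclass
class LessonLearnedRecord:
    name: str  # Project/Training Name
    went_well: list[str] = field(default_factory=list)
    could_improve: list[str] = field(default_factory=list)
    key_learnings: list[str] = field(default_factory=list)
    action_items: list[ActionItem] = field(default_factory=list)

    def is_complete(self) -> bool:
        """True only when the name and every feedback field have at least one entry."""
        sections = [self.went_well, self.could_improve,
                    self.key_learnings, self.action_items]
        return bool(self.name.strip()) and all(sections)


record = LessonLearnedRecord(
    name="Q3 2026 New Hire Onboarding - Sales Cohort",
    went_well=["Role-playing scenarios for objection handling were realistic."],
    could_improve=["The systems module was all theory."],
    key_learnings=["New hires want more hands-on practice in systems training."],
    action_items=[
        ActionItem(
            task="Add a 30-minute interactive simulation to 'System Basics'.",
            owner="Jane Doe",
            due=date(2026, 10, 15),  # year assumed for illustration
        )
    ],
)
print(record.is_complete())
```

A completeness check like `is_complete` is one simple guard against filing a record that captures complaints but no follow-up.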

Set the Scene: Naming and Context Are Everything

Before you even think about feedback, get the first field right: Project/Training Name. I know it seems basic, but this is your foundation for a searchable database. Poorly named records get lost.

Be ruthlessly specific. Don't just write "Onboarding." Instead, use something like "Q3 2026 New Hire Onboarding - Sales Cohort." Instantly, anyone reading this knows the who, what, and when.

This level of detail is crucial. The lessons from a compliance course for warehouse staff will be wildly different from a leadership workshop for new managers. Good naming turns a folder of documents into a practical knowledge base. If you're building this out, our guide to knowledge management best practices has some great pointers on structuring these assets for the long haul.

Dig for Details: Balancing Positives and Negatives

This is where the real work begins, in the two core feedback columns: ‘What Went Well’ and ‘What Could Be Improved.’ Your job is to act like a detective and get past the easy, vague answers.

What Went Well

This column isn't just a pat on the back; it's your roadmap for what to protect and replicate. When someone says, "The training was good," don't let them off the hook.

Ask follow-up questions to get to the why:

"Which part did you find most engaging, and what made it stand out?"

"Was there a facilitator or a specific resource that made a difference?"

"Tell me about the moment a tricky subject finally made sense."

Actionable Insight Example: For a sales enablement session, a fantastic piece of feedback is: "The role-playing scenarios for objection handling were spot-on. They were realistic enough that I actually used the new framework on a call the next day." Now that's something you can build on.

What Could Be Improved

Here’s where you find your biggest growth opportunities. But you’ll only get honest feedback if you've created a space where people feel safe to be critical without fear of reprisal.

Guide them away from generic complaints like "It was boring."

Pinpoint the actual issue with targeted questions:

"Which section seemed to drag on the most?"

"Was there anything that felt like a waste of time for your specific job?"

"Can you remember the point where you started to tune out?"

Actionable Insight Example: For a compliance training, a truly useful insight would be: "The module on emergency protocols was all theory. We need a hands-on simulation to actually remember the sequence under pressure."

From Insight to Action: Synthesizing a Plan

The final two fields, ‘Key Learnings’ and ‘Action Items,’ are where you turn all this great feedback into a concrete plan. This is the step that separates a document that gets filed away forever from a living tool that drives improvement.

Key Learnings

This section is for looking at the big picture. Step back from the individual comments and look for the overarching themes. A Key Learning is the concise summary of the main insight.

Example Key Learning: "Our new hires are craving more hands-on practice in our systems training; they're getting lost in the theory."

Action Items

This is the most important part of the whole exercise. If your action items are fuzzy, you've wasted everyone's time. Every single one needs to be specific, measurable, assignable, relevant, and time-bound (SMART).

Vague Action: "Improve onboarding."

Strong Action: "Revise the Q4 2026 onboarding 'System Basics' module to include a 30-minute interactive simulation. Assigned to Jane Doe, due by October 15th."

A lesson isn't truly 'learned' until it leads to a change in what you do. Without a clear, owned action item, all you've done is document a problem.
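The difference between the vague and the strong action above can be checked mechanically, at least in part. The sketch below is a rough heuristic under stated assumptions: the function name is hypothetical, and the five-word minimum is an arbitrary stand-in for "specific", not a real measure of quality.

```python
from datetime import date
from typing import Optional


def action_item_problems(task: str, owner: Optional[str],
                         due: Optional[date], today: date) -> list[str]:
    """Return a list of reasons an action item is not yet actionable."""
    problems = []
    # Crude proxy for specificity: very short tasks are usually too vague.
    if len(task.split()) < 5:
        problems.append("task is too vague to act on")
    if not owner:
        problems.append("no owner assigned")
    if due is None:
        problems.append("no deadline")
    elif due < today:
        problems.append("deadline is in the past")
    return problems


today = date(2026, 9, 1)  # fixed date so the example is reproducible

vague = action_item_problems("Improve onboarding.", None, None, today)
strong = action_item_problems(
    "Revise the Q4 2026 onboarding 'System Basics' module to include "
    "a 30-minute interactive simulation.",
    "Jane Doe",
    date(2026, 10, 15),  # year assumed for illustration
    today,
)
print(vague)
print(strong)
```

A check like this will not tell you whether an action is the right one, only whether it has the owner, deadline, and minimum detail needed to be tracked.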

Turning Your Insights Into Better Training

Collecting lessons learned is one thing, but the real value comes when you use that feedback to make your training better. A folder full of completed templates is just a folder of good intentions. Without a clear process for analysis and action, all that valuable insight will simply gather dust.

To avoid analysis paralysis, you need a simple, repeatable framework to sort through the noise and pinpoint what really matters.

From Feedback to Focus

The best way to start is by looking at each piece of feedback through three simple lenses: theme, urgency, and impact. This quick categorization exercise helps you move from a jumble of individual comments to a clear, strategic overview.

Theme: Look for patterns. Are multiple people saying the compliance videos are too long? Do you see recurring praise for a specific onboarding activity? Grouping similar comments reveals your biggest opportunities and pain points.

Urgency: How quickly does this need fixing? A broken link in a pre-work module is high urgency. A suggestion to add more advanced content for future cohorts can probably wait.

Impact: What’s the potential payoff? Fixing a confusing quiz question might have a moderate impact on a few learners. Clarifying a core safety procedure, on the other hand, could have a massive impact on the entire organization.

Organizing feedback this way immediately clarifies your priorities. It shows you exactly where to put your energy to get the biggest return, preventing your team from getting bogged down in low-impact tweaks.

The goal of analysis is to find clarity. You aren’t just hunting for problems; you're looking for the most important problems to solve first. This structured approach turns a list of complaints into a strategic roadmap for improvement.
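The theme, urgency, and impact sort can be sketched in a few lines once each comment has been tagged. Everything here is an assumption made for illustration: the 1-to-3 scales, the sample comments, and the choice to rank themes by urgency multiplied by impact, with the number of mentions as a tie-breaker.

```python
from collections import defaultdict

# Hypothetical tagged feedback: (comment, theme, urgency 1-3, impact 1-3).
feedback = [
    ("Compliance videos are too long", "video length", 2, 2),
    ("I stopped watching the video halfway", "video length", 2, 2),
    ("Pre-work link is broken", "broken link", 3, 2),
    ("Quiz question 4 is confusing", "quiz wording", 1, 2),
    ("Safety procedure steps are unclear", "safety procedure", 3, 3),
]


def prioritize(items):
    """Group comments by theme, then rank themes by urgency x impact."""
    themes = defaultdict(lambda: {"count": 0, "urgency": 0, "impact": 0})
    for _comment, theme, urgency, impact in items:
        entry = themes[theme]
        entry["count"] += 1
        # Keep the highest rating any single comment gave the theme.
        entry["urgency"] = max(entry["urgency"], urgency)
        entry["impact"] = max(entry["impact"], impact)
    ranked = sorted(
        themes.items(),
        key=lambda kv: (kv[1]["urgency"] * kv[1]["impact"], kv[1]["count"]),
        reverse=True,
    )
    return [theme for theme, _ in ranked]


priorities = prioritize(feedback)
print(priorities)
```

The scoring rule matters less than applying the same rule every time, so that priorities across sessions are comparable.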

Prioritizing Your Action Items

Once you’ve grouped your feedback and identified the key themes, it’s time to get specific. This is where you translate those broad insights from the template into concrete to-dos. Vague goals don't get things done.

For example, if a major theme is "compliance videos are too long and boring," your action item shouldn't be "improve videos." Get granular.

Task: Break the 45-minute compliance video into three 15-minute microlearning modules.

Owner: Assign the task to the instructional design team lead.

Deadline: Set a clear completion date for the new modules.

Sometimes, feedback highlights a performance gap for a specific individual rather than a course-wide issue. In these cases, a formal performance improvement plan can provide the necessary structure for development. Hard data on how often such plans are used is difficult to find, but guides on general project management best practices are a useful starting point for a broader look.

Connecting Insights to Automation

Here’s where you can really accelerate the improvement cycle. Instead of just creating a to-do list, feed your key learnings directly into smart tools to automate the fixes.

Take that insight about long compliance videos. With a platform like Learniverse, you can use your completed lesson learned template as a creative brief for its AI.

You could simply prompt it: “Based on this feedback, rewrite our long compliance video script into a series of five engaging microlearning lessons, each with a short quiz at the end.”

This closes the loop between feedback and an improved learner experience almost instantly. The AI can generate new content, build out interactive elements, and get the updated course ready for deployment. What used to be a manual, time-consuming review process becomes a nimble, responsive system that keeps your training relevant and effective.

Automating Your Continuous Learning Cycle

A filled-out lessons learned template is a great start, but its real power is unlocked when you put it to work. The difference between teams that actually learn and those that just file away documents is turning this review into a consistent, automated habit. This is how you build a genuine cycle of continuous improvement.

For example, set up automated post-training surveys that feed directly into an analysis dashboard. Instead of spending hours manually compiling feedback, you get structured data ready for you to analyze. This flips the entire data collection process from a tedious chore into a simple, automated step, freeing you up to focus on the insights.
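As a rough illustration of that automated step, the sketch below turns a survey export into an average rating per module. The CSV layout, column names, and sample rows are all hypothetical; a real survey tool will have its own export format.

```python
import csv
import io
from collections import defaultdict
from statistics import mean

# Hypothetical survey export; the column names are assumptions.
SURVEY_CSV = """module,rating,comment
System Basics,2,Too much theory
System Basics,3,Needed hands-on practice
Company Values,5,Great stories
Company Values,4,Clear and engaging
"""


def summarize(csv_text):
    """Return {module: average rating} from a CSV survey export."""
    ratings = defaultdict(list)
    for row in csv.DictReader(io.StringIO(csv_text)):
        ratings[row["module"]].append(int(row["rating"]))
    return {module: mean(scores) for module, scores in ratings.items()}


summary = summarize(SURVEY_CSV)
for module, average in sorted(summary.items(), key=lambda kv: kv[1]):
    print(f"{module}: {average:.1f}")
```

Sorting by the lowest average first puts the modules most in need of attention at the top of the list.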

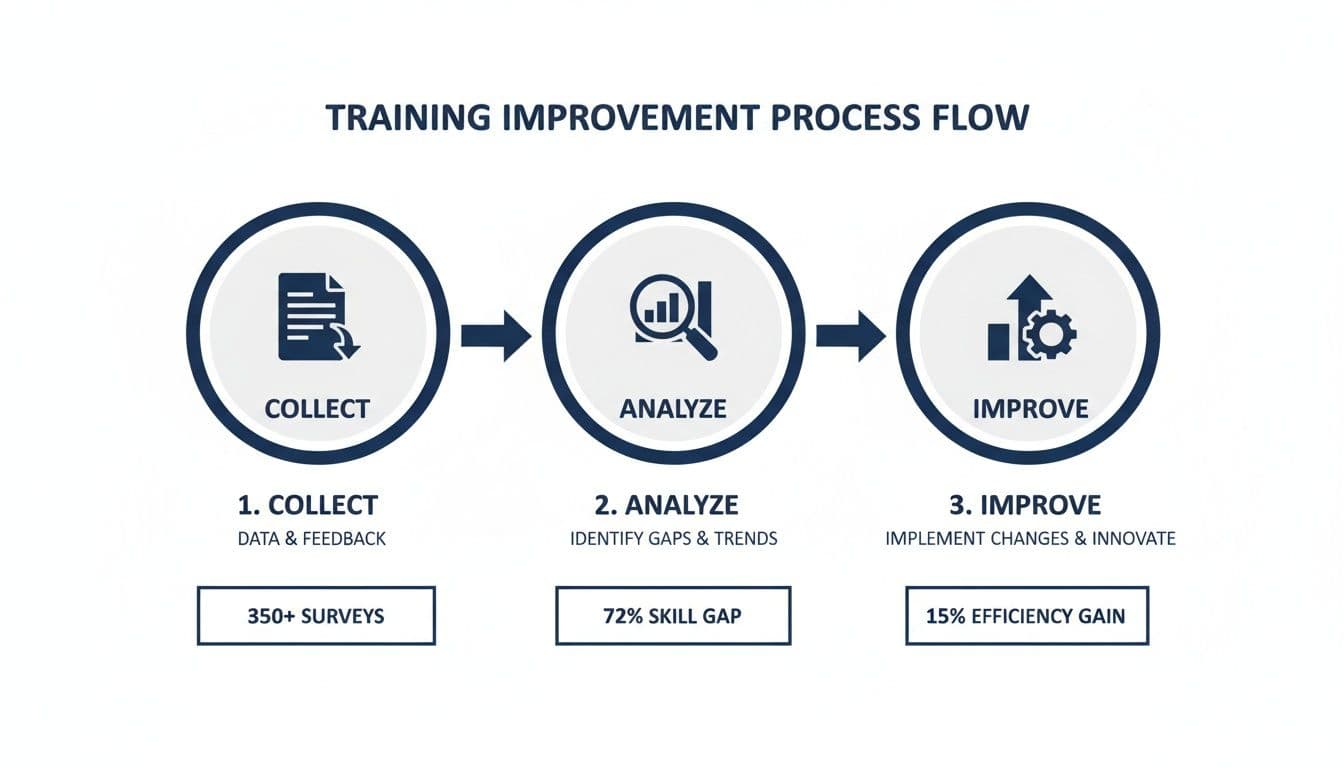

This flow chart breaks down the basic process of turning that feedback into better training.

As you can see, it's a straightforward but powerful loop: collect feedback, analyze it for patterns, and then act on what you find. The secret is to keep this cycle moving. Stale insights don't help anyone.

Activating Insights With AI

This is where things get really interesting. You can take your completed lesson learned template and use it as a direct source document to instruct an AI agent. With a platform like Learniverse, this is no longer just a theory—it’s a practical tool you can use every day.

For example, let's say after reviewing feedback from your last onboarding group, you identified a key problem: “New hires are confused by the Day 3 module on our internal software.” Instead of just adding this to a to-do list, you can take action right away.

Use your documented lessons as a direct command. Prompt the Learniverse AI Agent with a simple instruction like: "Update the onboarding course based on the attached findings. Specifically, clarify the software module with simpler language and add a new interactive quiz."

The AI can then get to work for you, handling several tasks on its own:

Revise Content: It can rewrite confusing sections to make them clearer and easier to understand.

Generate Quizzes: It will create fresh assessment questions that confirm people understand the updated material.

Adjust Learning Paths: The system might suggest—or even automatically slot—the revised module into the existing onboarding sequence.

This kind of workflow turns a manual review process that might take weeks into a fast, AI-powered loop. If you're looking to build out these kinds of systems, learning more about how to automate employee training provides a deeper dive into creating these efficient workflows.

Integrating Data From Other Tools

A strong improvement cycle also pulls in data from more than one place. For training where you have measurable results, like test preparation, specialized software can help you track student progress and scores efficiently. This gives you clean, actionable numbers to feed back into your improvement efforts.

By connecting these different data points, you get a much fuller picture of your training's effectiveness. The qualitative insights from your lessons learned, combined with hard performance metrics, give you the evidence you need to make smart decisions and keep your programs truly effective.

Your Top Questions About Lessons Learned, Answered

Once you start weaving a lessons learned template into your workflow, a few practical questions always pop up. Getting these sorted out early on is key to building a process that actually sticks. Let's tackle the most common ones.

How Often Should We Run a Lessons Learned Session?

There's no magic number here; it really comes down to the rhythm of your training or project. The goal is consistency, not just frequency.

For ongoing programs, think quarterly. If you're running something like new hire onboarding or a continuous leadership program, a quarterly review is a great cadence. It gives you enough time to gather feedback from a few different groups, spot meaningful trends, and avoid burnout from constant meetings.

For one-off events, act fast. After a big annual compliance training or a major project launch, you need to capture insights while they're still fresh. I always recommend holding the session within one to two weeks post-event. Wait any longer, and crucial details start to get fuzzy.

What you're aiming for is to make this a regular, expected part of how you operate—not a fire drill you run only when things go south.

Who Needs to Be in the Room?

The quality of your lessons learned session hinges entirely on the perspectives you bring together. You're aiming for a 360-degree view, so a siloed conversation just won't cut it.

Your invite list should always include a mix of these key players:

Learners: They’re your primary source. Their experience is the whole point of the exercise.

Facilitators/Trainers: They were on the front lines, delivering the content and seeing firsthand what landed and what didn't.

Managers: These are the people who can tell you if the training is actually making a difference on the job.

Course Designers: The instructional design team needs this direct feedback to make smart, effective updates to the material.

Can We Use This Template for More Than Just Training?

Absolutely. In fact, you should. The real power of a good lessons learned template is its versatility. The core idea—pinpointing what worked, what didn't, and what to do next—applies to almost any initiative.

I've seen marketing teams use this exact framework to debrief after a major campaign, development teams use it after a tough software sprint, and client services teams use it to review a new customer implementation. All you have to do is tweak the specific questions to fit the context. It’s a simple but powerful tool for driving improvement across the entire organization.
