A new manager usually notices the problem in the same way. Performance is uneven, deadlines slip, and someone says, “We need more technical training.” That sounds sensible until you ask one basic question: Which technical skills, exactly?

That's where many training efforts go off course. If “technical skills” means everything from Excel formulas to SQL queries to handling customer data correctly, then the training plan becomes vague, the assessments become subjective, and the results become hard to defend. You can't build a useful programme on blurry language.

A precise technical skills definition fixes that. It gives managers a shared vocabulary, helps employees understand what “good” looks like, and makes training measurable instead of aspirational. For anyone responsible for onboarding, compliance, enablement, or capability building, that clarity is the foundation for everything that follows.

Why Your Team's Growth Depends on a Clear Technical Skills Definition

A common L&D scenario looks like this. A department head tells you the team lacks “data skills.” You ask for specifics. Do they mean building dashboards in Tableau, cleaning data in Excel, writing SQL JOIN queries, or interpreting reports for business decisions? The answer is often, “A bit of all of that.”

That answer is honest, but it isn't operational. If the skill need stays broad, the training response usually becomes broad too. People get assigned generic courses, managers tick a completion box, and three months later the original problem is still sitting there.

Vague labels create expensive training mistakes

When teams use loose labels, they tend to make three predictable mistakes:

They train knowledge instead of performance: Employees learn concepts, but still can't perform the task in the system they use every day.

They assess confidence instead of competence: A learner says they “understand databases,” but can't write the query the role requires.

They fund content instead of outcomes: Budget goes into course libraries rather than tightly defined capability gaps.

The economic stakes are visible in California's labour data. According to California Employment Development Department labour market information, tech-related fields accounted for 1.8 million jobs in the state in 2023, or 12% of total employment. The same resources cite a Bay Area Council Economic Institute report in which 78% of Silicon Valley employers named technical skills shortages as their primary barrier to hiring, and a UC Berkeley workforce development study in which companies investing in technical skills training saw 25% higher retention rates.

Those figures matter beyond the tech sector. They signal something practical for any manager. If technical skills are hard to hire for and strongly tied to retention, then defining them well inside your organisation isn't optional. It's one of the few levers you can control.

Practical rule: If a manager can't describe a skill in a way that could be tested, they haven't defined a skill yet. They've described a hope.

Precision changes how managers lead

A clear technical skills definition improves more than training. It sharpens hiring briefs, makes performance conversations fairer, and helps managers coach with specificity.

Compare these two statements:

“You need stronger reporting skills.”

“You need to build a weekly Tableau dashboard from raw sales data without manual rework.”

The second statement is useful because it points to an observable task. It tells the employee what success looks like and tells L&D what to build.

That's why strong training teams don't start with content libraries. They start with definitions. Once the skill is defined precisely, everything else gets easier: assessments, learning paths, manager feedback, and proof of progress.

Decoding Technical Skills From Hard and Soft Skills

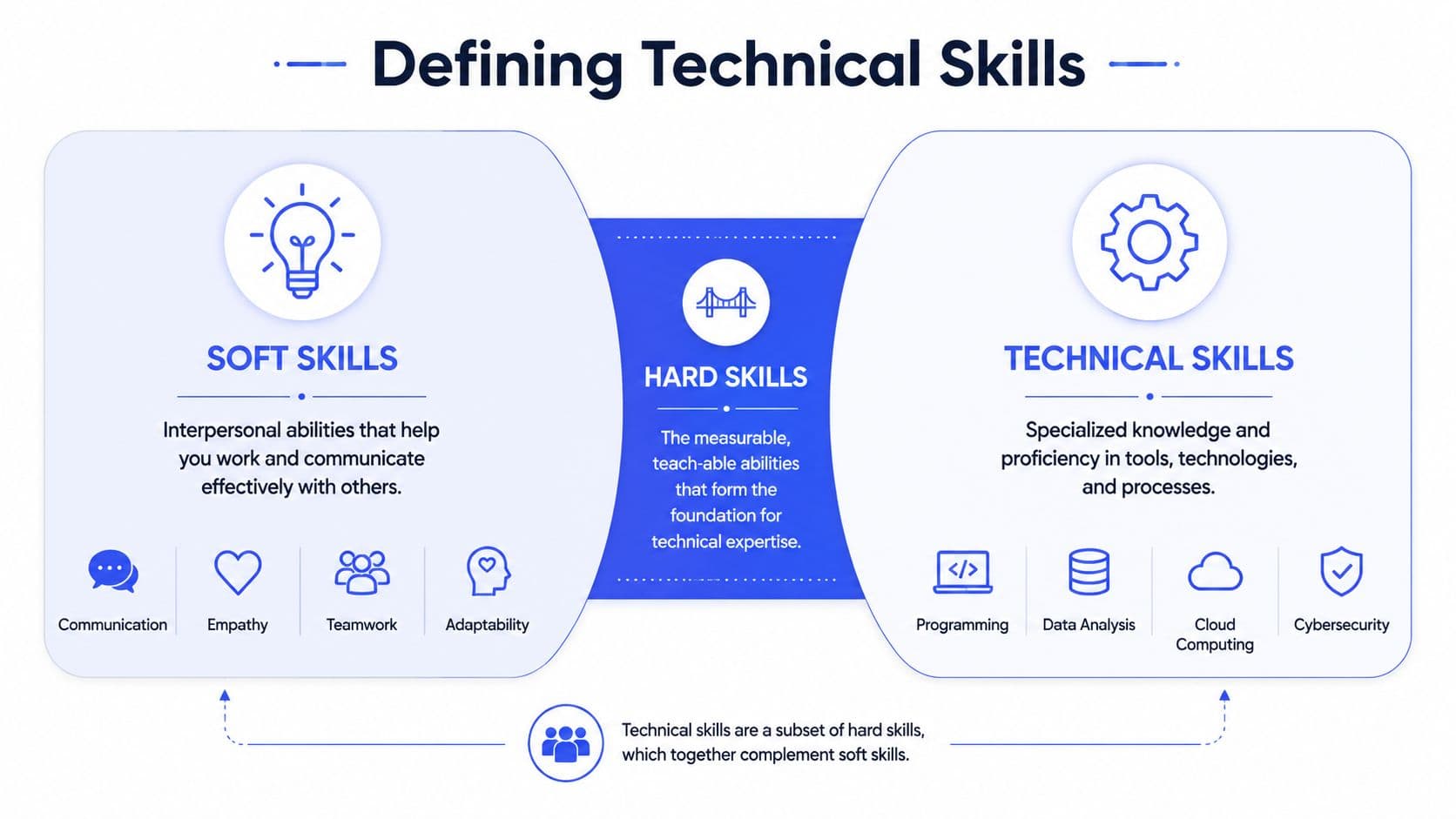

Most confusion starts with category overlap. People often use technical skills, hard skills, and soft skills as if they mean the same thing. They don't.

A simple way to think about it is cooking. A hard skill is broad, like understanding baking principles. A technical skill is narrower, like using a stand mixer correctly, setting oven temperature precisely, and producing a consistent sponge cake from a recipe. A soft skill is how you work with others in the kitchen, such as communicating clearly during a busy service.

A working definition that managers can use

Here's the version I use with line managers:

Technical skills are measurable, job-specific competencies that can be objectively tested in a real or simulated task.

That aligns with the operational framing described in SkillPannel's explanation of technical and non-technical skills, which emphasises that technical skills should be narrowly defined and objectively assessable. “Ability to execute SQL JOIN queries” is a technical skill. “Good with data” isn't.
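To make "objectively assessable" concrete, here is a minimal sketch of how the SQL JOIN example could be checked against a known answer. It uses Python's built-in `sqlite3` module. The table names, sample rows, expected result, and the `grade_join_query` helper are all illustrative assumptions, not part of any cited framework.

```python
import sqlite3

# Hypothetical practice data: table and column names are illustrative only.
SCHEMA = """
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
INSERT INTO customers VALUES (1, 'Avery'), (2, 'Blake'), (3, 'Casey');
INSERT INTO orders VALUES (10, 1, 120.0), (11, 1, 80.0), (12, 2, 50.0);
"""

# Expected rows for the task "total order value per customer who has ordered".
EXPECTED = [("Avery", 200.0), ("Blake", 50.0)]


def grade_join_query(learner_sql: str) -> bool:
    """Run the learner's query on a fresh in-memory database and compare rows."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.executescript(SCHEMA)
        try:
            rows = conn.execute(learner_sql).fetchall()
        except sqlite3.Error:
            return False  # a query that does not run is not a pass
        # Row order is not part of the task, so compare as unordered rows.
        return len(rows) == len(EXPECTED) and set(rows) == set(EXPECTED)
    finally:
        conn.close()


submission = """
SELECT c.name, SUM(o.total)
FROM customers AS c
JOIN orders AS o ON o.customer_id = c.id
GROUP BY c.name
"""
passed = grade_join_query(submission)
```

The point is not the tooling. The pass condition is fixed before anyone attempts the task, which is something "good with data" can never offer.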

The distinction becomes easier when you compare the categories side by side.

| Attribute | Technical Skills | Soft Skills | Hard Skills (Superset) |
| --- | --- | --- | --- |
| What they are | Job-specific, tool-based or process-based abilities | Interpersonal and behavioural abilities | Broad category of teachable, learnable abilities |
| How they're learned | Practice, structured training, hands-on application | Coaching, reflection, practice in social settings | Formal learning, repetition, experience |
| How they're measured | Tests, simulations, practical tasks, output quality | Observation, feedback, behaviour patterns | Varies by skill type |
| Example | Writing SQL queries, configuring firewall rules, creating a Tableau dashboard | Listening, collaboration, adaptability | Includes technical skills plus other teachable skills |
| Manager test | “Can the person perform the task correctly?” | “How does the person work with others?” | “Can the person learn and apply a teachable capability?” |

If you want a broader comparison of these categories in workplace training, this guide to hard skills and soft skills is a useful companion.

Why the distinction matters in training

The category matters because each type of skill needs a different learning design. If the skill is technical, your training should include practice, tools, and objective assessment. If the skill is soft, discussion, coaching, and observation matter more.

The fastest way to weaken a technical training programme is to describe the skill vaguely and then assess it with opinion.

A manager might say, “Our service team needs better system knowledge.” That sounds reasonable, but it still isn't specific enough to train. A better definition would be: “Can create a return authorisation in the CRM, attach the required evidence, and route the case to finance using the approved workflow.”

That version tells you four important things:

The platform involved

The exact task

The quality standard

The workflow context

Once you define a technical skill that clearly, learning content stops being generic. It becomes targeted, relevant, and much easier to automate.
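The four parts listed above can be captured in a small data structure, which makes it easy to spot a definition that is still too vague. This is only a sketch. The `SkillDefinition` class, its field names, and the `missing_parts` helper are hypothetical, and the split of the return authorisation example into four fields is one possible reading.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SkillDefinition:
    platform: str   # the system the task is performed in
    task: str       # the exact action the employee performs
    standard: str   # what counts as done correctly
    workflow: str   # where the work goes next

    def missing_parts(self) -> list[str]:
        """Return the names of any parts that were left blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]


# "Our service team needs better system knowledge" fills in almost nothing.
vague = SkillDefinition(
    platform="", task="better system knowledge", standard="", workflow=""
)

# The return authorisation example names all four parts.
precise = SkillDefinition(
    platform="CRM",
    task="create a return authorisation",
    standard="required evidence attached",
    workflow="routed to finance using the approved workflow",
)

print(vague.missing_parts())
print(precise.missing_parts())
```

A definition that comes back with blanks is not ready to be turned into training or an assessment.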

Technical Skills in Action: Examples Across Industries

Technical skills make the most sense when you can see them in a real role. The mistake many managers make is listing broad areas like “cybersecurity,” “marketing analytics,” or “operations systems.” Those are domains, not skills.

A skill is something a person can do. Usually, it includes a tool, a process, and a standard.

Regulated industry examples

In healthcare, a manager might ask for “better compliance skills.” That's too broad to train properly. Better definitions look like this:

Operating an EHR correctly: Enter patient data into the electronic health record using the required fields and access controls.

Handling sensitive data securely: Apply approved encryption and access procedures when moving patient information between systems.

Following incident procedures: Identify a data handling error and escalate it using the documented internal process.

In financial services, the same principle applies:

Using compliance software: Review flagged transactions in the monitoring platform and document the decision path correctly.

Producing audit-ready records: Export, label, and store required documentation according to internal retention rules.

Applying access controls: Assign user permissions in line with role-based access policies.

Commercial and operational examples

Technical skills aren't only for engineers. Front-line and business teams use them constantly.

For a marketing analyst, technical skills might include:

Building a performance dashboard in Google Analytics 4

Cleaning campaign data in Excel

Writing queries in SQL

Creating a report in Tableau or Power BI

For a franchise operations lead, technical skills might include:

Updating standard operating procedures in the company knowledge base

Running location performance reports in the POS reporting system

Auditing completion records in a training portal

Configuring user permissions for store managers

Digital and engineering examples

In software and IT roles, it's tempting to stop at labels like “cloud” or “programming.” But those still need narrowing.

A more useful definition looks like this:

“Can deploy a containerised application to AWS using the approved pipeline and verify the deployment through standard monitoring checks.”

That's specific enough to assess. It tells the learner what to practise and tells the manager what evidence counts.

Other examples include:

Cybersecurity analyst: Identify and report phishing attempts using the organisation's email security workflow

Developer: Write and test an API endpoint in Python following the team's code review standard

Data analyst: Join tables, validate output, and present a visual summary in Tableau

Support engineer: Use the ticketing platform to diagnose a permissions issue and apply the approved fix path

Notice the pattern. Each example names a real task, not a topic. That's the difference between a catalogue of subjects and a usable technical skills definition.

How to Identify and Assess Key Technical Skills

Once you define the skill clearly, the next challenge is diagnosis. Who can already perform it? Who can perform part of it? Who needs full training from the start?

Managers often rely on self-ratings because they're easy to collect. The problem is that self-ratings measure confidence well, but competence unevenly. Technical skills need stronger evidence.

According to the California Chamber of Commerce business and jobs reporting, 92% of businesses in the state prioritise technical skills in hiring. The same source notes that 55% of employers use certifications such as CompTIA and AWS Certified as proxies for assessment, and links the broader skills gap to an estimated $10 billion to $15 billion annually in lost productivity in California. That reinforces the need for objective measurement instead of guesswork.

Use a skills matrix before you buy training

Start with a simple matrix. List roles down one side and required technical skills across the top. Then mark each employee against a performance level that your managers understand.

A useful matrix doesn't need elaborate scoring. It just needs shared meanings such as:

Needs support: Can't yet perform the task independently

Working level: Can perform the task in common situations

Advanced level: Can handle exceptions, teach others, or improve the process

The value of the matrix isn't the spreadsheet. It's the conversation it forces. Managers have to define what “working level” means.
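As a rough illustration, the matrix and its three levels fit in a few lines of code. The skills, required levels, and employee ratings below are invented for the example, and `find_gaps` is a hypothetical helper rather than a standard tool.

```python
from enum import IntEnum


class Level(IntEnum):
    NEEDS_SUPPORT = 1  # can't yet perform the task independently
    WORKING = 2        # can perform the task in common situations
    ADVANCED = 3       # handles exceptions, teaches others, improves the process


# Hypothetical requirements for one role: skill -> minimum level expected.
REQUIRED = {
    "Write SQL JOIN queries": Level.WORKING,
    "Build a Tableau dashboard": Level.WORKING,
    "Clean campaign data in Excel": Level.ADVANCED,
}

# Hypothetical assessed levels per employee.
MATRIX = {
    "Employee A": {
        "Write SQL JOIN queries": Level.ADVANCED,
        "Build a Tableau dashboard": Level.NEEDS_SUPPORT,
        "Clean campaign data in Excel": Level.ADVANCED,
    },
    "Employee B": {
        "Write SQL JOIN queries": Level.WORKING,
        "Clean campaign data in Excel": Level.WORKING,
    },
}


def find_gaps(assessed: dict[str, Level]) -> dict[str, tuple[Level, Level]]:
    """Return {skill: (current, required)} for each skill below the required level."""
    gaps = {}
    for skill, required in REQUIRED.items():
        # A skill with no assessment on record is treated as "needs support".
        current = assessed.get(skill, Level.NEEDS_SUPPORT)
        if current < required:
            gaps[skill] = (current, required)
    return gaps


for employee, assessed in MATRIX.items():
    for skill, (current, required) in find_gaps(assessed).items():
        print(f"{employee}: {skill} is {current.name}, role needs {required.name}")
```

Treating an unassessed skill as "needs support" is a deliberate choice in this sketch. It keeps missing evidence from being read as competence.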

Practical assessments beat opinion

If the skill is real, create a practical test that mirrors the job. Keep it short and observable.

Examples:

Ask a data analyst to clean a messy dataset and build a basic dashboard.

Ask a support agent to process a mock customer case in the CRM.

Ask a cybersecurity trainee to identify suspicious email indicators in a simulation.

Manager checkpoint: If the assessment looks nothing like the work, you're probably measuring recall rather than capability.

Certifications help, but they're not enough

Certifications can provide a useful baseline, especially for technical areas with established standards. They signal that someone has engaged with a body of knowledge and passed an external test.

But a certification shouldn't be your only evidence. Someone may hold an AWS credential and still struggle with your internal deployment process. Your environment, tools, naming conventions, and approval paths still need assessment.

For teams building a stronger evaluation process, this guide to assessment of competencies offers practical structure.

Build technical evidence into performance reviews

Many performance reviews still focus heavily on goals and behaviours. That's useful, but technical roles also need concrete evidence of task performance.

Include prompts like:

Which systems can this employee use independently?

Which technical tasks require supervision?

What output can they produce to the expected standard?

Where does rework happen most often?

That changes the review from “How are they doing?” to “What can they reliably do?” For technical capability, that's the more useful question.

Building a Modern Technical Upskilling Program

Most technical upskilling programmes fail for a boring reason. They start with content before they settle the skill definition. Teams buy course libraries, assign modules, and hope relevance will emerge somewhere along the way.

It rarely does. Generic content can introduce a topic, but it won't close a precise performance gap inside your systems, workflows, and compliance requirements.

Start with task-level definitions

A modern programme starts lower down. Not with “data literacy” or “cyber awareness,” but with task-level definitions such as:

Generate a monthly Salesforce report with the approved filters

Identify phishing indicators using the company's reporting procedure

Complete a customer refund workflow in the CRM without missing a mandatory field

Apply the documented file naming and storage standard in the compliance repository

This level of precision matters. Research summarised in Coursera's article on technical skills attributes 63% of skill gaps in regulated industries to inadequately defined technical competencies in training programmes. The same source highlights that training leaders reduce compliance risk when they operationalise skills as specific, testable proficiencies with objective validation and ongoing performance tracking.

That finding matches what many L&D teams see in practice. People usually don't fail because they're unwilling to learn. They fail because the organisation never translated “capability” into concrete task expectations.

Build around evidence, not course completion

A strong upskilling programme has a different backbone from a traditional LMS rollout. It should connect each skill to proof.

That usually means:

A defined task: What must the employee do?

A standard: What counts as correct?

A learning asset: What helps them learn it?

A check: How will you verify they can do it?

A reinforcement loop: How will managers support retention on the job?

If any one of those is missing, the programme gets weaker. A course without a standard becomes content consumption. An assessment without reinforcement becomes a temporary event.
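The difference between completion and proof shows up as soon as the two are counted separately. The sketch below assumes a hypothetical `SkillRecord` with one flag for finishing the course and another for passing the practical check. The records themselves are invented.

```python
from dataclasses import dataclass


@dataclass
class SkillRecord:
    employee: str
    skill: str
    course_completed: bool  # the learner finished the learning asset
    check_passed: bool      # the learner performed the task to the standard


def summarise(records: list[SkillRecord]) -> dict[str, float]:
    """Report completion and evidenced capability as separate rates."""
    total = len(records)
    if total == 0:
        return {"completion_rate": 0.0, "evidenced_rate": 0.0}
    completed = sum(r.course_completed for r in records)
    evidenced = sum(r.check_passed for r in records)
    return {
        "completion_rate": completed / total,
        "evidenced_rate": evidenced / total,
    }


# Hypothetical records for one task-level skill.
records = [
    SkillRecord("Employee A", "Complete a customer refund in the CRM", True, True),
    SkillRecord("Employee B", "Complete a customer refund in the CRM", True, False),
    SkillRecord("Employee C", "Complete a customer refund in the CRM", True, False),
    SkillRecord("Employee D", "Complete a customer refund in the CRM", False, False),
]

summary = summarise(records)
print(summary)
```

In this invented data, three of four people finished the course but only one passed the check. A completion report alone would hide that.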

Use source materials your teams already trust

The best technical training often starts with material that already exists inside the business:

SOPs

Process manuals

Job aids

Product documentation

Compliance guides

Knowledge base articles

Those documents contain the substantive work. The challenge is that they're usually written for reference, not learning. Employees only open them when something goes wrong, and managers don't have time to turn every document into a polished course manually.

That's where automation changes the economics of L&D. When a platform can turn source material into structured lessons, checks, and microlearning, your team spends less time formatting and more time refining the skill definition and quality standard.


Design for maintenance, not just launch

A modern upskilling programme also assumes that processes change. Systems get updated, policy language shifts, and role boundaries move.

That means the programme should be easy to refresh. If updating one procedure forces your team to rebuild an entire course manually, the content will drift out of date. But if your training model is tied tightly to a defined skill and linked to current source material, updates become much easier to manage.
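One simple way to link training to current source material is to store a fingerprint of the source document when a lesson is built, then compare it with the live document later. The sketch below uses SHA-256 from Python's standard `hashlib` module. The lesson names, source IDs, and the `stale_lessons` helper are assumptions made for illustration, not a description of how any particular platform works.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Return a stable SHA-256 hex digest of a document's text."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def stale_lessons(
    lessons: dict[str, tuple[str, str]], current_sources: dict[str, str]
) -> list[str]:
    """List lessons whose source document has changed or disappeared.

    lessons maps lesson name -> (source id, fingerprint recorded at build time).
    current_sources maps source id -> the document's current text.
    """
    stale = []
    for name, (source_id, built_from) in lessons.items():
        current = current_sources.get(source_id)
        if current is None or fingerprint(current) != built_from:
            stale.append(name)
    return sorted(stale)


# Hypothetical SOP text at build time and after a later process change.
sop_v1 = "Step 1: open the case. Step 2: attach evidence."
sop_v2 = "Step 1: open the case. Step 2: attach evidence. Step 3: notify finance."
naming = "Use YYYY-MM-DD prefixes."

lessons = {
    "Refund workflow basics": ("sop-refunds", fingerprint(sop_v1)),
    "File naming standard": ("sop-naming", fingerprint(naming)),
}
current_sources = {"sop-refunds": sop_v2, "sop-naming": naming}

needs_refresh = stale_lessons(lessons, current_sources)
print(needs_refresh)
```

A check like this only says that something changed, not what changed. Someone still has to review whether the skill definition or the standard needs updating.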

Training scales when the skill is defined clearly enough that content, assessment, and manager coaching can all point to the same task.

That is the true benefit of precision. It does not just improve learning design. It makes the whole programme easier to maintain and easier to trust.

Automate Competency for a Future-Ready Workforce

When managers say they have a skills gap, they're often describing the symptom, not the cause. The deeper problem is usually definitional. The organisation hasn't translated business needs into specific, testable technical skills.

Once you do that, the rest becomes more straightforward. You can separate technical skills from soft skills, map role requirements cleanly, assess capability with evidence, and build learning around real tasks instead of broad subject areas. That shift moves training from “people completed modules” to “people can now perform the work.”

What future-ready teams do differently

The teams that build capability well tend to share a few habits:

They define skills at task level: They don't stop at labels like “analytics” or “security”.

They ask for proof: They use output, simulation, or direct observation.

They link training to workflow: Learning reflects the tools and standards employees use.

They make updates easy: When process documents change, training changes with them.

If you're leading L&D, HR, enablement, or operations, that's the practical takeaway. Treat the technical skills definition as infrastructure. It isn't admin. It's the design layer that makes hiring, assessment, coaching, and training work together.

There's also a scale advantage. Once your definitions are clear, automation becomes far more useful. Instead of asking a platform to train vague concepts, you can ask it to support named competencies, grounded in your own procedures and standards. That's a very different level of maturity from uploading generic learning content and hoping employees connect the dots themselves.

For teams exploring that shift, it helps to see how employee training automation supports consistency, speed, and maintainability across the training lifecycle.

The strongest capability programmes don't begin with content. They begin with clarity. Define the skill precisely, assess it fairly, and build training around the actual work. That's how organisations create a workforce that can adapt without losing quality.

If you're ready to turn manuals, SOPs, and internal knowledge into structured, scalable training, Learniverse can help you automate the heavy lifting. It's built for teams that want to move faster on onboarding, compliance, and upskilling without getting buried in manual course creation.